An Attention-Based System for Damage Assessment Using Satellite Imagery

Paper and Code

Apr 14, 2020

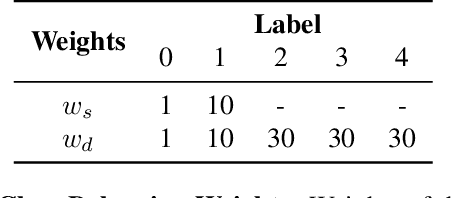

When disaster strikes, accurate situational information and a fast, effective response are critical to saving lives. Widely available, high-resolution satellite images enable emergency responders to estimate the location, cause, and severity of damage. Analyzing the large volume of available satellite imagery quickly and accurately, however, requires an automated approach. In this paper, we present the Siam-U-Net-Attn model, a multi-class deep learning model with an attention mechanism, which assesses the damage levels of buildings given a pair of satellite images depicting a scene before and after a disaster. We evaluate the proposed method on xView2, a large-scale building damage assessment dataset, and demonstrate that it performs accurate damage-scale classification and building segmentation simultaneously.
