AlphaGAN: Fully Differentiable Architecture Search for Generative Adversarial Networks

Paper and Code

Jun 16, 2020

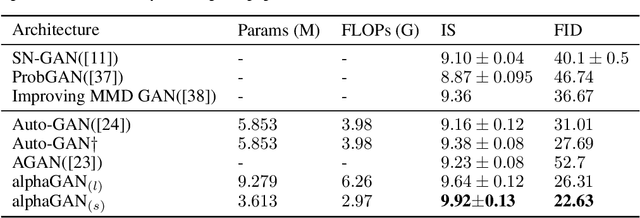

Generative Adversarial Networks (GANs) are formulated as minimax game problems, whereby generators attempt to approach real data distributions by virtue of adversarial learning against discriminators. The intrinsic problem complexity poses the challenge to enhance the performance of generative networks. In this work, we aim to boost model learning from the perspective of network architectures, by incorporating recent progress on automated architecture search into GANs. To this end, we propose a fully differentiable search framework for generative adversarial networks, dubbed alphaGAN. The searching process is formalized as solving a bi-level minimax optimization problem, in which the outer-level objective aims for seeking a suitable network architecture towards pure Nash Equilibrium conditioned on the generator and the discriminator network parameters optimized with a traditional GAN loss in the inner level. The entire optimization performs a first-order method by alternately minimizing the two-level objective in a fully differentiable manner, enabling architecture search to be completed in an enormous search space. Extensive experiments on CIFAR-10 and STL-10 datasets show that our algorithm can obtain high-performing architectures only with 3-GPU hours on a single GPU in the search space comprised of approximate 2 ? 1011 possible configurations. We also provide a comprehensive analysis on the behavior of the searching process and the properties of searched architectures, which would benefit further research on architectures for generative models. Pretrained models and codes are available at https://github.com/yuesongtian/AlphaGAN.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge