AERA Chat: An Interactive Platform for Automated Explainable Student Answer Assessment

Oct 12, 2024

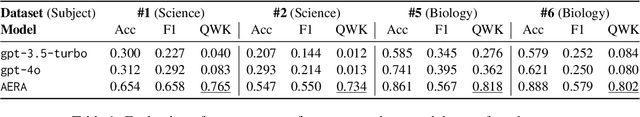

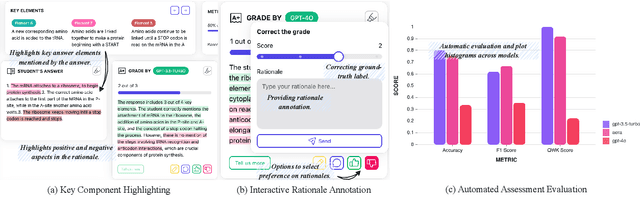

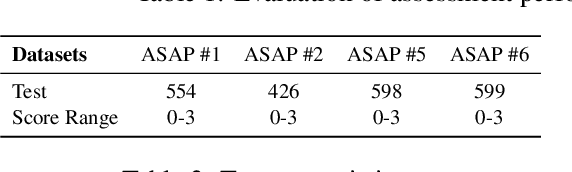

Generating rationales that justify scoring decisions has emerged as a promising approach to enhancing explainability in automated scoring systems. However, because publicly available rationale data is scarce and annotation is costly, existing methods typically rely on noisy rationales generated by large language models (LLMs). To address these challenges, we have developed AERA Chat, an interactive platform that provides visually explained assessments of student answers and streamlines the verification of rationales. Users can input questions and student answers to obtain automated, explainable assessment results from LLMs. The platform's visualization features and evaluation tools help educators with their marking process, and help researchers evaluate the assessment performance and rationale quality of different LLMs; the platform can also serve as a tool for efficient annotation. We evaluated three rationale generation approaches on our platform to demonstrate its capability.
