Adversarial Shapley Value Experience Replay for Task-Free Continual Learning

Aug 31, 2020

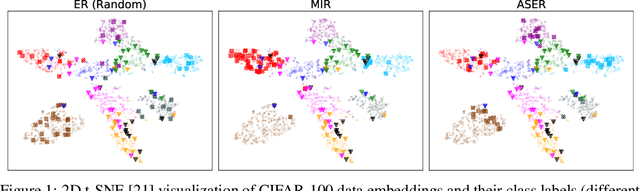

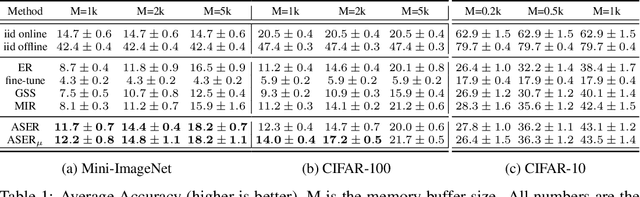

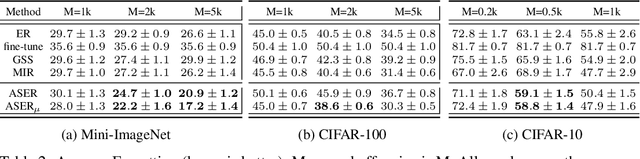

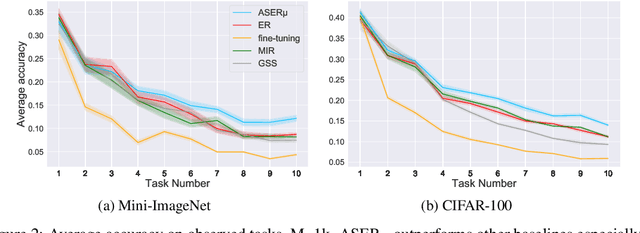

Continual learning is a branch of deep learning that seeks to balance learning stability and plasticity. In this paper, we focus on the task-free setting, where data are streamed online without task metadata or clear task boundaries. A simple and highly effective class of algorithms for this setting is Experience Replay (ER), which selectively stores data samples from previous experience and interleaves memory-based updates with online batch learning updates. Recent advances in ER have proposed novel methods for scoring which samples to store in memory and which memory samples to interleave with online data during learning updates. We contribute a novel Adversarial Shapley value ER (ASER) method that scores memory data samples according to their ability to preserve latent decision boundaries for previously observed classes (to maintain learning stability and avoid forgetting) while interfering with latent decision boundaries of current classes being learned (to encourage plasticity and optimal learning of new class boundaries). Overall, we observe that ASER provides competitive or improved performance on a variety of datasets compared to state-of-the-art ER-based continual learning methods.
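
To make the generic ER loop described in the abstract concrete, the following is a minimal sketch of a fixed-size replay memory and one interleaved update step. It is not the ASER method: the memory is filled by reservoir sampling and replayed samples are drawn uniformly at random, both of which are assumed baseline policies standing in for the scoring rules the abstract mentions. The names `ReplayBuffer`, `replay_step`, and `train_fn` are hypothetical and chosen only for illustration.

```python
import random
from typing import Any, Callable, List, Sequence, Tuple

Sample = Tuple[Any, int]  # (features, class label); illustrative type only


class ReplayBuffer:
    """Fixed-size memory filled by reservoir sampling (assumed baseline policy)."""

    def __init__(self, capacity: int, seed: int = 0) -> None:
        self.capacity = capacity
        self.memory: List[Sample] = []
        self.seen = 0  # number of stream samples observed so far
        self.rng = random.Random(seed)

    def update(self, batch: Sequence[Sample]) -> None:
        """Offer each streamed sample to the memory."""
        for sample in batch:
            self.seen += 1
            if len(self.memory) < self.capacity:
                self.memory.append(sample)
            else:
                # Keep the new sample with probability capacity / seen.
                j = self.rng.randrange(self.seen)
                if j < self.capacity:
                    self.memory[j] = sample

    def retrieve(self, k: int) -> List[Sample]:
        """Draw up to k memory samples uniformly at random (assumed policy)."""
        k = min(k, len(self.memory))
        return self.rng.sample(self.memory, k)


def replay_step(
    buffer: ReplayBuffer,
    online_batch: Sequence[Sample],
    k: int,
    train_fn: Callable[[List[Sample]], None],
) -> List[Sample]:
    """Interleave the online batch with replayed samples, then update memory."""
    combined = list(online_batch) + buffer.retrieve(k)
    train_fn(combined)           # one learning update on online + memory data
    buffer.update(online_batch)  # store new samples only after the update
    return combined
```

In this sketch, a score-based method would replace the uniform draw in `retrieve` (and possibly the reservoir rule in `update`) with its own ranking of memory samples; the surrounding loop would stay the same.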
