Adversarial Alignment for Source Free Object Detection

Paper and Code

Jan 11, 2023

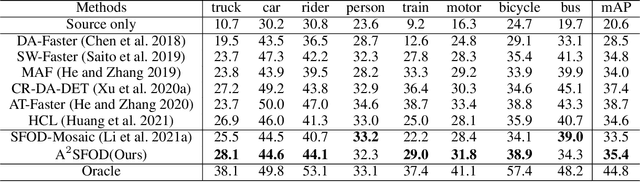

Source-free object detection (SFOD) aims to transfer a detector pre-trained on a label-rich source domain to an unlabeled target domain without seeing source data. While most existing SFOD methods generate pseudo labels via a source-pretrained model to guide training, these pseudo labels usually contain high noises due to heavy domain discrepancy. In order to obtain better pseudo supervisions, we divide the target domain into source-similar and source-dissimilar parts and align them in the feature space by adversarial learning. Specifically, we design a detection variance-based criterion to divide the target domain. This criterion is motivated by a finding that larger detection variances denote higher recall and larger similarity to the source domain. Then we incorporate an adversarial module into a mean teacher framework to drive the feature spaces of these two subsets indistinguishable. Extensive experiments on multiple cross-domain object detection datasets demonstrate that our proposed method consistently outperforms the compared SFOD methods.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge