Adaptive Robust Model Predictive Control via Uncertainty Cancellation

Paper and Code

Dec 02, 2022

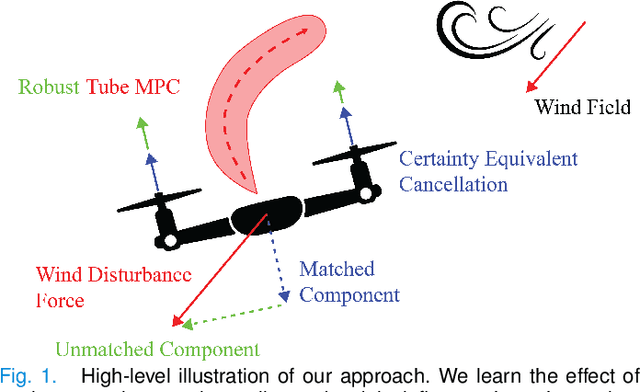

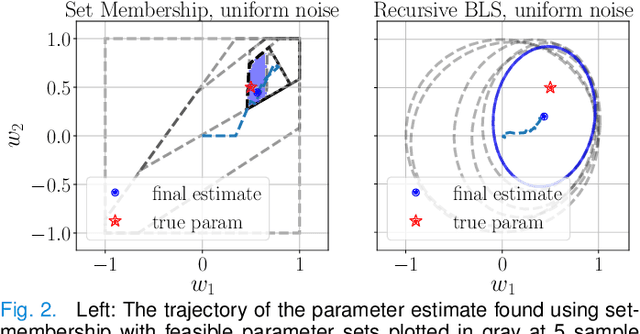

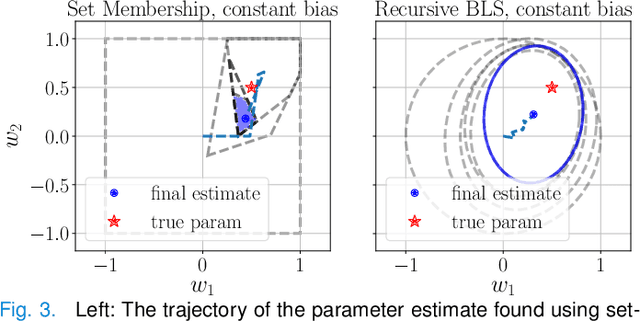

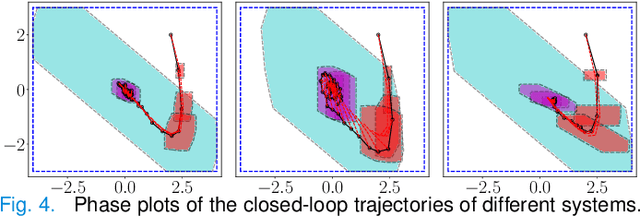

We propose a learning-based robust predictive control algorithm that compensates for significant uncertainty in the dynamics for a class of discrete-time systems that are nominally linear with an additive nonlinear component. Such systems commonly model the nonlinear effects of an unknown environment on a nominal system. We optimize over a class of nonlinear feedback policies inspired by certainty equivalent "estimate-and-cancel" control laws pioneered in classical adaptive control to achieve significant performance improvements in the presence of uncertainties of large magnitude, a setting in which existing learning-based predictive control algorithms often struggle to guarantee safety. In contrast to previous work in robust adaptive MPC, our approach allows us to take advantage of structure (i.e., the numerical predictions) in the a priori unknown dynamics learned online through function approximation. Our approach also extends typical nonlinear adaptive control methods to systems with state and input constraints even when we cannot directly cancel the additive uncertain function from the dynamics. We apply contemporary statistical estimation techniques to certify the system's safety through persistent constraint satisfaction with high probability. Moreover, we propose using Bayesian meta-learning algorithms that learn calibrated model priors to help satisfy the assumptions of the control design in challenging settings. Finally, we show in simulation that our method can accommodate more significant unknown dynamics terms than existing methods and that the use of Bayesian meta-learning allows us to adapt to the test environments more rapidly.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge