Adaptive Recurrent Neural Network via Persistent Memory


May 18, 2018

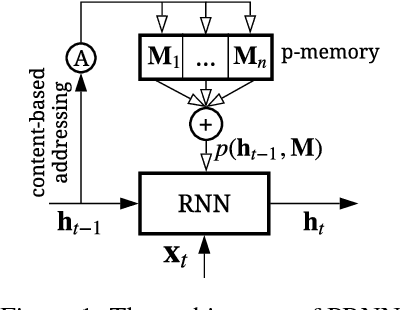

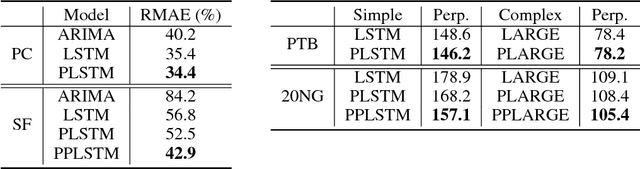

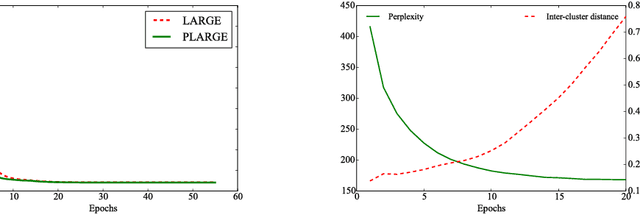

Although the recurrent neural network (RNN) is a powerful tool for modeling sequential data, its performance is inadequate when processing sequences that contain multiple patterns. In this paper, we address this challenge by introducing a persistent memory and constructing an adaptive RNN. The persistent-memory-augmented RNN (termed PRNN) captures the principal patterns in the training sequences and stores them in an external memory. By leveraging the persistent memory, the proposed method adaptively updates its states according to the similarities between encoded inputs and memory slots, which gives it a stronger capacity for assimilating sequences with multiple patterns. Content-based addressing is used to access the memory, and the memory is updated implicitly through gradient descent. Our approach can be further extended by incorporating prior knowledge of the data. Experiments on several datasets demonstrate the effectiveness of our method.
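The content-based addressing step described above can be illustrated with a minimal sketch. The abstract does not specify the similarity measure, the normalization, or how the memory read enters the state update, so cosine similarity, a softmax over slots, and the names `address_memory`, `read_memory`, and `sharpness` are all assumptions made for illustration. In the actual model the memory would be a trainable parameter updated by gradient descent; here it is a fixed list of slots.

```python
import math


def cosine_similarity(u, v):
    """Cosine similarity between two equal-length vectors (assumed measure)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v + 1e-8)  # small constant avoids division by zero


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def address_memory(encoded_input, memory, sharpness=1.0):
    """Return one attention weight per memory slot.

    `sharpness` is a hypothetical scaling factor: larger values
    concentrate the weights on the most similar slot.
    """
    scores = [sharpness * cosine_similarity(encoded_input, slot) for slot in memory]
    return softmax(scores)


def read_memory(weights, memory):
    """Weighted sum of memory slots, one value per feature dimension."""
    dim = len(memory[0])
    return [sum(w * slot[i] for w, slot in zip(weights, memory)) for i in range(dim)]


# Toy memory with three slots of dimension 2 (illustrative values only).
memory = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
encoded = [0.9, 0.1]  # stands in for an encoded input at one time step

weights = address_memory(encoded, memory, sharpness=5.0)
read_vector = read_memory(weights, memory)
```

In this toy setup the first slot receives the largest weight because it points in nearly the same direction as the encoded input. A recurrent cell could then condition its state update on `read_vector`, though how PRNN does this is not stated in the abstract.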
