A Unifying Bayesian Formulation of Measures of Interpretability in Human-AI

Paper and Code

Apr 21, 2021

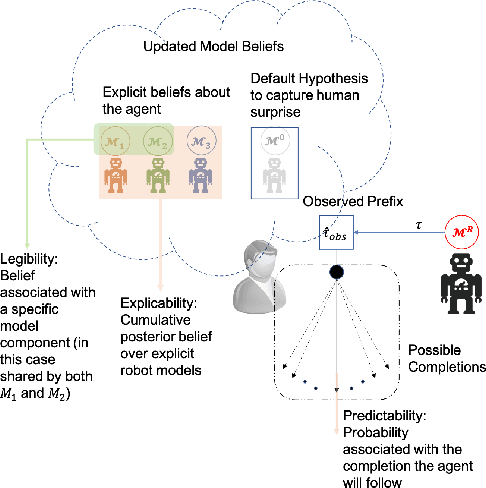

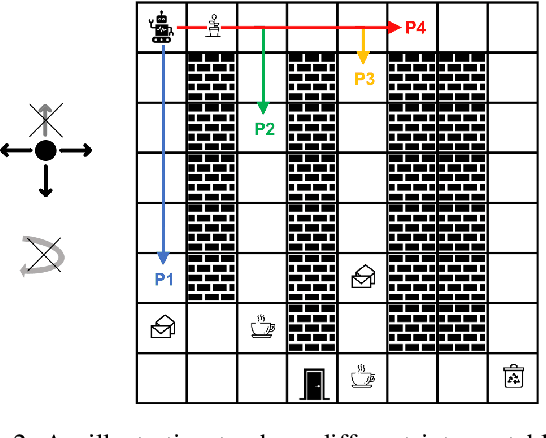

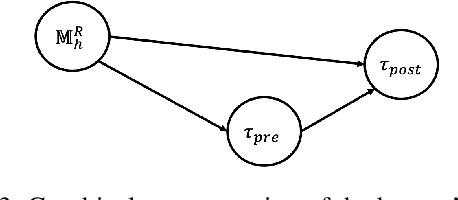

Existing approaches for generating human-aware agent behaviors have considered different measures of interpretability in isolation. Further, these measures have been studied under differing assumptions, thus precluding the possibility of designing a single framework that captures these measures under the same assumptions. In this paper, we present a unifying Bayesian framework that models a human observer's evolving beliefs about an agent and thereby define the problem of Generalized Human-Aware Planning. We will show that the definitions of interpretability measures like explicability, legibility and predictability from the prior literature fall out as special cases of our general framework. Through this framework, we also bring a previously ignored fact to light that the human-robot interactions are in effect open-world problems, particularly as a result of modeling the human's beliefs over the agent. Since the human may not only hold beliefs unknown to the agent but may also form new hypotheses about the agent when presented with novel or unexpected behaviors.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge