A Theoretically Guaranteed Deep Optimization Framework for Robust Compressive Sensing MRI

Paper and Code

Nov 13, 2018

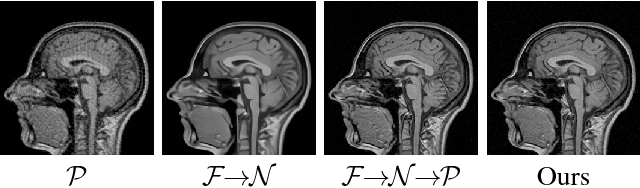

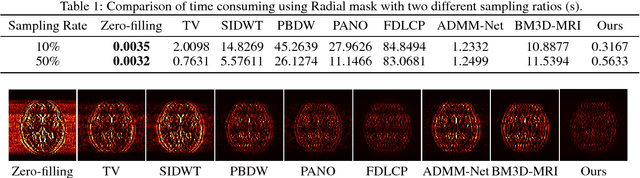

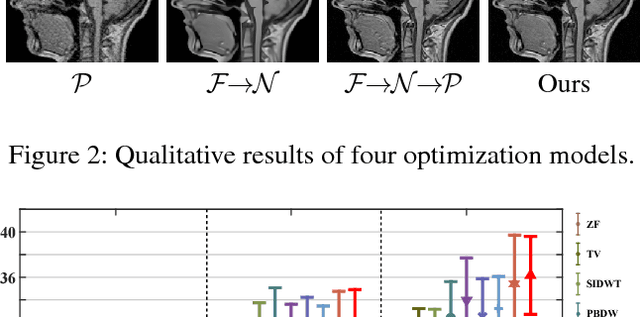

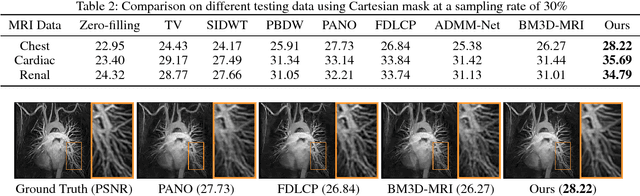

Magnetic Resonance Imaging (MRI) is one of the most dynamic and safe imaging techniques available for clinical applications. However, the rather slow speed of MRI acquisitions limits the patient throughput and potential indi cations. Compressive Sensing (CS) has proven to be an efficient technique for accelerating MRI acquisition. The most widely used CS-MRI model, founded on the premise of reconstructing an image from an incompletely filled k-space, leads to an ill-posed inverse problem. In the past years, lots of efforts have been made to efficiently optimize the CS-MRI model. Inspired by deep learning techniques, some preliminary works have tried to incorporate deep architectures into CS-MRI process. Unfortunately, the convergence issues (due to the experience-based networks) and the robustness (i.e., lack real-world noise modeling) of these deeply trained optimization methods are still missing. In this work, we develop a new paradigm to integrate designed numerical solvers and the data-driven architectures for CS-MRI. By introducing an optimal condition checking mechanism, we can successfully prove the convergence of our established deep CS-MRI optimization scheme. Furthermore, we explicitly formulate the Rician noise distributions within our framework and obtain an extended CS-MRI network to handle the real-world nosies in the MRI process. Extensive experimental results verify that the proposed paradigm outperforms the existing state-of-the-art techniques both in reconstruction accuracy and efficiency as well as robustness to noises in real scene.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge