A Reinforcement Learning Framework for Sequencing Multi-Robot Behaviors

Paper and Code

Sep 13, 2019

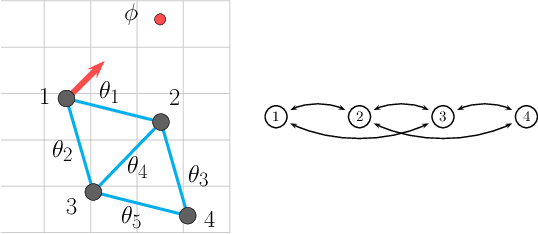

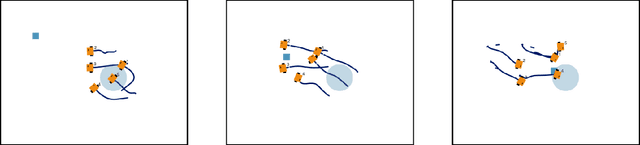

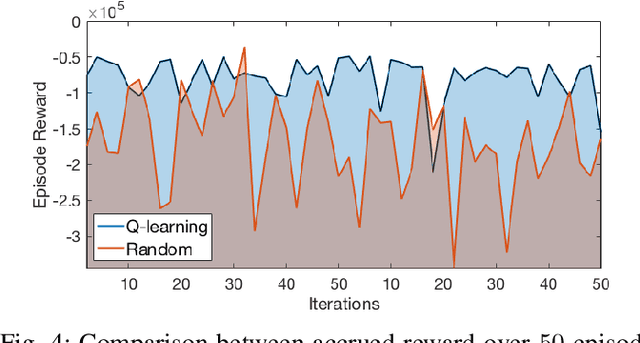

Given a list of behaviors and associated parameterized controllers for solving different individual tasks, we study the problem of selecting an optimal sequence of coordinated behaviors in multi-robot systems for completing a given mission that cannot be handled by any single behavior. We are also interested in the case where part of the information about the underlying mission is unknown, so the robots must cooperatively learn this information through their course of actions. Such a problem can be formulated as an optimal decision problem in multi-robot systems; however, it is in general intractable due to modeling imperfections and the curse of dimensionality of the decision variables. To circumvent these issues, we first consider an alternate formulation of the original problem by introducing a sequence of behavior switching times. Our main contribution is a novel reinforcement-learning-based method that combines Q-learning and online gradient descent to solve this reformulated problem. In particular, the optimal sequence of the robots' behaviors is found using Q-learning, while the optimal parameters of the associated controllers are obtained through online gradient descent. Finally, to illustrate the effectiveness of the proposed method, we implement it on a team of differential-drive robots for two different missions: convoy protection and object manipulation.
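
The division of labor described above (Q-learning for the behavior sequence, online gradient descent for the controller parameters) can be illustrated with a minimal toy sketch. This is not the paper's algorithm or code: the three-stage mission, the quadratic stage cost, the `target` and `base` tables, and the function name `run_sketch` are all hypothetical, and the sketch assumes the mission is posed as cost minimization with a fixed number of switching stages.

```python
import random


def run_sketch(episodes=2000, seed=0):
    """Toy illustration: tabular Q-learning picks one behavior per stage,
    while online gradient descent tunes that behavior's scalar parameter."""
    rng = random.Random(seed)
    n_stages, n_behaviors = 3, 2

    # Hypothetical mission model (an assumption, not from the paper):
    # at stage s, behavior b with parameter theta incurs the cost
    # (theta - target[s][b])**2 + base[s][b].
    target = [[1.0, -1.0], [0.5, 2.0], [-0.5, 0.0]]
    base = [[0.0, 1.0], [1.0, 0.0], [0.0, 1.0]]

    q = [[0.0] * n_behaviors for _ in range(n_stages)]      # cost-to-go estimates
    theta = [[0.0] * n_behaviors for _ in range(n_stages)]  # controller parameters
    alpha, eta, eps = 0.1, 0.1, 0.2  # Q step size, gradient step size, exploration rate

    for _ in range(episodes):
        for s in range(n_stages):
            # Epsilon-greedy choice of the behavior with the lowest estimated cost.
            if rng.random() < eps:
                b = rng.randrange(n_behaviors)
            else:
                b = min(range(n_behaviors), key=lambda a: q[s][a])

            # Observe the stage cost under the current parameter.
            err = theta[s][b] - target[s][b]
            cost = err ** 2 + base[s][b]

            # Online gradient descent step on the chosen behavior's parameter.
            theta[s][b] -= eta * 2.0 * err

            # Q-learning update (undiscounted, finite horizon, minimizing cost).
            next_value = min(q[s + 1]) if s + 1 < n_stages else 0.0
            q[s][b] += alpha * (cost + next_value - q[s][b])

    policy = [min(range(n_behaviors), key=lambda a: q[s][a]) for s in range(n_stages)]
    return policy, theta, q
```

Calling `run_sketch()` returns the greedy behavior index per stage together with the learned parameters and Q-table. In this toy model each behavior's parameter drifts toward its `target` value as it is exercised, so the Q-values end up reflecting mainly the `base` costs; a real multi-robot mission would replace the closed-form cost with measurements from the robots.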
