A Novel Approach for Correcting Multiple Discrete Rigid In-Plane Motions Artefacts in MRI Scans

Jun 29, 2020

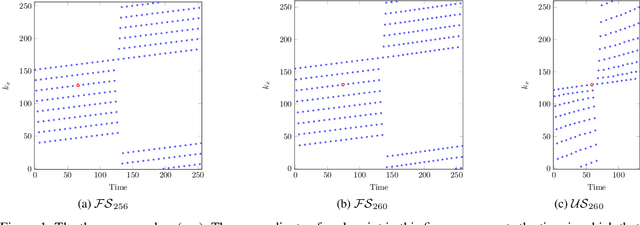

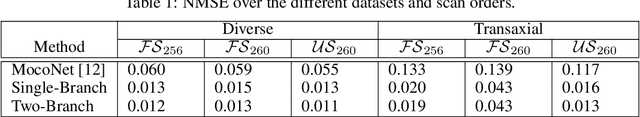

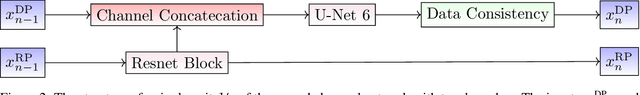

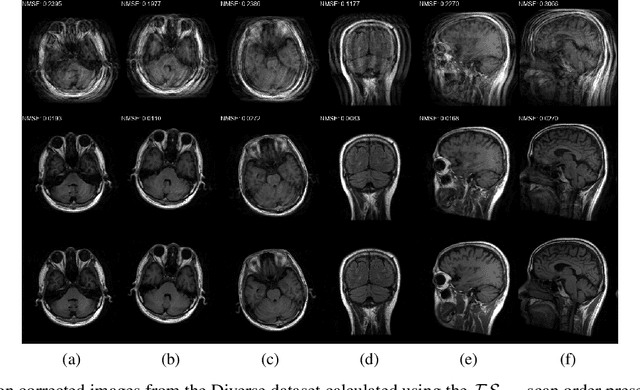

Artefacts caused by patient motion during an MRI scan occur frequently in practice, often rendering the scans clinically unusable and requiring a re-scan. While many methods have been employed to ameliorate the effects of patient motion, they often fall short. In this paper we propose a novel method for removing motion artefacts using a deep neural network with two input branches that discriminates between patient poses using the timing of the motion. The first branch receives the subset of the $k$-space data collected during a single patient pose, and the second branch receives the remaining part of the collected $k$-space data. The proposed method can be applied to artefacts generated by multiple movements of the patient. Furthermore, it can correct motion when $k$-space has been under-sampled to shorten the scan time, as is common with methods such as parallel imaging or compressed sensing. Experimental results on both simulated and real MRI data show the efficacy of our approach.
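To make the two-branch input split concrete, the following is a minimal sketch of how $k$-space data might be partitioned by motion timing. It is not the network or the simulation pipeline from the paper. It assumes a Cartesian acquisition in which the rows of $k$-space are acquired in order, a single instantaneous movement before a known row, and a pure translation (modelled as a circular shift) as the rigid in-plane motion. The function name `simulate_and_split` and its parameters `move_line` and `shift` are hypothetical.

```python
import numpy as np

def simulate_and_split(image, move_line, shift=(3, 5)):
    """Simulate one discrete in-plane translation and split k-space by timing.

    Assumption: rows of k-space are acquired in order 0..N-1 and the pose
    changes instantly just before row `move_line`.
    """
    # Pose A is the original image; pose B is a circularly shifted copy,
    # standing in for a rigid in-plane translation.
    moved = np.roll(image, shift, axis=(0, 1))
    k_a = np.fft.fftshift(np.fft.fft2(image))
    k_b = np.fft.fftshift(np.fft.fft2(moved))

    # Rows acquired before the movement belong to pose A, the rest to pose B.
    rows = np.arange(image.shape[0])[:, None]
    pose_a_mask = rows < move_line
    corrupted = np.where(pose_a_mask, k_a, k_b)

    # Zero-filled inputs for the two branches: one holds the data from a
    # single pose, the other holds the remaining k-space data.
    branch_one = np.where(pose_a_mask, corrupted, 0)
    branch_two = np.where(pose_a_mask, 0, corrupted)
    return corrupted, branch_one, branch_two

rng = np.random.default_rng(0)
image = rng.random((8, 8))
corrupted, branch_one, branch_two = simulate_and_split(image, move_line=5)
```

In this sketch the two branch inputs are complementary, so their sum reproduces the motion-corrupted $k$-space. Handling several movements would require more than one timing boundary, and rotations would need a different motion model; neither is shown here.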
