A Neural-Symbolic Approach to Design of CAPTCHA

Sep 25, 2018

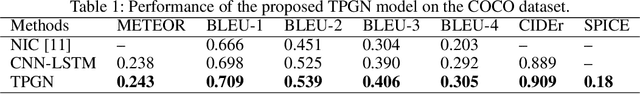

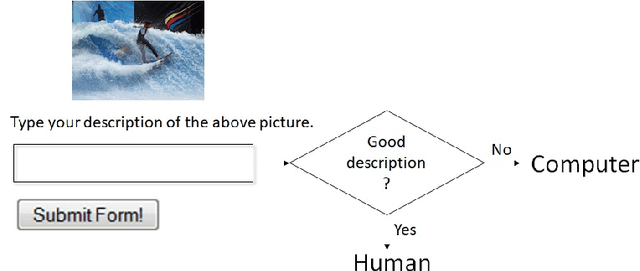

CAPTCHAs based on reading text are susceptible to machine-learning-based attacks because of recent significant advances in deep learning (DL). To address this, this paper promotes image/visual-captioning-based CAPTCHAs, which are robust against machine-learning-based attacks. To develop such CAPTCHAs, the paper proposes a new image captioning architecture that exploits tensor product representations (TPR), a structured neural-symbolic framework developed in cognitive science over the past 20 years, with the aim of integrating DL with explicit language structures and rules. We call it the Tensor Product Generation Network (TPGN). The key ideas of TPGN are: 1) unsupervised learning of role-unbinding vectors of words via a TPR-based deep neural network, and 2) integration of TPR with typical DL architectures, including Long Short-Term Memory (LSTM) models. The novelty of our approach lies in its ability to generate a sentence and to extract partial grammatical structure of that sentence using role-unbinding vectors, which are obtained in an unsupervised manner. Experimental results demonstrate the effectiveness of the proposed approach.
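To make the role-unbinding idea concrete, the following is a minimal sketch of generic TPR binding and unbinding in NumPy. It is not the TPGN architecture: in TPGN the unbinding vectors are learned by the network, whereas here they are computed in closed form from known, linearly independent role vectors. The function names (`bind`, `unbinding_vectors`, `unbind`), the dimensions, and the random data are all assumptions made for illustration.

```python
import numpy as np

def bind(fillers, roles):
    """Build a TPR S = sum_i f_i r_i^T.

    fillers: (n, d_f) array, one filler (e.g. word) vector per row.
    roles:   (n, d_r) array, one role (e.g. position) vector per row.
    Returns S with shape (d_f, d_r).
    """
    return fillers.T @ roles

def unbinding_vectors(roles):
    """Return dual vectors u_j (rows) such that r_i . u_j = 1 if i == j else 0.

    Assumes the role vectors are linearly independent.
    """
    return np.linalg.pinv(roles).T

def unbind(S, u):
    """Recover the filler bound to the role whose dual vector is u."""
    return S @ u

# Hypothetical toy data: 3 words with 5-dim fillers and 4-dim roles.
rng = np.random.default_rng(0)
fillers = rng.normal(size=(3, 5))
roles = rng.normal(size=(3, 4))

S = bind(fillers, roles)          # one matrix encoding the whole "sentence"
U = unbinding_vectors(roles)      # one unbinding vector per role
recovered = np.stack([unbind(S, u) for u in U])
```

Under these assumptions, multiplying the sentence encoding `S` by the unbinding vector for a given role returns the filler that was bound to that role, so `recovered` matches `fillers` up to floating-point error.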
