A Multi-level Neural Network for Implicit Causality Detection in Web Texts


Sep 08, 2019

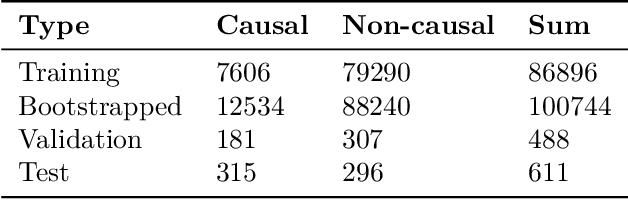

Mining causality from text is a complex and crucial natural language understanding task. Most early attempts to solve it fall into two categories: 1) using co-occurrence frequency and world knowledge to detect causality; 2) extracting cause-effect pairs directly from connectives and syntactic patterns. However, because causality has diverse linguistic expressions, these methods inevitably introduce noisy data and overlook implicit expressions. In this paper, we present a neural causality detection model, the Multi-level Causality Detection Network (MCDN), to address this problem. Specifically, we adopt multi-head self-attention to acquire semantic features at the word level and integrate a novel Relation Network to infer causality at the segment level. To the best of our knowledge, this is the first time the Relation Network has been applied to causality tasks. The experimental results on the AltLex dataset demonstrate that: a) MCDN is highly effective for ambiguous and implicit causality inference; b) compared with regular text classification, causality detection requires stronger inference capability; c) the proposed approach achieves state-of-the-art performance.
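To make the two levels concrete, here is a minimal pure-Python sketch under stated assumptions. It is not the authors' MCDN implementation. The single-head attention with identity projections (standing in for multi-head self-attention), the mean-pooling of words into segments, the three-span segmentation, the layer sizes and the random weights are all hypothetical choices for illustration. The segment-level step follows the general Relation Network form RN(O) = f_φ(Σ_{i,j} g_θ(o_i, o_j)) of Santoro et al. (2017), with g_θ as a one-layer ReLU network and f_φ as a linear layer followed by a sigmoid.

```python
import math
import random


def matvec(W, x):
    # Multiply matrix W (list of rows) by vector x.
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def relu(v):
    return [max(0.0, a) for a in v]


def sigmoid(x):
    # Numerically stable logistic function.
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def self_attention(X):
    """Word level (assumption): single-head scaled dot-product self-attention
    with Q = K = V = X, a simplification of multi-head attention."""
    d = len(X[0])
    out = []
    for q in X:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in X]
        m = max(scores)  # subtract the max for a stable softmax
        exps = [math.exp(s - m) for s in scores]
        z = sum(exps)
        weights = [e / z for e in exps]
        out.append([sum(w * v[j] for w, v in zip(weights, X)) for j in range(d)])
    return out


def mean_pool(vectors):
    # Hypothetical segment encoder: average the word vectors in a span.
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]


def relation_network(objects, g_W, f_w):
    """Segment level: f_phi(sum over all ordered pairs of g_theta(o_i, o_j))."""
    total = [0.0] * len(g_W)
    for oi in objects:
        for oj in objects:
            h = relu(matvec(g_W, oi + oj))  # g_theta on the concatenated pair
            total = [t + a for t, a in zip(total, h)]
    return sigmoid(sum(w * t for w, t in zip(f_w, total)))  # f_phi


def causality_score(words, spans, g_W, f_w):
    # Hypothetical pipeline: attend over words, pool spans, relate segments.
    attended = self_attention(words)
    segments = [mean_pool(attended[s:e]) for s, e in spans]
    return relation_network(segments, g_W, f_w)


rng = random.Random(0)
d, hidden = 4, 8
words = [[rng.uniform(-1, 1) for _ in range(d)] for _ in range(9)]
spans = [(0, 3), (3, 6), (6, 9)]  # hypothetical three-segment split
g_W = [[rng.uniform(-0.5, 0.5) for _ in range(2 * d)] for _ in range(hidden)]
f_w = [rng.uniform(-0.5, 0.5) for _ in range(hidden)]
print(f"P(causal) = {causality_score(words, spans, g_W, f_w):.3f}")
```

Because the relation term sums g_θ over every ordered pair of segments, the score does not depend on the order in which segments are listed; the network has to infer the relation from segment content rather than position. A trained model would learn g_θ and f_φ (and the attention projections) instead of using random weights.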
