A game method for improving the interpretability of convolution neural network

Oct 21, 2019

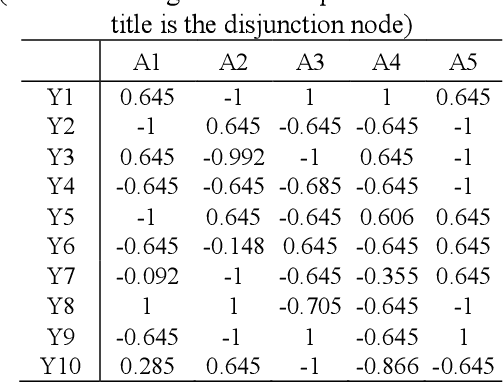

Achieving real artificial intelligence has long been a focus of machine learning researchers, especially in deep learning. However, deep neural networks are hard to understand and explain, and at times seem almost metaphysical. We believe the reason is that such a network is essentially a perceptual model. To carry out complex intelligent activities starting from simple perception, it is therefore necessary to construct another, interpretable logical network that forms accurate and reasonable responses to, and explanations of, external things. Researchers such as Bolei Zhou and Quanshi Zhang have found many explanatory rules for the feature extraction stage of convolutional neural networks. Researchers such as Marco Gori have also made great efforts to improve the interpretability of the fully connected layers, but this problem remains very difficult. This paper first analyzes the reason for this difficulty. It then proposes a method for constructing a logical network based on the fully connected layers and for extracting the logical relations between the inputs and outputs of those layers. A game process between perceptual learning and logical abstract cognitive learning is implemented to improve the interpretability of both the deep learning process and the deep learning model. The benefits of our approach are illustrated on benchmark data sets and in real-world experiments.
