Zhisheng Lu

Efficient Transformer for Single Image Super-Resolution

Aug 25, 2021

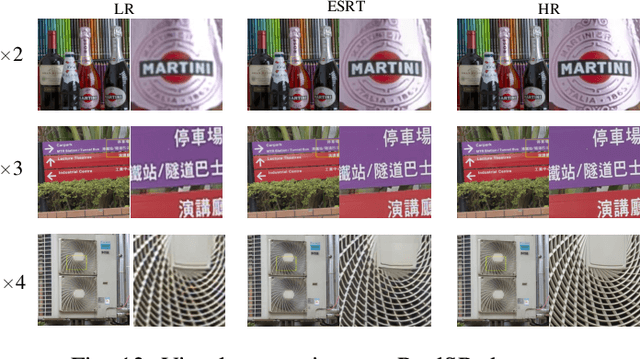

Abstract: Single image super-resolution (SISR) has made great strides with the development of deep learning. However, most existing studies focus on building increasingly complex neural networks with a massive number of layers, which incurs heavy computational cost and memory consumption. Recently, as Transformers have yielded brilliant results in NLP tasks, more and more researchers have started to explore applying Transformers to computer vision tasks. However, because vision Transformers have a heavy computational cost and high GPU memory occupation, such networks cannot be made very deep. To address this problem, we propose a novel Efficient Super-Resolution Transformer (ESRT) for fast and accurate image super-resolution. ESRT is a hybrid Transformer in which a CNN-based SR network is placed at the front to extract deep features. Specifically, ESRT consists of two backbones: a lightweight CNN backbone (LCB) and a lightweight Transformer backbone (LTB). LCB is a lightweight SR network that extracts deep SR features at a low computational cost by dynamically adjusting the size of the feature map. LTB is made up of an efficient Transformer (ET) with a small GPU memory occupation, which benefits from the novel efficient multi-head attention (EMHA). In EMHA, a feature split module (FSM) is proposed to split the long sequence into sub-segments, and the attention operation is then applied to each sub-segment separately. This module significantly decreases GPU memory occupation. Extensive experiments show that our ESRT achieves competitive results. Compared with the original Transformer, which occupies 16057M of GPU memory, the proposed ET occupies only 4191M of GPU memory while achieving better performance.
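The feature split idea behind EMHA can be illustrated with a minimal NumPy sketch. It assumes the sequence length divides evenly by the number of splits and omits the query/key/value projections and any other components of the actual EMHA; `split_attention` and `num_splits` are hypothetical names, not the authors' code. The point it shows is that attending within s sub-segments of length N/s shrinks each head's score matrix from N x N entries to s x (N/s) x (N/s) = N^2/s entries.

```python
import numpy as np


def softmax(x, axis=-1):
    # Numerically stable softmax along the given axis.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def split_attention(q, k, v, num_splits):
    """Scaled dot-product attention applied independently to each sub-segment.

    q, k, v: arrays of shape (num_heads, seq_len, head_dim).
    Assumption of this sketch: seq_len is divisible by num_splits.
    Returns the attended output and the number of attention-score entries
    (a proxy for the memory the score matrices occupy).
    """
    h, n, d = q.shape
    assert n % num_splits == 0, "seq_len must be divisible by num_splits"
    seg = n // num_splits
    # Contiguous tokens form each segment: (heads, splits, seg_len, head_dim).
    qs = q.reshape(h, num_splits, seg, d)
    ks = k.reshape(h, num_splits, seg, d)
    vs = v.reshape(h, num_splits, seg, d)
    # Each segment attends only to itself: scores are (heads, splits, seg, seg).
    scores = qs @ ks.swapaxes(-1, -2) / np.sqrt(d)
    out = softmax(scores) @ vs
    # Restore the original (heads, seq_len, head_dim) layout.
    return out.reshape(h, n, d), scores.size


rng = np.random.default_rng(0)
q, k, v = (rng.standard_normal((2, 64, 8)) for _ in range(3))
_, full_entries = split_attention(q, k, v, num_splits=1)
_, split_entries = split_attention(q, k, v, num_splits=4)
print(full_entries, split_entries)
```

With `num_splits=1` this reduces to ordinary full attention; with four splits the script prints `8192 2048`, a 4x reduction in score entries. The trade-off is that tokens in different sub-segments no longer attend to each other directly.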
