Zheyan Zhang

DiffMVR: Diffusion-based Automated Multi-Guidance Video Restoration

Nov 27, 2024

Abstract: In this work, we address a challenge in video inpainting: reconstructing occluded regions in dynamic, real-world scenarios. Motivated by the need for continuous human motion monitoring in healthcare settings, where facial features are frequently obscured, we propose a diffusion-based video-level inpainting model, DiffMVR. Our approach introduces a dynamic dual-guided image prompting system, leveraging adaptive reference frames to guide the inpainting process. This enables the model to capture both fine-grained details and smooth transitions between video frames, offering precise control over inpainting direction and significantly improving restoration accuracy in challenging, dynamic environments. DiffMVR represents a significant advancement in the field of diffusion-based inpainting, with practical implications for real-time applications in various dynamic settings.

MeshingNet: A New Mesh Generation Method based on Deep Learning

Apr 15, 2020

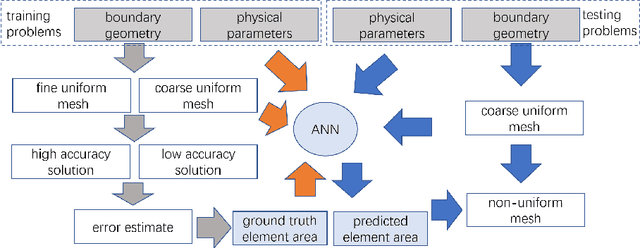

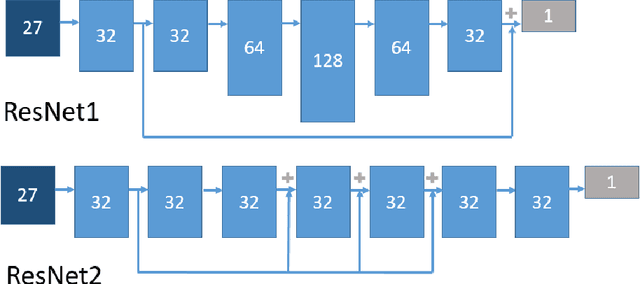

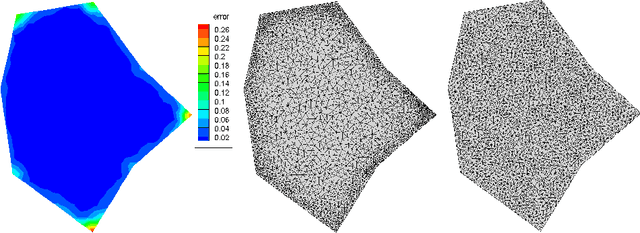

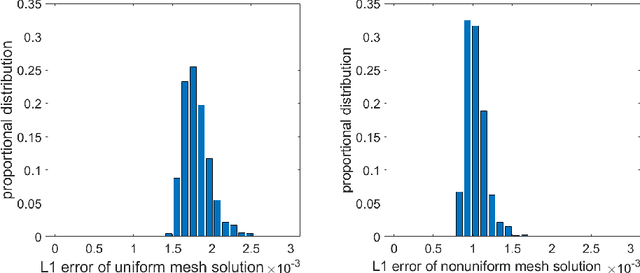

Abstract: We introduce a novel approach to automatic unstructured mesh generation using machine learning to predict an optimal finite element mesh for a previously unseen problem. The framework that we have developed is based around training an artificial neural network (ANN) to guide standard mesh generation software, based upon a prediction of the required local mesh density throughout the domain. We describe the training regime that is proposed, based upon the use of *a posteriori* error estimation, and discuss the topologies of the ANNs that we have considered. We then illustrate performance using two standard test problems, a single elliptic partial differential equation (PDE) and a system of PDEs associated with linear elasticity. We demonstrate the effective generation of high-quality meshes for arbitrary polygonal geometries and a range of material parameters, using a variety of user-selected error norms.
