Zhangling Chen

Label-Removed Generative Adversarial Networks Incorporating with K-Means

Feb 19, 2019

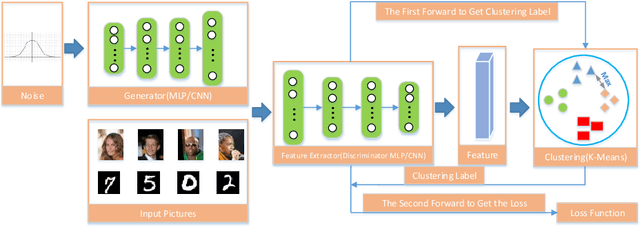

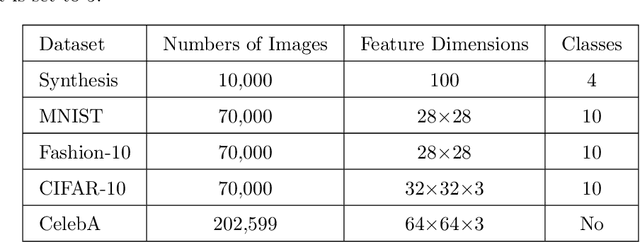

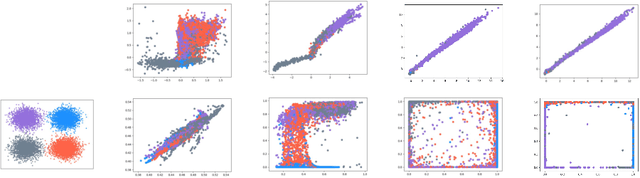

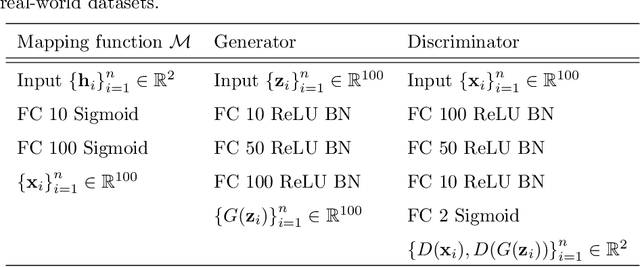

Abstract: Generative Adversarial Networks (GANs) have achieved great success in generating realistic images. Most of these models are conditional, although acquiring class labels is expensive and time-consuming in practice. To reduce the dependence on labeled data, we propose an unconditional generative adversarial model, called K-Means-GAN (KM-GAN), which incorporates the idea of updating centers in K-Means into GANs. Specifically, we redesign the framework of GANs by applying K-Means to the features extracted from the discriminator. With the labels obtained from K-Means, we propose new objective functions from the perspective of deep metric learning (DML). Unlike previous works, KM-GAN treats the discriminator as a feature extractor rather than a classifier, while the use of K-Means makes the discriminator's features more representative. Experiments on various datasets, such as MNIST, Fashion-10, CIFAR-10 and CelebA, show that the quality of samples generated by KM-GAN is comparable to that of some conditional generative adversarial models.
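The step of obtaining labels by running K-Means on discriminator features can be illustrated with a plain K-Means loop (assignment step, then center update). This is a minimal sketch, not the authors' implementation: the feature vectors below are hypothetical hand-written stand-ins for discriminator outputs, and the function names `squared_distance` and `kmeans` are illustrative only.

```python
import random


def squared_distance(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def kmeans(features, k, iters=20, seed=0):
    """Plain K-Means: returns (cluster index per feature, final centers).

    Hypothetical helper: in KM-GAN the `features` would be vectors
    extracted by the discriminator; here they are plain lists of floats.
    """
    rng = random.Random(seed)
    # Initialize centers by sampling k distinct feature vectors.
    centers = [list(f) for f in rng.sample(features, k)]
    labels = [0] * len(features)
    for _ in range(iters):
        # Assignment step: each feature takes the index of its nearest center.
        labels = [
            min(range(k), key=lambda j: squared_distance(f, centers[j]))
            for f in features
        ]
        # Update step: each center moves to the mean of its assigned features.
        for j in range(k):
            members = [f for f, lab in zip(features, labels) if lab == j]
            if members:
                dim = len(members[0])
                centers[j] = [sum(m[d] for m in members) / len(members) for d in range(dim)]
    return labels, centers


# Usage: two well-separated groups of stand-in "discriminator features".
features = [[0.0, 0.0], [0.1, 0.0], [5.0, 5.0], [5.1, 5.0]]
labels, centers = kmeans(features, k=2)
print(labels)
```

The cluster indices act as pseudo-labels in place of human-provided class labels; how KM-GAN then feeds them into its DML-based objectives is not specified in the abstract and is not modeled here.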

Exponential Discriminative Metric Embedding in Deep Learning

Mar 07, 2018

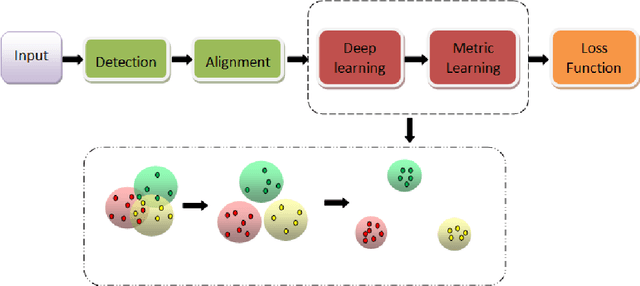

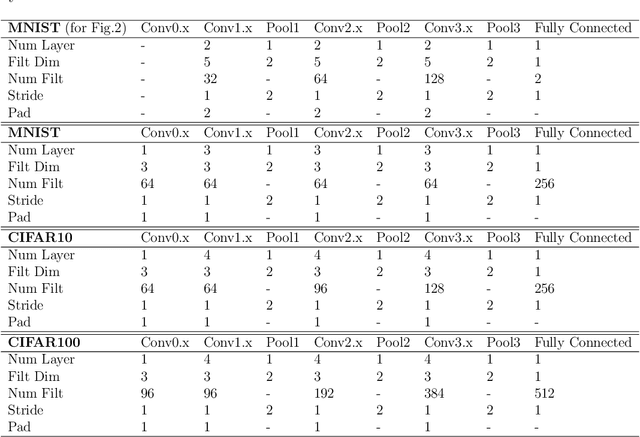

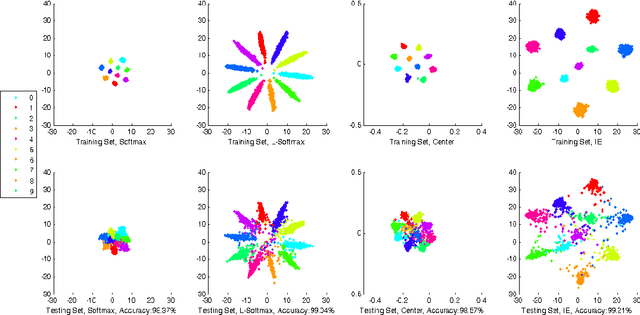

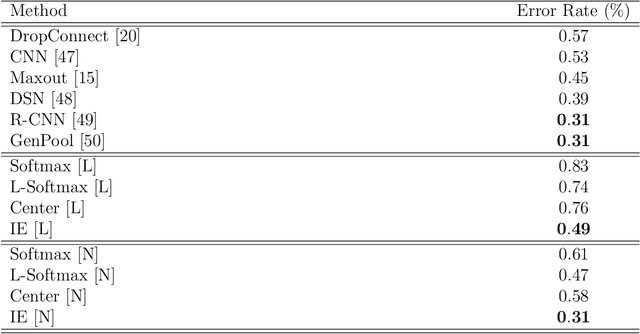

Abstract: With the remarkable recent success of Convolutional Neural Networks (CNNs) in object recognition, deep learning is being widely used in the computer vision community. Deep Metric Learning (DML), which integrates deep learning with conventional metric learning, has set new records in many fields, especially in classification tasks. In this paper, we propose a replicable DML method, called Include and Exclude (IE) loss, which forces the distance between a sample and its designated class center away from the mean distance of this sample to the other class centers by a large margin in the exponential feature projection space. With the supervision of IE loss, CNNs can be trained to enhance intra-class compactness and inter-class separability, leading to large improvements on several public datasets ranging from object recognition to face verification. We compare our algorithm with several typical DML methods on three kinds of networks with different capacities. Extensive experiments on three object recognition datasets and two face recognition datasets demonstrate that IE loss is always superior to other mainstream DML methods and approaches state-of-the-art results.
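The core idea of comparing a sample's distance to its own class center against its mean distance to the other centers, with a margin, can be sketched as a hinge loss. This is a hypothetical simplification, not the paper's IE loss: the abstract does not give the exact formula or define the "exponential feature projection space", so this sketch uses plain Euclidean distances, and the names `euclidean`, `center_margin_loss` and `margin` are assumptions.

```python
import math


def euclidean(a, b):
    """Euclidean distance between two equal-length vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def center_margin_loss(feature, label, centers, margin=1.0):
    """Hinge loss in the spirit of "include" and "exclude" terms.

    Assumption: the exponential projection used by IE loss is omitted;
    distances are computed directly in the raw feature space.
    """
    # "Include" term: distance to the sample's designated class center.
    d_own = euclidean(feature, centers[label])
    # "Exclude" term: mean distance to all other class centers.
    others = [euclidean(feature, c) for j, c in enumerate(centers) if j != label]
    d_other = sum(others) / len(others)
    # Zero once d_own is at least `margin` smaller than d_other; positive otherwise.
    return max(0.0, d_own - d_other + margin)


centers = [[0.0, 0.0], [3.0, 4.0], [-3.0, -4.0]]
print(center_margin_loss([0.0, 0.0], 0, centers))  # sample sits on its own center
print(center_margin_loss([3.0, 4.0], 0, centers))  # sample sits on another class's center
```

Minimizing such a term over a batch pulls samples toward their own center (intra-class compactness) while keeping them, on average, farther from other centers (inter-class separability), which is the behavior the abstract describes.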
