Zhangcheng Qiang

An Ecosystem for Ontology Interoperability

Jul 16, 2025Abstract:Ontology interoperability is one of the complicated issues that restricts the use of ontologies in knowledge graphs (KGs). Different ontologies with conflicting and overlapping concepts make it difficult to design, develop, and deploy an interoperable ontology for downstream tasks. We propose an ecosystem for ontology interoperability. The ecosystem employs three state-of-the-art semantic techniques in different phases of the ontology engineering life cycle: ontology design patterns (ODPs) in the design phase, ontology matching and versioning (OM\&OV) in the develop phase, and ontology-compliant knowledge graphs (OCKGs) in the deploy phase, to achieve better ontology interoperability in real-world applications. A case study in the building domain validates the usefulness of the proposed ecosystem.

How Does A Text Preprocessing Pipeline Affect Ontology Syntactic Matching?

Nov 06, 2024Abstract:The generic text preprocessing pipeline, comprising Tokenisation, Normalisation, Stop Words Removal, and Stemming/Lemmatisation, has been implemented in many ontology matching (OM) systems. However, the lack of standardisation in text preprocessing creates diversity in mapping results. In this paper, we investigate the effect of the text preprocessing pipeline on OM tasks at syntactic levels. Our experiments on 8 Ontology Alignment Evaluation Initiative (OAEI) track repositories with 49 distinct alignments indicate: (1) Tokenisation and Normalisation are currently more effective than Stop Words Removal and Stemming/Lemmatisation; and (2) The selection of Lemmatisation and Stemming is task-specific. We recommend standalone Lemmatisation or Stemming with post-hoc corrections. We find that (3) Porter Stemmer and Snowball Stemmer perform better than Lancaster Stemmer; and that (4) Part-of-Speech (POS) Tagging does not help Lemmatisation. To repair less effective Stop Words Removal and Stemming/Lemmatisation used in OM tasks, we propose a novel context-based pipeline repair approach that significantly improves matching correctness and overall matching performance. We also discuss the use of text preprocessing pipeline in the new era of large language models (LLMs).

OM4OV: Leveraging Ontology Matching for Ontology Versioning

Sep 30, 2024

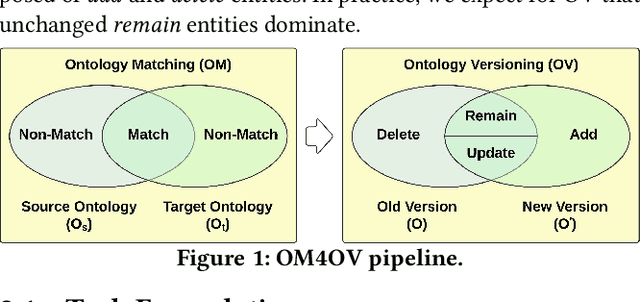

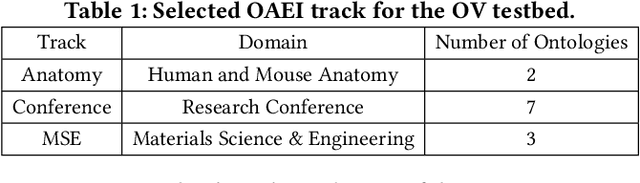

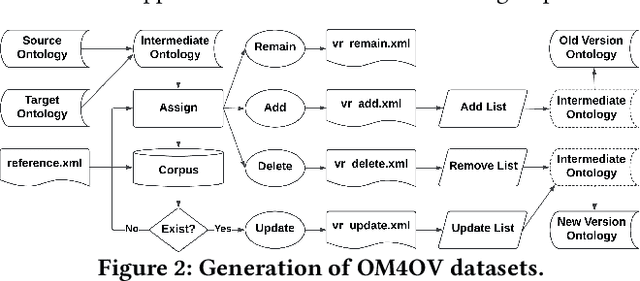

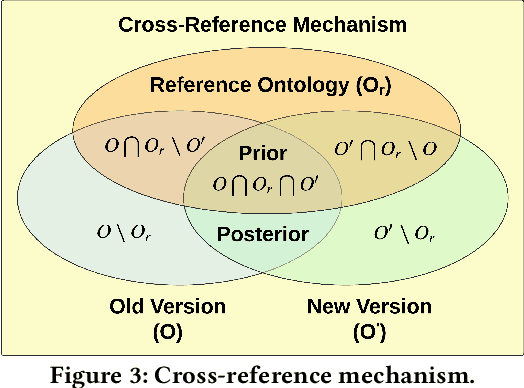

Abstract:Due to the dynamic nature of the semantic web, ontology version control is required to capture time-varying information, most importantly for widely-used ontologies. Despite the long-standing recognition of ontology versioning (OV) as a crucial component for efficient ontology management, the growing size of ontologies and accumulating errors caused by manual labour overwhelm current OV approaches. In this paper, we propose yet another approach to performing OV using existing ontology matching (OM) techniques and systems. We introduce a unified OM4OV pipeline. From an OM perspective, we reconstruct a new task formulation, performance measurement, and dataset construction for OV tasks. Reusing the prior alignment(s) from OM, we also propose a cross-reference mechanism to effectively reduce the matching candidature and improve overall OV performance. We experimentally validate the OM4OV pipeline and its cross-reference mechanism using three datasets from the Alignment Evaluation Initiative (OAEI) and exploit insights on OM used for OV tasks.

OAEI-LLM: A Benchmark Dataset for Understanding Large Language Model Hallucinations in Ontology Matching

Sep 21, 2024Abstract:Hallucinations of large language models (LLMs) commonly occur in domain-specific downstream tasks, with no exception in ontology matching (OM). The prevalence of using LLMs for OM raises the need for benchmarks to better understand LLM hallucinations. The OAEI-LLM dataset is an extended version of the Ontology Alignment Evaluation Initiative (OAEI) datasets that evaluate LLM-specific hallucinations in OM tasks. We outline the methodology used in dataset construction and schema extension, and provide examples of potential use cases.

Agent-OM: Leveraging Large Language Models for Ontology Matching

Dec 01, 2023Abstract:Ontology matching (OM) enables semantic interoperability between different ontologies and resolves their conceptual heterogeneity by aligning related entities. OM systems currently have two prevailing design paradigms: conventional knowledge-based expert systems and newer machine learning-based predictive systems. While large language models (LLMs) and LLM-based agents have become revolutionary in data engineering and have been applied creatively in various domains, their potential for OM remains underexplored. This study introduces a novel agent-powered LLM-based design paradigm for OM systems. With thoughtful consideration of several specific challenges to leverage LLMs for OM, we propose a generic framework, namely Agent-OM, consisting of two Siamese agents for retrieval and matching, with a set of simple prompt-based OM tools. Our framework is implemented in a proof-of-concept system. Evaluations of three Ontology Alignment Evaluation Initiative (OAEI) tracks over state-of-the-art OM systems show that our system can achieve very close results to the best long-standing performance on simple OM tasks and significantly improve the performance on complex and few-shot OM tasks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge