Yuya Tatsuta

Robotic Waste Sorter with Agile Manipulation and Quickly Trainable Detector

Apr 02, 2021

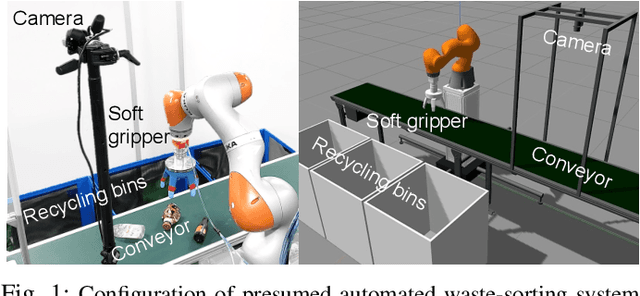

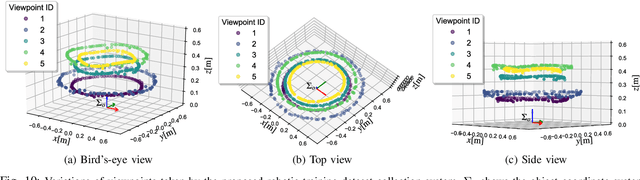

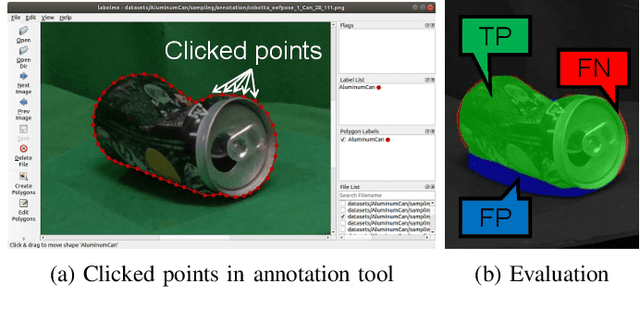

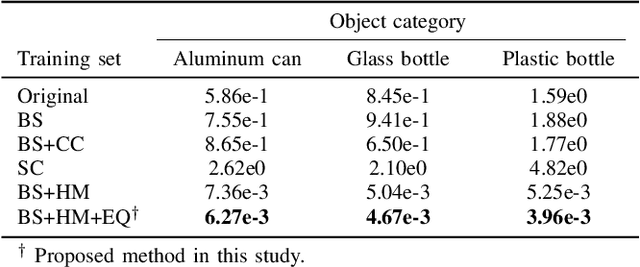

Abstract: Owing to human labor shortages, labor-intensive manual waste sorting needs to be automated. The goal of automating waste sorting is for robots to take over the human role of robustly detecting and agilely manipulating waste items. To achieve this, we propose three methods. First, we propose a combined manipulation method that uses both graspless push-and-drop manipulation and pick-and-release manipulation. Second, we propose a robotic system that automatically collects object images to quickly train a deep neural network model. Third, we propose a method to mitigate differences in the appearance of target objects between two scenes: the scene used for dataset collection and the scene used for waste sorting in a recycling factory. If such differences exist, they could degrade the performance of a trained waste detector. We address differences in illumination and background by applying object scaling, histogram matching with histogram equalization, and background synthesis to the source target-object images. Through experiments in an indoor experimental waste-sorting workplace, we confirmed that the proposed methods enable quick collection of training image sets for three classes of waste items (aluminum cans, glass bottles, and plastic bottles) and detect these items with higher performance than methods that do not consider the differences. We also confirmed that the proposed method enables the robot to manipulate these items quickly.
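The abstract names histogram equalization, histogram matching, and background synthesis as the appearance-adaptation steps but gives no implementation details. As a rough illustration only, the following is a minimal pure-Python sketch on 8-bit grayscale images stored as flat lists of ints. The function names (`equalize`, `match_histogram`, `synthesize`, `adapt_object_image`), the assumed order of operations (equalize the object image, match it to a hypothetical reference image from the factory scene, then paste it onto a background through a binary mask), and the omission of object scaling and color channels are all assumptions. This is not the authors' implementation.

```python
from typing import List

LEVELS = 256  # assumption: 8-bit grayscale images stored as flat lists of ints


def cdf(pixels: List[int]) -> List[float]:
    """Normalized cumulative histogram of an 8-bit image."""
    hist = [0] * LEVELS
    for p in pixels:
        hist[p] += 1
    total = len(pixels)
    out, running = [], 0
    for count in hist:
        running += count
        out.append(running / total)
    return out


def equalize(pixels: List[int]) -> List[int]:
    """Simple histogram equalization: map each level through the scaled CDF."""
    c = cdf(pixels)
    return [round(c[p] * (LEVELS - 1)) for p in pixels]


def match_histogram(source: List[int], reference: List[int]) -> List[int]:
    """Remap source intensities so their CDF approximates the reference CDF."""
    src_cdf, ref_cdf = cdf(source), cdf(reference)
    lut = []
    j = 0
    for level in range(LEVELS):
        # Smallest reference level whose CDF reaches the source CDF at this level.
        # src_cdf is non-decreasing, so j never needs to move backwards.
        while j < LEVELS - 1 and ref_cdf[j] < src_cdf[level]:
            j += 1
        lut.append(j)
    return [lut[p] for p in source]


def synthesize(obj: List[int], mask: List[int], background: List[int]) -> List[int]:
    """Paste object pixels (mask == 1) onto a same-size background image."""
    if not (len(obj) == len(mask) == len(background)):
        raise ValueError("obj, mask and background must have the same length")
    return [o if m else b for o, m, b in zip(obj, mask, background)]


def adapt_object_image(obj: List[int], mask: List[int],
                       factory_reference: List[int],
                       background: List[int]) -> List[int]:
    """Hypothetical pipeline: equalize, match to the factory scene, composite."""
    equalized = equalize(obj)
    matched = match_histogram(equalized, factory_reference)
    return synthesize(matched, mask, background)
```

For example, `synthesize([1, 2, 3], [1, 0, 1], [9, 9, 9])` keeps the masked object pixels and fills the rest from the background, giving `[1, 9, 3]`. Matching runs after equalization here only so that the object histogram is normalized before it is pulled toward the factory-scene histogram; the source does not say whether the authors combine the two steps this way.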
