Yuxiu Hua

Deep Reinforcement Learning with Discrete Normalized Advantage Functions for Resource Management in Network Slicing

Jun 10, 2019

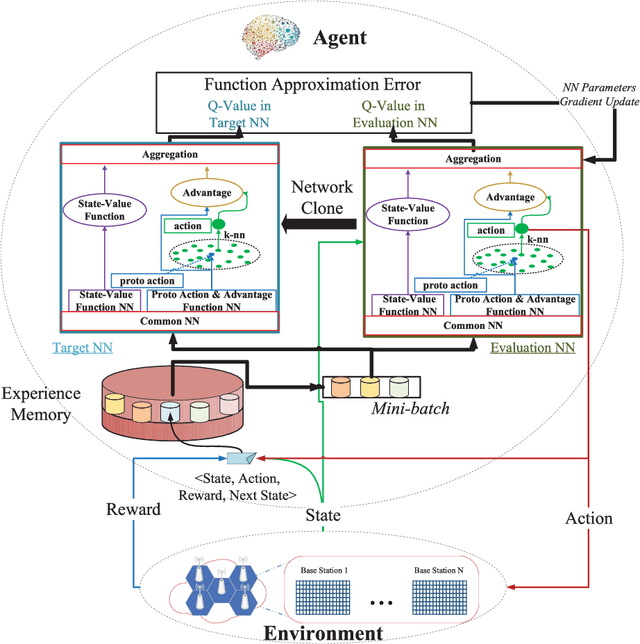

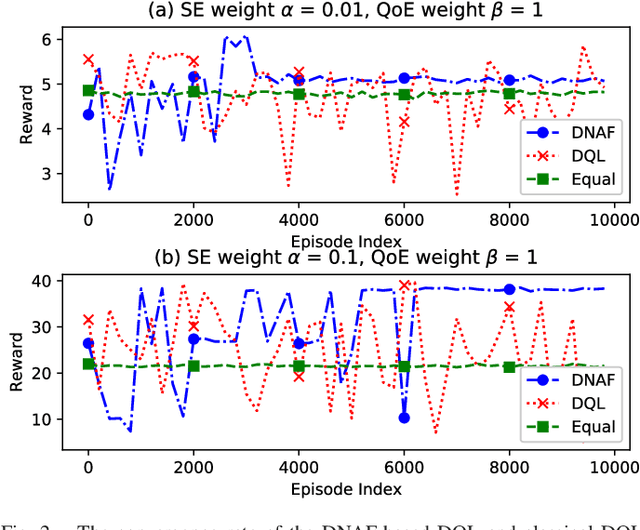

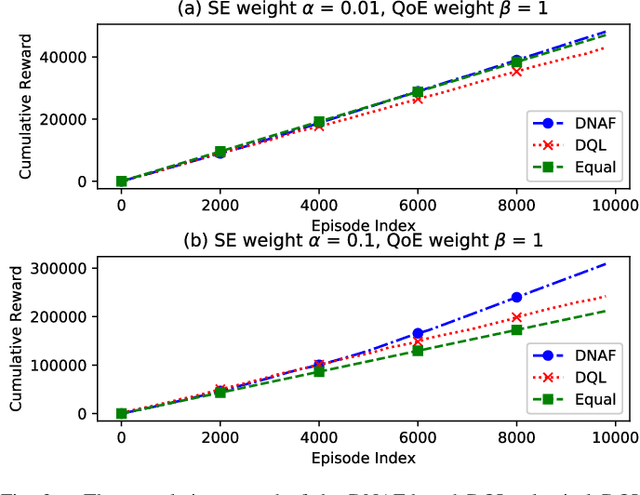

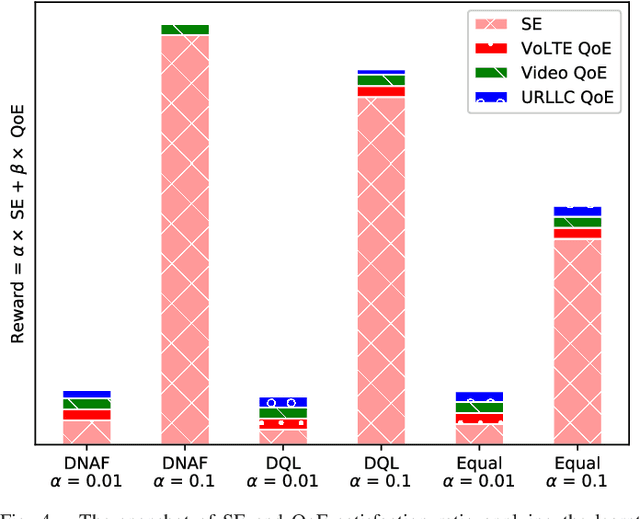

Abstract:Network slicing promises to provision diversified services with distinct requirements in one infrastructure. Deep reinforcement learning (e.g., deep $\mathcal{Q}$-learning, DQL) is assumed to be an appropriate algorithm to solve the demand-aware inter-slice resource management issue in network slicing by regarding the varying demands and the allocated bandwidth as the environment state and the action, respectively. However, allocating bandwidth in a finer resolution usually implies larger action space, and unfortunately DQL fails to quickly converge in this case. In this paper, we introduce discrete normalized advantage functions (DNAF) into DQL, by separating the $\mathcal{Q}$-value function as a state-value function term and an advantage term and exploiting a deterministic policy gradient descent (DPGD) algorithm to avoid the unnecessary calculation of $\mathcal{Q}$-value for every state-action pair. Furthermore, as DPGD only works in continuous action space, we embed a k-nearest neighbor algorithm into DQL to quickly find a valid action in the discrete space nearest to the DPGD output. Finally, we verify the faster convergence of the DNAF-based DQL through extensive simulations.

GAN-based Deep Distributional Reinforcement Learning for Resource Management in Network Slicing

May 10, 2019

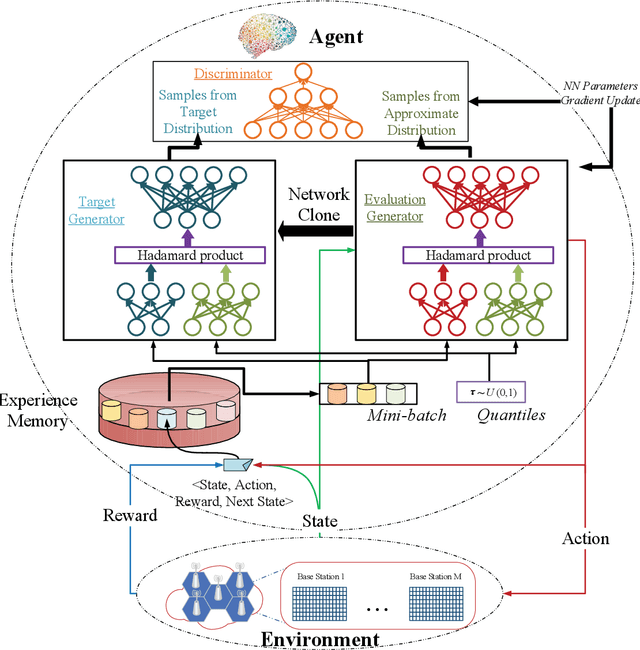

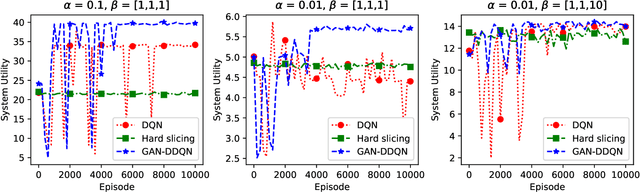

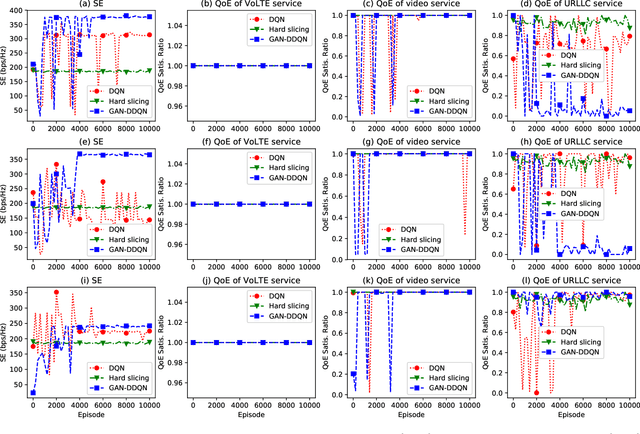

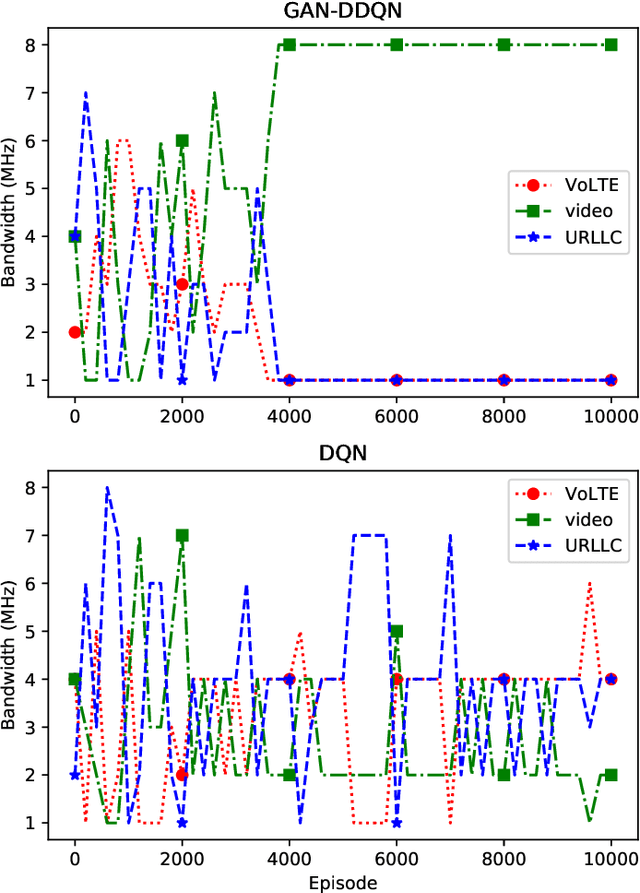

Abstract:Network slicing is a key technology in 5G communications system, which aims to dynamically and efficiently allocate resources for diversified services with distinct requirements over a common underlying physical infrastructure. Therein, demand-aware allocation is of significant importance to network slicing. In this paper, we consider a scenario that contains several slices in one base station on sharing the same bandwidth. Deep reinforcement learning (DRL) is leveraged to solve this problem by regarding the varying demands and the allocated bandwidth as the environment \emph{state} and \emph{action}, respectively. In order to obtain better quality of experience (QoE) satisfaction ratio and spectrum efficiency (SE), we propose generative adversarial network (GAN) based deep distributional Q network (GAN-DDQN) to learn the distribution of state-action values. Furthermore, we estimate the distributions by approximating a full quantile function, which can make the training error more controllable. In order to protect the stability of GAN-DDQN's training process from the widely-spanning utility values, we also put forward a reward-clipping mechanism. Finally, we verify the performance of the proposed GAN-DDQN algorithm through extensive simulations.

Deep Learning with Long Short-Term Memory for Time Series Prediction

Oct 24, 2018

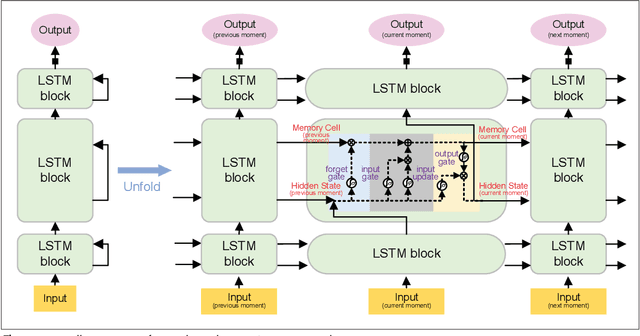

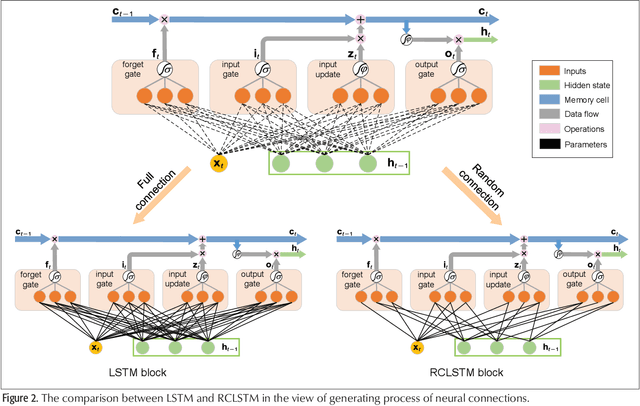

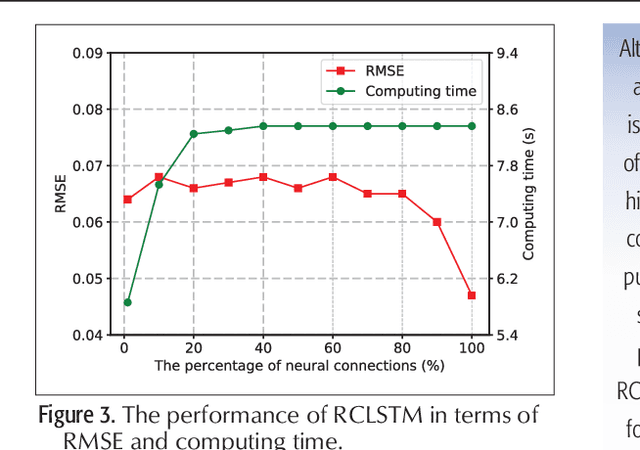

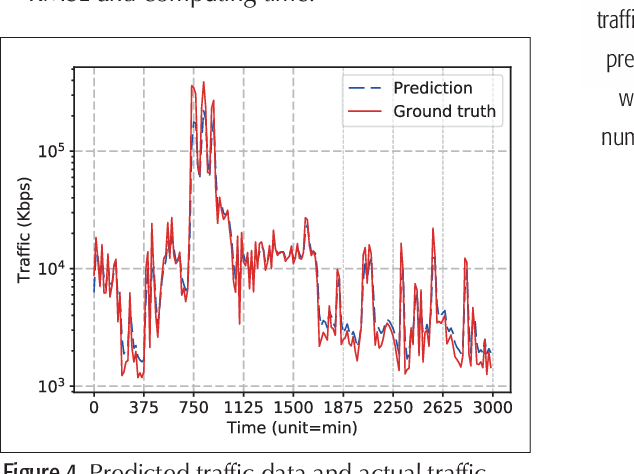

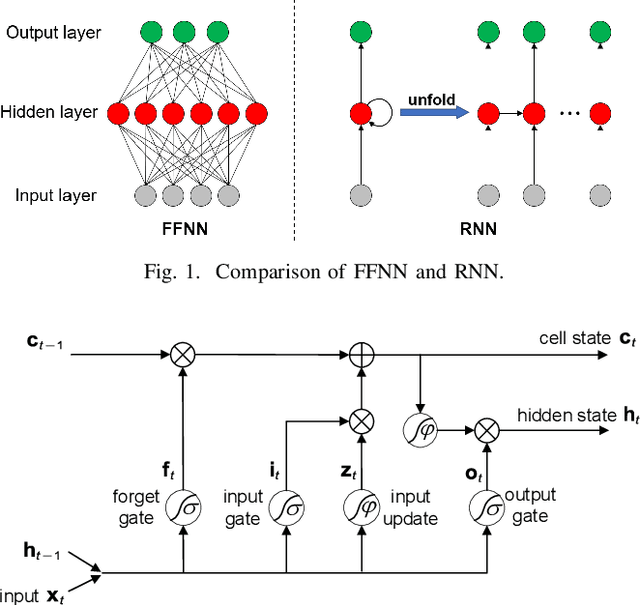

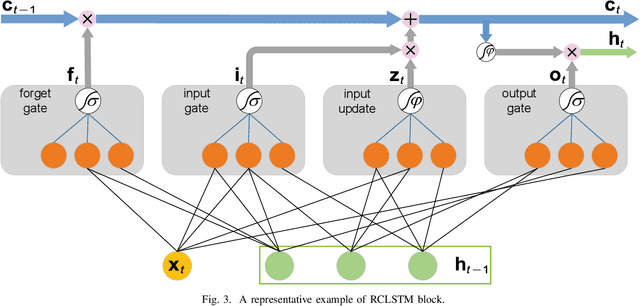

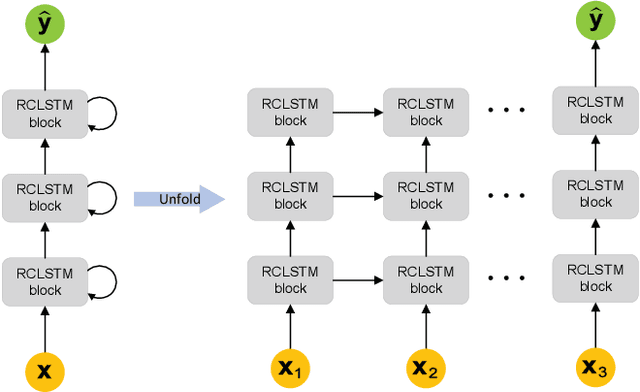

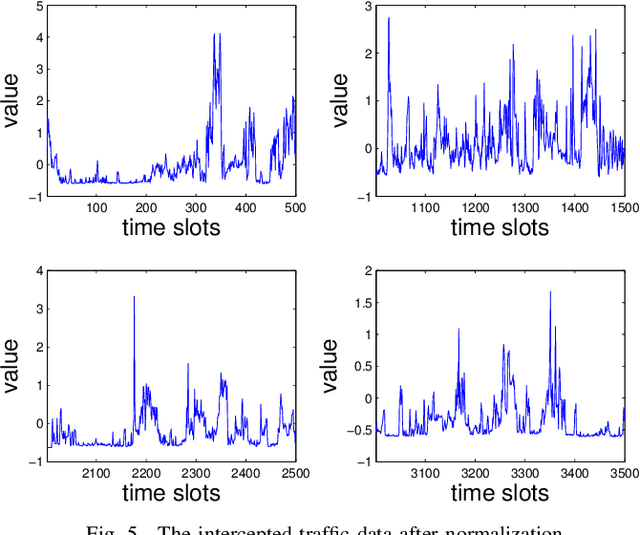

Abstract:Time series prediction can be generalized as a process that extracts useful information from historical records and then determines future values. Learning long-range dependencies that are embedded in time series is often an obstacle for most algorithms, whereas Long Short-Term Memory (LSTM) solutions, as a specific kind of scheme in deep learning, promise to effectively overcome the problem. In this article, we first give a brief introduction to the structure and forward propagation mechanism of the LSTM model. Then, aiming at reducing the considerable computing cost of LSTM, we put forward the Random Connectivity LSTM (RCLSTM) model and test it by predicting traffic and user mobility in telecommunication networks. Compared to LSTM, RCLSTM is formed via stochastic connectivity between neurons, which achieves a significant breakthrough in the architecture formation of neural networks. In this way, the RCLSTM model exhibits a certain level of sparsity, which leads to an appealing decrease in the computational complexity and makes the RCLSTM model become more applicable in latency-stringent application scenarios. In the field of telecommunication networks, the prediction of traffic series and mobility traces could directly benefit from this improvement as we further demonstrate that the prediction accuracy of RCLSTM is comparable to that of the conventional LSTM no matter how we change the number of training samples or the length of input sequences.

Traffic Prediction Based on Random Connectivity in Deep Learning with Long Short-Term Memory

Apr 03, 2018

Abstract:Traffic prediction plays an important role in evaluating the performance of telecommunication networks and attracts intense research interests. A significant number of algorithms and models have been put forward to analyse traffic data and make prediction. In the recent big data era, deep learning has been exploited to mine the profound information hidden in the data. In particular, Long Short-Term Memory (LSTM), one kind of Recurrent Neural Network (RNN) schemes, has attracted a lot of attentions due to its capability of processing the long-range dependency embedded in the sequential traffic data. However, LSTM has considerable computational cost, which can not be tolerated in tasks with stringent latency requirement. In this paper, we propose a deep learning model based on LSTM, called Random Connectivity LSTM (RCLSTM). Compared to the conventional LSTM, RCLSTM makes a notable breakthrough in the formation of neural network, which is that the neurons are connected in a stochastic manner rather than full connected. So, the RCLSTM, with certain intrinsic sparsity, have many neural connections absent (distinguished from the full connectivity) and which leads to the reduction of the parameters to be trained and the computational cost. We apply the RCLSTM to predict traffic and validate that the RCLSTM with even 35% neural connectivity still shows a satisfactory performance. When we gradually add training samples, the performance of RCLSTM becomes increasingly closer to the baseline LSTM. Moreover, for the input traffic sequences of enough length, the RCLSTM exhibits even superior prediction accuracy than the baseline LSTM.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge