GAN-based Deep Distributional Reinforcement Learning for Resource Management in Network Slicing


May 10, 2019

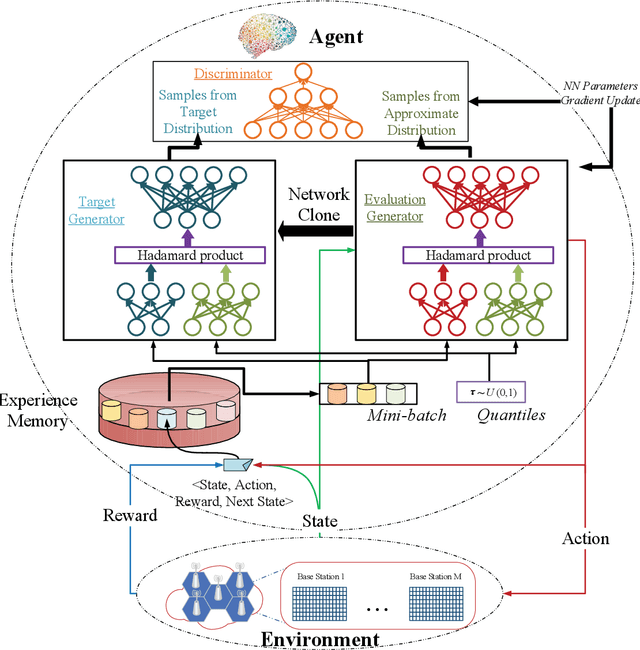

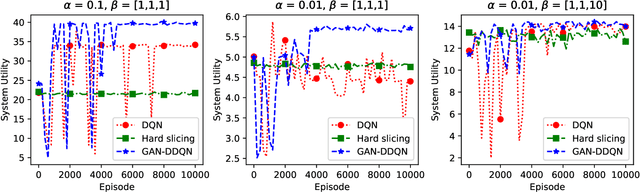

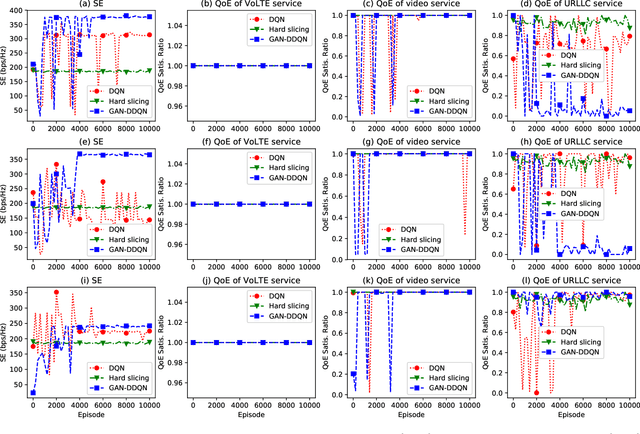

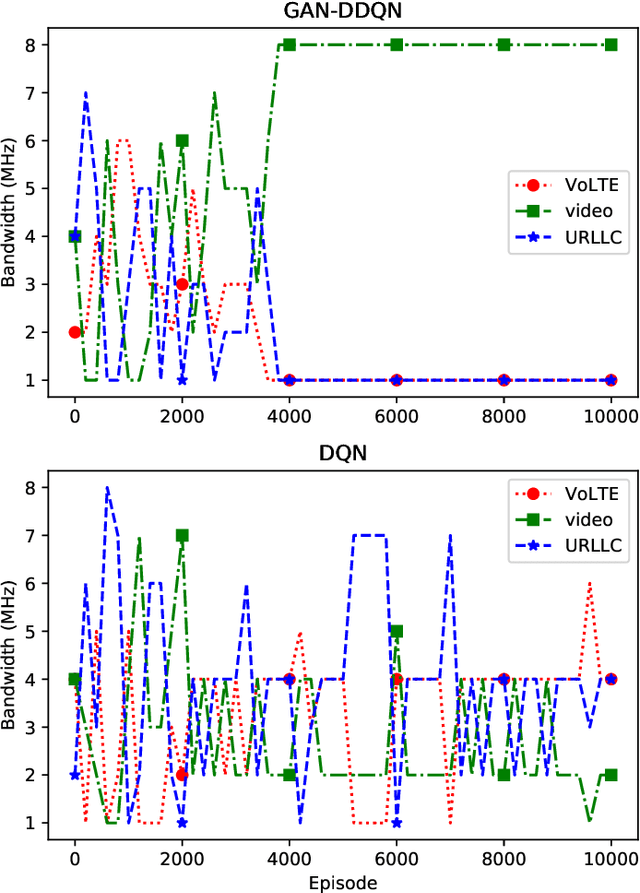

Network slicing is a key technology in 5G communication systems. It aims to dynamically and efficiently allocate resources to diversified services with distinct requirements over a common underlying physical infrastructure. In this context, demand-aware allocation is of significant importance to network slicing. In this paper, we consider a scenario in which several slices in one base station share the same bandwidth. Deep reinforcement learning (DRL) is leveraged to solve this problem by regarding the varying demands and the allocated bandwidth as the environment *state* and *action*, respectively. To obtain a better quality of experience (QoE) satisfaction ratio and spectrum efficiency (SE), we propose a generative adversarial network (GAN) based deep distributional Q network (GAN-DDQN) to learn the distribution of state-action values. Furthermore, we estimate the distributions by approximating a full quantile function, which makes the training error more controllable. To protect the stability of GAN-DDQN's training process from widely spanning utility values, we also put forward a reward-clipping mechanism. Finally, we verify the performance of the proposed GAN-DDQN algorithm through extensive simulations.
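As a rough illustration of the formulation described in the abstract, the following Python sketch shows one way the pieces could fit together: enumerating bandwidth allocations as discrete actions, clipping a wide-ranging utility into a small set of reward values, and picking an action from per-action quantile samples of the return distribution. All function names, the three-level clipping scheme, and the thresholds are hypothetical and chosen for illustration only; the abstract does not specify them, and the GAN that would generate the quantile samples is not modeled here.

```python
from itertools import product


def enumerate_actions(num_slices, total_units):
    """List every split of `total_units` bandwidth units across the slices.

    Assumption: bandwidth is discretized into equal units and fully allocated.
    """
    return [
        alloc
        for alloc in product(range(total_units + 1), repeat=num_slices)
        if sum(alloc) == total_units
    ]


def clip_reward(utility, low, high):
    """Map a widely spanning utility onto three fixed reward levels.

    The thresholds `low` and `high` and the levels -1/0/+1 are hypothetical.
    """
    if utility >= high:
        return 1.0
    if utility <= low:
        return -1.0
    return 0.0


def q_from_quantiles(quantile_values):
    """Estimate the expected return as the mean of equally weighted quantile samples."""
    return sum(quantile_values) / len(quantile_values)


def greedy_action(quantiles_per_action):
    """Return the index of the action whose quantile samples have the largest mean."""
    return max(
        range(len(quantiles_per_action)),
        key=lambda i: q_from_quantiles(quantiles_per_action[i]),
    )
```

In this sketch, the state would be the vector of per-slice demands, and a trained generator would supply `quantiles_per_action` for that state; averaging the quantile samples recovers a scalar state-action value for greedy selection, as is common in quantile-based distributional methods.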
