Yuriy Biktairov

Kraken: enabling joint trajectory prediction by utilizing Mode Transformer and Greedy Mode Processing

Dec 08, 2023Abstract:Accurate and reliable motion prediction is essential for safe urban autonomy. The most prominent motion prediction approaches are based on modeling the distribution of possible future trajectories of each actor in autonomous system's vicinity. These "independent" marginal predictions might be accurate enough to properly describe casual driving situations where the prediction target is not likely to interact with other actors. They are, however, inadequate for modeling interactive situations where the actors' future trajectories are likely to intersect. To mitigate this issue we propose Kraken -- a real-time trajectory prediction model capable of approximating pairwise interactions between the actors as well as producing accurate marginal predictions. Kraken relies on a simple Greedy Mode Processing technique allowing it to convert a factorized prediction for a pair of agents into a physically-plausible joint prediction. It also utilizes the Mode Transformer module to increase the diversity of predicted trajectories and make the joint prediction more informative. We evaluate Kraken on Waymo Motion Prediction challenge where it held the first place in the Interaction leaderboard and the second place in the Motion leaderboard in October 2021.

Match and Locate: low-frequency monocular odometry based on deep feature matching

Nov 16, 2023

Abstract:Accurate and robust pose estimation plays a crucial role in many robotic systems. Popular algorithms for pose estimation typically rely on high-fidelity and high-frequency signals from various sensors. Inclusion of these sensors makes the system less affordable and much more complicated. In this work we introduce a novel approach for the robotic odometry which only requires a single camera and, importantly, can produce reliable estimates given even extremely low-frequency signal of around one frame per second. The approach is based on matching image features between the consecutive frames of the video stream using deep feature matching models. The resulting coarse estimate is then adjusted by a convolutional neural network, which is also responsible for estimating the scale of the transition, otherwise irretrievable using only the feature matching information. We evaluate the performance of the approach in the AISG-SLA Visual Localisation Challenge and find that while being computationally efficient and easy to implement our method shows competitive results with only around $3^{\circ}$ of orientation estimation error and $2m$ of translation estimation error taking the third place in the challenge.

PRANK: motion Prediction based on RANKing

Oct 22, 2020

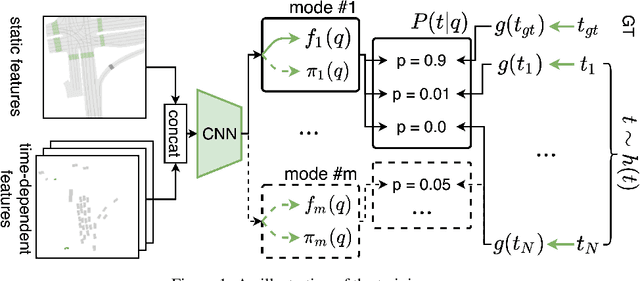

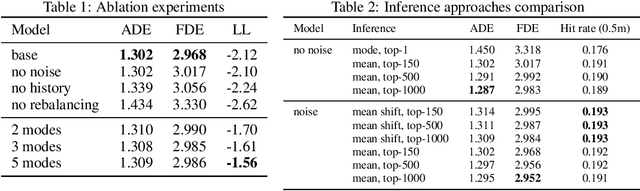

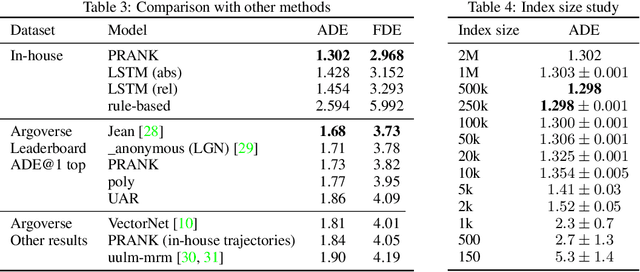

Abstract:Predicting the motion of agents such as pedestrians or human-driven vehicles is one of the most critical problems in the autonomous driving domain. The overall safety of driving and the comfort of a passenger directly depend on its successful solution. The motion prediction problem also remains one of the most challenging problems in autonomous driving engineering, mainly due to high variance of the possible agent's future behavior given a situation. The two phenomena responsible for the said variance are the multimodality caused by the uncertainty of the agent's intent (e.g., turn right or move forward) and uncertainty in the realization of a given intent (e.g., which lane to turn into). To be useful within a real-time autonomous driving pipeline, a motion prediction system must provide efficient ways to describe and quantify this uncertainty, such as computing posterior modes and their probabilities or estimating density at the point corresponding to a given trajectory. It also should not put substantial density on physically impossible trajectories, as they can confuse the system processing the predictions. In this paper, we introduce the PRANK method, which satisfies these requirements. PRANK takes rasterized bird-eye images of agent's surroundings as an input and extracts features of the scene with a convolutional neural network. It then produces the conditional distribution of agent's trajectories plausible in the given scene. The key contribution of PRANK is a way to represent that distribution using nearest-neighbor methods in latent trajectory space, which allows for efficient inference in real time. We evaluate PRANK on the in-house and Argoverse datasets, where it shows competitive results.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge