Yueyue Dai

Senior Member, IEEE

Energy Efficient Computation Offloading in Aerial Edge Networks With Multi-Agent Cooperation

Feb 14, 2023

Abstract: With its high flexibility in supporting resource-intensive and time-sensitive applications, unmanned aerial vehicle (UAV)-assisted mobile edge computing (MEC) has been proposed as an innovative paradigm to support mobile users (MUs). As a promising technology, digital twin (DT) is capable of mapping physical entities to virtual models in a timely manner and reflecting the MEC network state in real time. In this paper, we first propose an MEC network with multiple movable UAVs and one DT-empowered ground base station to enhance the MEC service for MUs. Considering the limited energy resources of both MUs and UAVs, we formulate an online resource scheduling problem to minimize their weighted energy consumption. To tackle the difficulty of the combinatorial problem, we formulate it as a Markov decision process (MDP) with multiple types of agents. Since the proposed MDP has huge state and action spaces, we propose a deep reinforcement learning approach based on multi-agent proximal policy optimization (MAPPO) with a Beta distribution and an attention mechanism to pursue the optimal computation offloading policy. Numerical results show that our proposed scheme efficiently reduces energy consumption and outperforms the benchmarks in performance, convergence speed, and resource utilization.

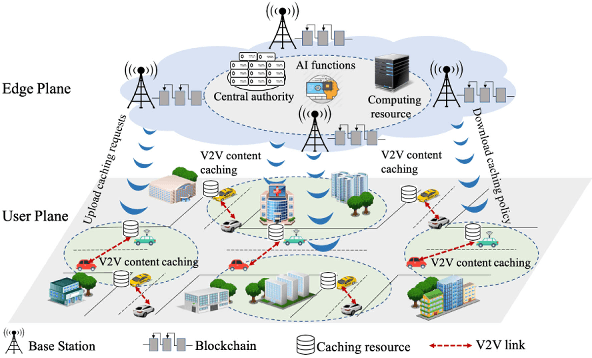

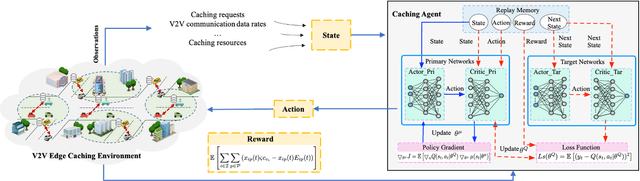

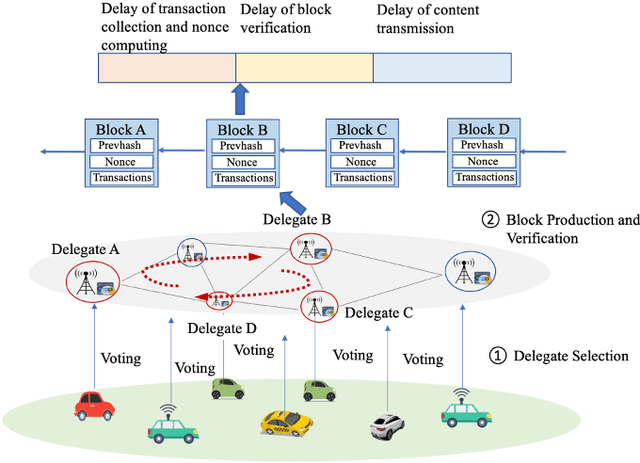

Deep Reinforcement Learning and Permissioned Blockchain for Content Caching in Vehicular Edge Computing and Networks

Nov 19, 2020

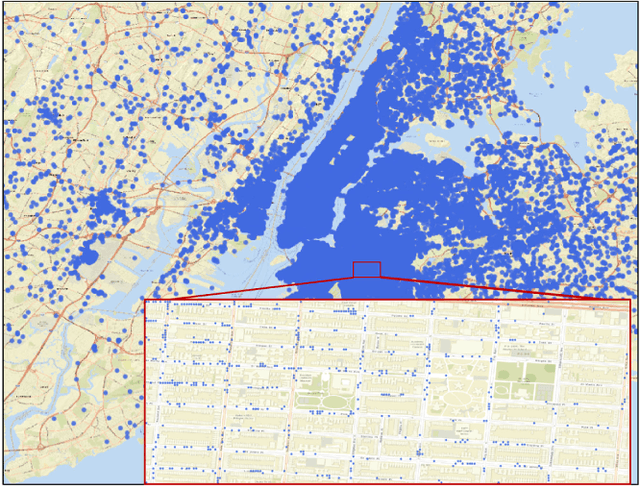

Abstract: Vehicular Edge Computing (VEC) is a promising paradigm that enables huge amounts of data and multimedia content to be cached in proximity to vehicles. However, the high mobility of vehicles and dynamic wireless channel conditions make it challenging to design an optimal content caching policy. Further, because their contents include much sensitive personal information, vehicles may be unwilling to cache them with an untrusted caching provider. Deep Reinforcement Learning (DRL) is an emerging technique for solving problems with high-dimensional and time-varying features. Permissioned blockchain is able to establish a secure and decentralized peer-to-peer transaction environment. In this paper, we integrate DRL and permissioned blockchain into vehicular networks for intelligent and secure content caching. We first propose a blockchain-empowered distributed content caching framework where vehicles perform content caching and base stations maintain the permissioned blockchain. Then, we exploit an advanced DRL approach to design an optimal content caching scheme that takes mobility into account. Finally, we propose a new block verifier selection method, Proof-of-Utility (PoU), to accelerate the block verification process. Security analysis shows that our proposed blockchain-empowered content caching can achieve security and privacy protection. Numerical results based on a real dataset from Uber indicate that the DRL-inspired content caching scheme significantly outperforms two benchmark policies.

Edge Intelligence for Energy-efficient Computation Offloading and Resource Allocation in 5G Beyond

Nov 18, 2020

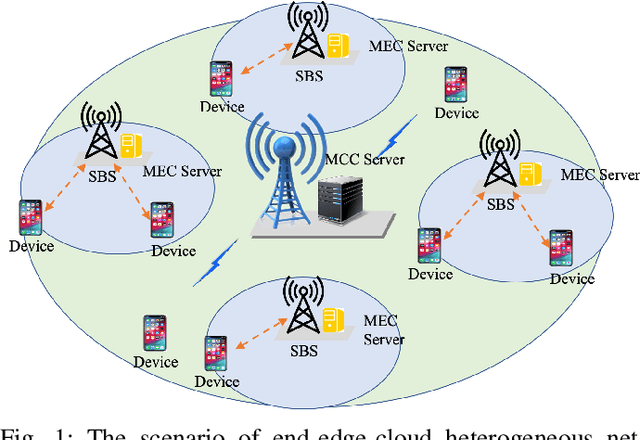

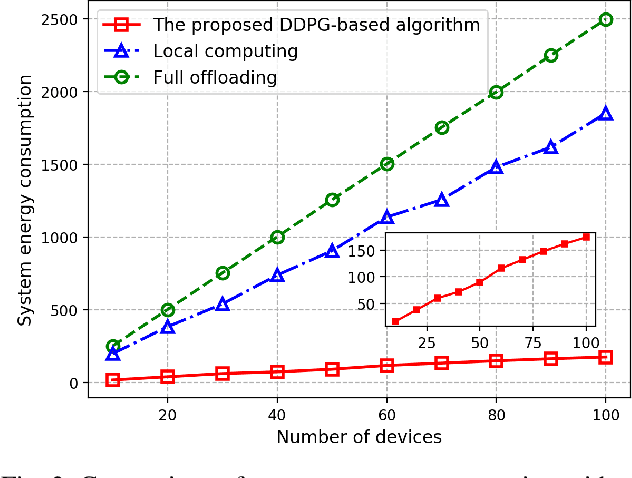

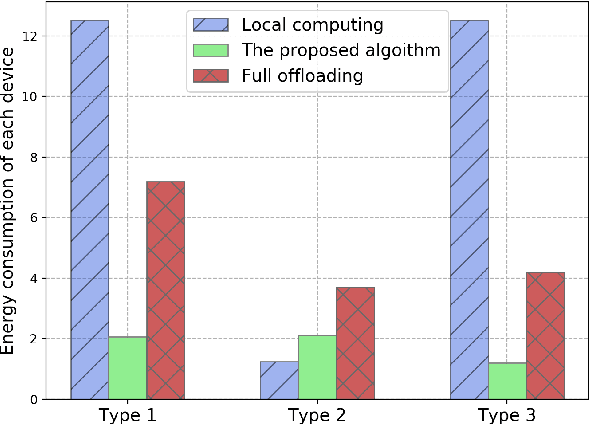

Abstract: 5G beyond is an end-edge-cloud orchestrated network that can exploit the heterogeneous capabilities of end devices, edge servers, and the cloud, and thus has the potential to enable computation-intensive and delay-sensitive applications via computation offloading. However, in multi-user wireless networks, diverse application requirements and the possibility of various radio access modes for communication among devices make it challenging to design an optimal computation offloading scheme. In addition, obtaining complete network information, including variables such as wireless channel state, available bandwidth, and computation resources, is a major issue. Deep Reinforcement Learning (DRL) is an emerging technique for addressing such an issue with limited and less accurate network information. In this paper, we utilize DRL to design an optimal computation offloading and resource allocation strategy for minimizing system energy consumption. We first present a multi-user end-edge-cloud orchestrated network where all devices and base stations have computation capabilities. Then, we formulate the joint computation offloading and resource allocation problem as a Markov Decision Process (MDP) and propose a new DRL algorithm to minimize system energy consumption. Numerical results based on a real-world dataset demonstrate that the proposed DRL-based algorithm significantly outperforms the benchmark policies in terms of system energy consumption. Extensive simulations show that the learning rate, discount factor, and number of devices have considerable influence on the performance of the proposed algorithm.

Deep Reinforcement Learning for Stochastic Computation Offloading in Digital Twin Networks

Nov 18, 2020

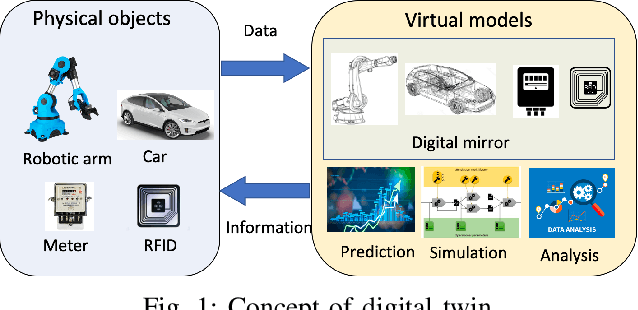

Abstract: The rapid development of the Industrial Internet of Things (IIoT) requires industrial production to move toward digitalization to improve network efficiency. Digital Twin is a promising technology to empower the digital transformation of IIoT by creating virtual models of physical objects. However, providing network efficiency in IIoT is very challenging due to resource-constrained devices, stochastic tasks, and resource heterogeneity. Distributed resources in IIoT networks can be efficiently exploited through computation offloading to reduce energy consumption while enhancing data processing efficiency. In this paper, we first propose a new paradigm, Digital Twin Networks (DTN), to build the network topology and the stochastic task arrival model in IIoT systems. Then, we formulate the stochastic computation offloading and resource allocation problem to minimize the long-term energy efficiency. As the formulated problem is a stochastic programming problem, we leverage the Lyapunov optimization technique to transform the original problem into a deterministic per-time-slot problem. Finally, we present an Asynchronous Actor-Critic (AAC) algorithm to find the optimal stochastic computation offloading policy. Illustrative results demonstrate that our proposed scheme significantly outperforms the benchmarks.
