Yuche Li

Towards Efficient Single Image Dehazing and Desnowing

Apr 19, 2022

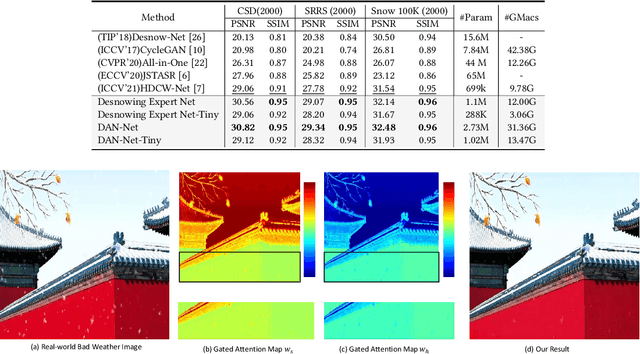

Abstract: Removing adverse weather conditions like rain, fog, and snow from images is a challenging problem. Although current recovery algorithms targeting a specific condition have made impressive progress, they are not flexible enough to deal with various degradation types. We propose an efficient and compact image restoration network named DAN-Net (Degradation-Adaptive Neural Network) to address this problem, which consists of multiple compact expert networks and one adaptive gated neural network. A single expert network efficiently addresses a specific degradation in harsh winter scenes, relying on a compact architecture and three novel components. Based on the Mixture of Experts strategy, DAN-Net captures degradation information from each input image to adaptively modulate the outputs of task-specific expert networks and remove various adverse winter weather conditions. Specifically, it adopts a lightweight Adaptive Gated Neural Network to estimate gated attention maps of the input image, while different task-specific experts with the same topology are jointly dispatched to process the degraded image. Such a novel image restoration pipeline handles different types of severe weather scenes effectively and efficiently. It also enjoys the benefit of coordinate boosting, in which the whole network outperforms each expert trained without coordination. Extensive experiments demonstrate that the proposed method outperforms state-of-the-art single-task methods on image quality and has better inference efficiency. Furthermore, we have collected the first real-world winter scenes dataset to evaluate winter image restoration methods, which contains various hazy and snowy images captured in winter. Both the dataset and source code will be publicly available.
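The gating idea in the abstract above can be illustrated with a minimal sketch. It is not the authors' implementation: the softmax normalization, the function names `softmax` and `blend_experts`, and the toy values are all assumptions made for illustration. The abstract only states that gated attention maps modulate the outputs of task-specific experts.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def blend_experts(expert_outputs, gate_logits):
    """Blend per-pixel expert outputs using per-pixel gate weights.

    expert_outputs: list of K images, each an H x W nested list of floats.
    gate_logits:    list of K maps of the same shape, standing in for the
                    output of a gating network (hypothetical; softmax
                    normalization is an assumption, not from the source).
    """
    height = len(expert_outputs[0])
    width = len(expert_outputs[0][0])
    blended = [[0.0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            # Normalize the K gate scores at this pixel so they sum to 1.
            weights = softmax([g[y][x] for g in gate_logits])
            blended[y][x] = sum(
                w * e[y][x] for w, e in zip(weights, expert_outputs)
            )
    return blended

# Toy 1 x 2 "image" with two hypothetical experts (dehazing, desnowing).
dehaze_out = [[0.2, 0.8]]
desnow_out = [[0.6, 0.4]]
gates = [[[0.0, 5.0]], [[0.0, -5.0]]]
result = blend_experts([dehaze_out, desnow_out], gates)
```

In this toy case the first pixel has equal gate scores, so the two expert outputs are averaged, while the second pixel is dominated by the first expert because its gate score is much larger.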

Underwater Light Field Retention: Neural Rendering for Underwater Imaging

Mar 21, 2022

Abstract: Underwater image rendering aims to generate a true-to-life underwater image from a given clean one, which could be applied to various practical applications such as underwater image enhancement, camera filters, and virtual gaming. We explore two less-touched but challenging problems in underwater image rendering, namely, i) how to render diverse underwater scenes with a single neural network, and ii) how to adaptively learn the underwater light fields from natural exemplars, i.e., realistic underwater images. To this end, we propose a neural rendering method for underwater imaging, dubbed UWNR (Underwater Neural Rendering). Specifically, UWNR is a data-driven neural network that implicitly learns the natural degradation model from authentic underwater images, avoiding the erroneous biases introduced by hand-crafted imaging models. Compared with existing underwater image generation methods, UWNR utilizes the natural light field to simulate the main characteristics of the underwater scene. Thus, it is able to synthesize a wide variety of underwater images from one clean image with various realistic underwater images. Extensive experiments demonstrate that our approach achieves better visual effects and quantitative metrics than previous methods. Moreover, we adopt UWNR to build an open Large Neural Rendering Underwater Dataset containing various types of water quality, dubbed LNRUD.
