Yu-Siang Huang

Pop Music Transformer: Generating Music with Rhythm and Harmony

Feb 01, 2020

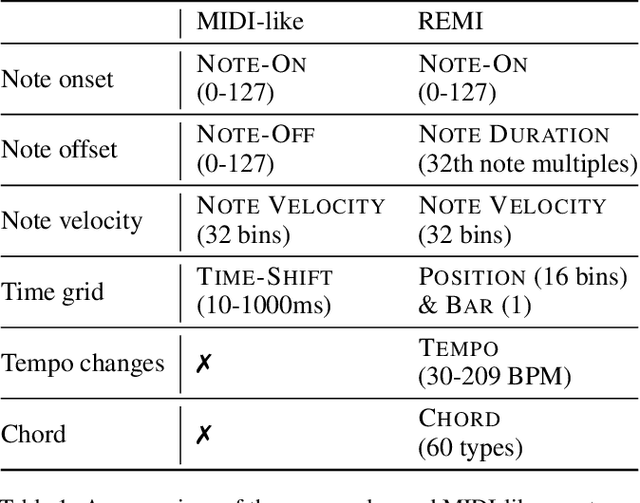

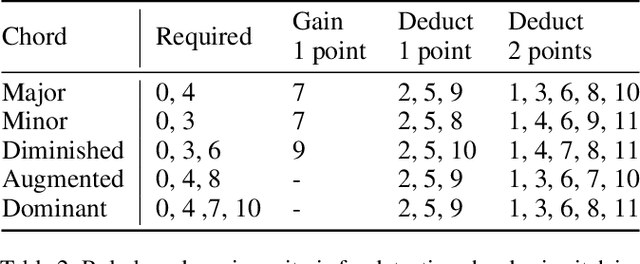

Abstract:The task automatic music composition entails generative modeling of music in symbolic formats such as the musical scores. By serializing a score as a sequence of MIDI-like events, recent work has demonstrated that state-of-the-art sequence models with self-attention work nicely for this task, especially for composing music with long-range coherence. In this paper, we show that sequence models can do even better when we improve the way a musical score is converted into events. The new event set, dubbed "REMI" (REvamped MIDI-derived events), provides sequence models a metric context for modeling the rhythmic patterns of music, while allowing for local tempo changes. Moreover, it explicitly sets up a harmonic structure and makes chord progression controllable. It also facilitates coordinating different tracks of a musical piece, such as the piano, bass and drums. With this new approach, we build a Pop Music Transformer that composes Pop piano music with a more plausible rhythmic structure than prior arts do. The code, data and pre-trained model are publicly available.\footnote{\url{https://github.com/YatingMusic/remi}}

Pop Music Highlighter: Marking the Emotion Keypoints

Sep 25, 2018

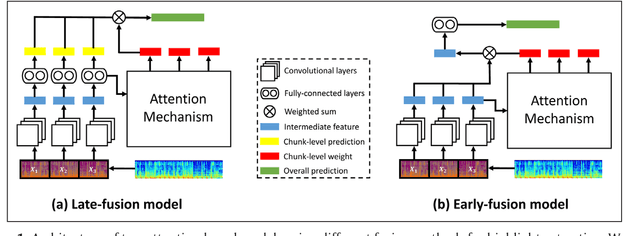

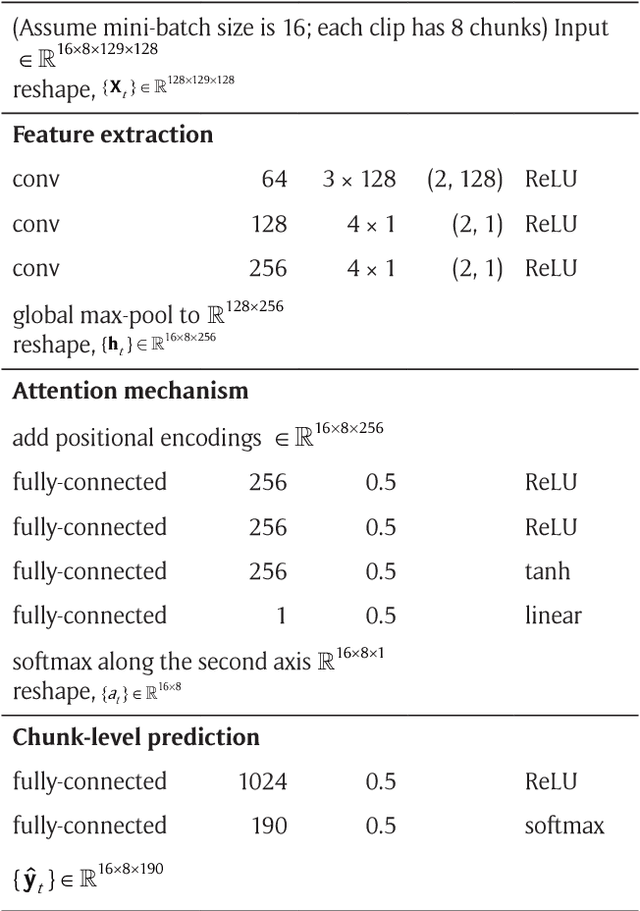

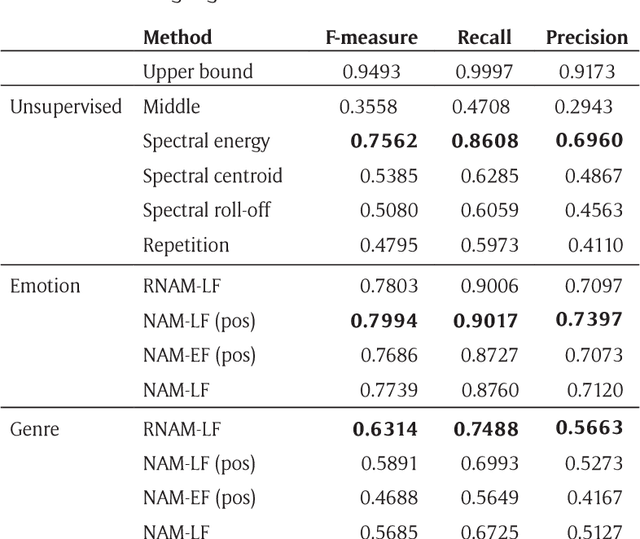

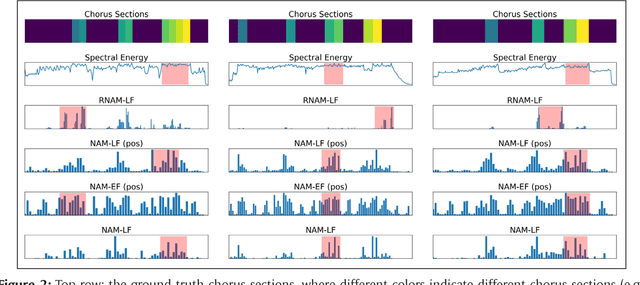

Abstract:The goal of music highlight extraction is to get a short consecutive segment of a piece of music that provides an effective representation of the whole piece. In a previous work, we introduced an attention-based convolutional recurrent neural network that uses music emotion classification as a surrogate task for music highlight extraction, for Pop songs. The rationale behind that approach is that the highlight of a song is usually the most emotional part. This paper extends our previous work in the following two aspects. First, methodology-wise we experiment with a new architecture that does not need any recurrent layers, making the training process faster. Moreover, we compare a late-fusion variant and an early-fusion variant to study which one better exploits the attention mechanism. Second, we conduct and report an extensive set of experiments comparing the proposed attention-based methods against a heuristic energy-based method, a structural repetition-based method, and a few other simple feature-based methods for this task. Due to the lack of public-domain labeled data for highlight extraction, following our previous work we use the RWC POP 100-song data set to evaluate how the detected highlights overlap with any chorus sections of the songs. The experiments demonstrate the effectiveness of our methods over competing methods. For reproducibility, we open source the code and pre-trained model at https://github.com/remyhuang/pop-music-highlighter/.

Generating Music Medleys via Playing Music Puzzle Games

Nov 17, 2017

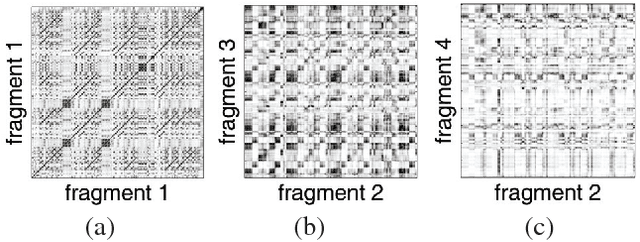

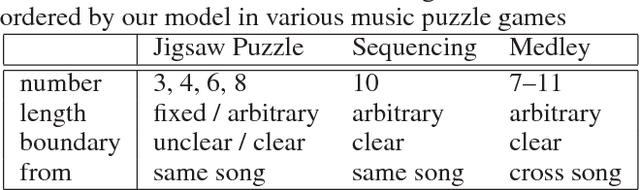

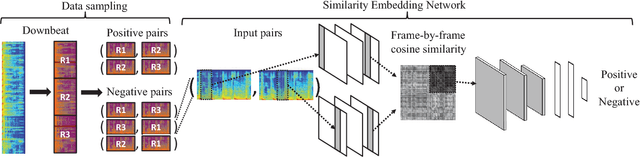

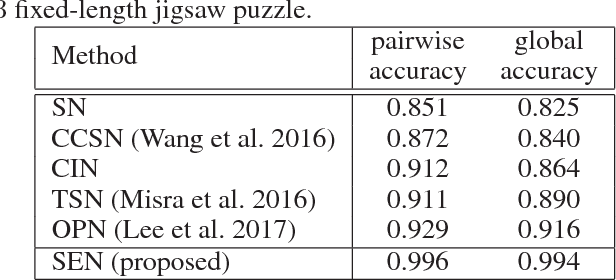

Abstract:Generating music medleys is about finding an optimal permutation of a given set of music clips. Toward this goal, we propose a self-supervised learning task, called the music puzzle game, to train neural network models to learn the sequential patterns in music. In essence, such a game requires machines to correctly sort a few multisecond music fragments. In the training stage, we learn the model by sampling multiple non-overlapping fragment pairs from the same songs and seeking to predict whether a given pair is consecutive and is in the correct chronological order. For testing, we design a number of puzzle games with different difficulty levels, the most difficult one being music medley, which requiring sorting fragments from different songs. On the basis of state-of-the-art Siamese convolutional network, we propose an improved architecture that learns to embed frame-level similarity scores computed from the input fragment pairs to a common space, where fragment pairs in the correct order can be more easily identified. Our result shows that the resulting model, dubbed as the similarity embedding network (SEN), performs better than competing models across different games, including music jigsaw puzzle, music sequencing, and music medley. Example results can be found at our project website, https://remyhuang.github.io/DJnet.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge