Young-Keun Kim

Developing a Compressed Object Detection Model based on YOLOv4 for Deployment on Embedded GPU Platform of Autonomous System

Aug 01, 2021

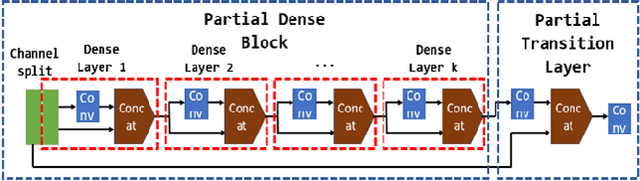

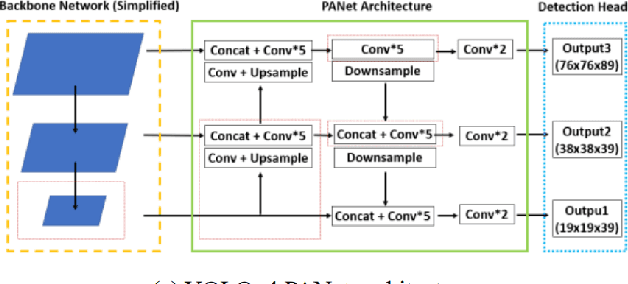

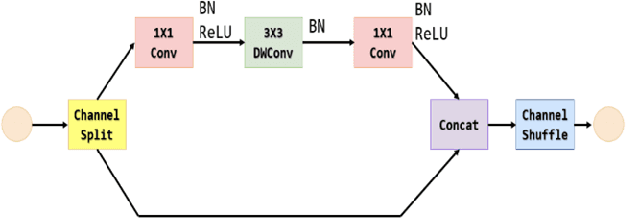

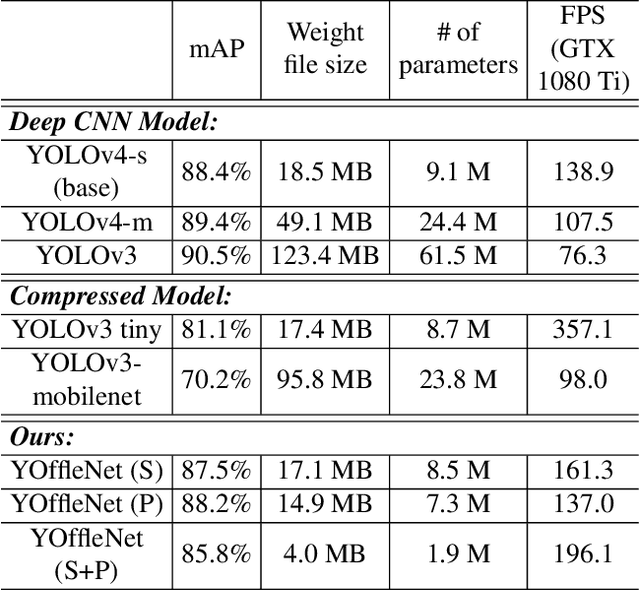

Abstract: The latest CNN-based object detection models are quite accurate but require a high-performance GPU to run in real time. They remain heavy in terms of memory size and speed for an embedded system with limited memory space. Since object detection for an autonomous system runs on an embedded processor, it is preferable to make the detection network as light as possible while preserving the detection accuracy. There are several popular lightweight detection models, but their accuracy is too low for safe driving applications. Therefore, this paper proposes a new object detection model, referred to as YOffleNet, which is compressed at a high ratio while minimizing the accuracy loss for real-time and safe driving applications on an autonomous system. The backbone network architecture is based on YOLOv4, but the network is compressed greatly by replacing the high-calculation-load CSP DenseNet with the lighter modules of ShuffleNet. Experiments with the KITTI dataset showed that the proposed YOffleNet is compressed by a factor of 4.7 relative to YOLOv4-s and can run as fast as 46 FPS on an embedded GPU system (NVIDIA Jetson AGX Xavier). Despite the high compression ratio, the accuracy is reduced only slightly, to 85.8% mAP, which is only 2.6% lower than that of YOLOv4-s. Thus, the proposed network shows high potential for deployment on the embedded system of an autonomous system for real-time and accurate object detection applications.
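The abstract does not detail which ShuffleNet modules YOffleNet adopts. As a rough illustration only, the sketch below shows the channel shuffle operation that ShuffleNet is generally known for, which lets cheap grouped convolutions exchange information across groups. The function name `channel_shuffle` and the list-of-channels representation are assumptions made for this example and are not taken from the paper.

```python
def channel_shuffle(channels, groups):
    """Interleave channels across groups (ShuffleNet-style channel shuffle).

    `channels` is a plain list standing in for the channel axis of a feature
    map; this is an illustrative stand-in, not the paper's implementation.
    """
    n = len(channels)
    if groups <= 0 or n % groups != 0:
        raise ValueError("channel count must be divisible by the number of groups")
    per_group = n // groups
    # Equivalent to reshaping to (groups, per_group), transposing, and flattening.
    return [channels[g * per_group + i]
            for i in range(per_group)
            for g in range(groups)]


# Six channels in two groups: [0, 1, 2] and [3, 4, 5].
shuffled = channel_shuffle(list(range(6)), groups=2)
print(shuffled)  # [0, 3, 1, 4, 2, 5]
```

After the shuffle, each consecutive block of channels contains members of every original group, so the next grouped convolution sees features from all groups.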

EEG-Inception: An Accurate and Robust End-to-End Neural Network for EEG-based Motor Imagery Classification

Feb 01, 2021

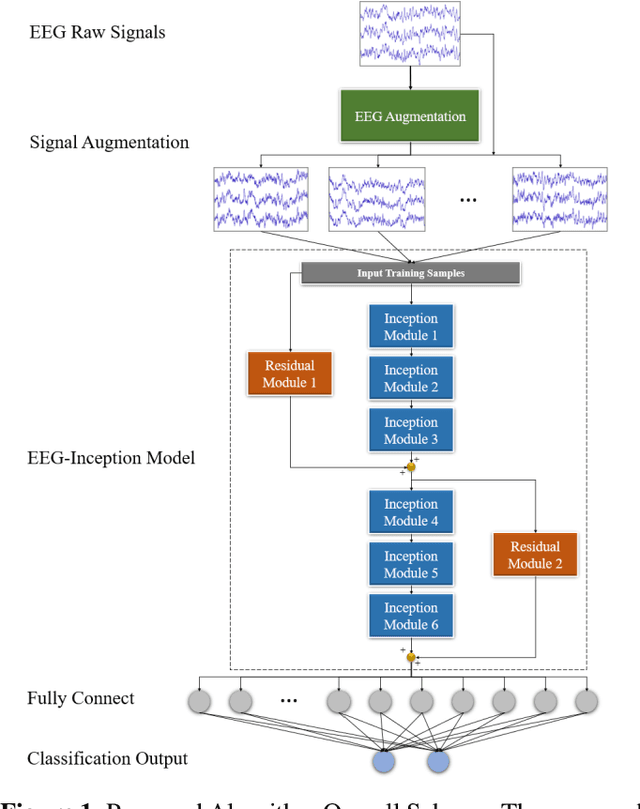

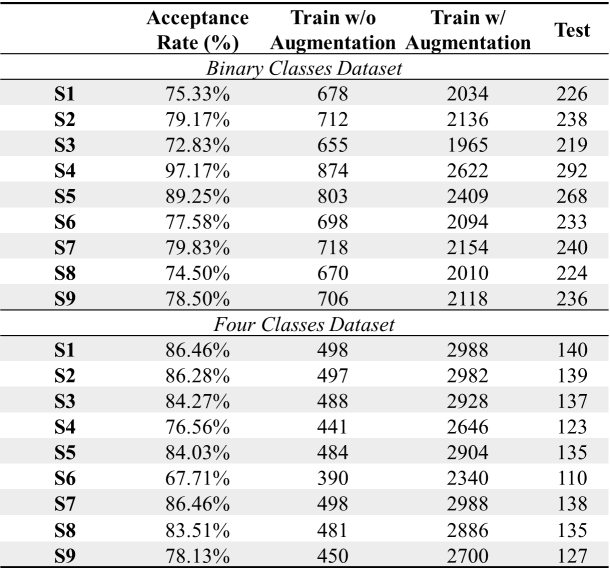

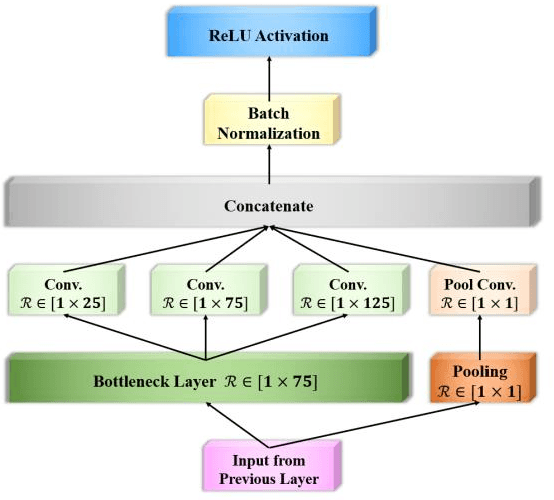

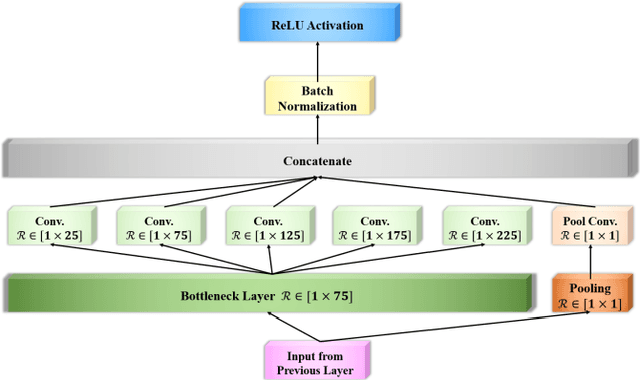

Abstract: Classification of EEG-based motor imagery (MI) is a crucial non-invasive application in brain-computer interface (BCI) research. This paper proposes a novel convolutional neural network (CNN) architecture for accurate and robust EEG-based MI classification that outperforms the state-of-the-art methods. The proposed CNN model, namely EEG-Inception, is built on the backbone of the Inception-Time network, which has been shown to be highly efficient and accurate for time-series classification. The proposed network is also an end-to-end classifier, as it takes the raw EEG signals as input and does not require complex EEG signal preprocessing. Furthermore, this paper proposes a novel data augmentation method for EEG signals that enhances the accuracy by at least 3% and reduces overfitting with limited BCI datasets. The proposed model outperforms all the state-of-the-art methods by achieving average accuracies of 88.4% and 88.6% on the 2008 BCI Competition IV 2a (four-class) and 2b (binary-class) datasets, respectively. Testing a sample takes less than 0.025 seconds, which is suitable for real-time processing. Moreover, the standard deviation of the classification accuracy across nine different subjects reaches the lowest value of 5.5 for the 2b dataset and 7.1 for the 2a dataset, which validates that the proposed method is highly robust. From the experimental results, it can be inferred that the EEG-Inception network exhibits strong potential as a subject-independent classifier for EEG-based MI tasks.

FRDet: Balanced and Lightweight Object Detector based on Fire-Residual Modules for Embedded Processor of Autonomous Driving

Nov 16, 2020

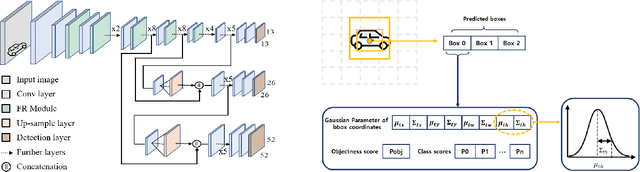

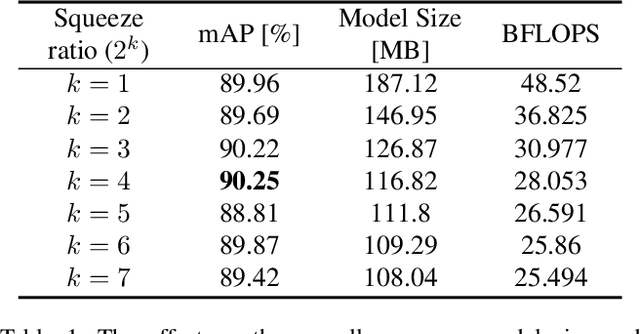

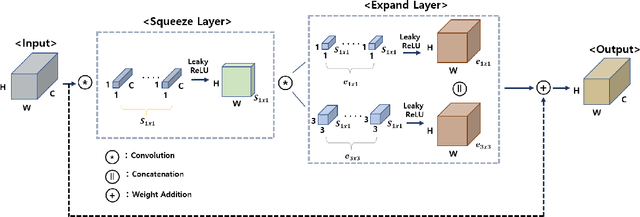

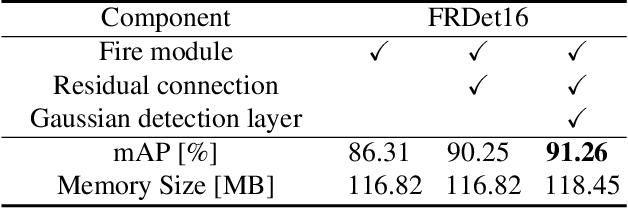

Abstract: For deployment on an embedded processor for autonomous driving, the object detection network should satisfy the requirements of accuracy, real-time inference, and light model size all at once. Conventional deep CNN-based detectors aim for high accuracy, making their model size heavy for an embedded system with limited memory space. In contrast, lightweight object detectors are greatly compressed but at a significant sacrifice of accuracy. Therefore, we propose FRDet, a lightweight one-stage object detector that is balanced to satisfy all the constraints of accuracy, model size, and real-time processing on an embedded GPU processor for autonomous driving applications. Our network aims to maximize the compression of the model while achieving or surpassing a YOLOv3 level of accuracy. This paper proposes the Fire-Residual (FR) module to design a lightweight network with low accuracy loss by adapting fire modules with residual skip connections. In addition, Gaussian uncertainty modeling of the bounding box is applied to further enhance the localization accuracy. Experiments on the KITTI dataset showed that FRDet reduced the memory size by 50.8% while achieving 1.12% higher mAP than YOLOv3. Moreover, the real-time detection speed reached 31.3 FPS on an embedded GPU board (NVIDIA Xavier). The proposed network achieved higher compression with comparable accuracy compared to other deep CNN object detectors while showing higher accuracy than the lightweight detector baselines. Therefore, the proposed FRDet is a well-balanced and efficient object detector for practical application in autonomous driving that satisfies all the criteria of accuracy, real-time inference, and light model size.
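To give a feel for why fire modules shrink a network, the following sketch counts convolution weights for a generic SqueezeNet-style fire module (a 1x1 squeeze layer followed by parallel 1x1 and 3x3 expand layers) against a plain 3x3 convolution with the same input and output widths. The channel sizes are hypothetical values chosen for illustration; the abstract does not give FRDet's actual layer configuration, and biases are ignored.

```python
def conv_params(c_in, c_out, kernel):
    """Weight count of a kernel x kernel convolution, ignoring biases."""
    return kernel * kernel * c_in * c_out


def fire_params(c_in, squeeze, expand1x1, expand3x3):
    """Weight count of a generic fire module (squeeze, then two expand branches)."""
    return (conv_params(c_in, squeeze, 1)
            + conv_params(squeeze, expand1x1, 1)
            + conv_params(squeeze, expand3x3, 3))


# Hypothetical widths: 128 channels in, 64 + 64 = 128 channels out.
plain = conv_params(128, 128, 3)
fire = fire_params(128, squeeze=16, expand1x1=64, expand3x3=64)

print(plain)         # 147456
print(fire)          # 12288
print(plain / fire)  # 12.0
```

Because the output width equals the input width in this example, an identity residual skip connection around the module would add no extra weights.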

Accurate Alignment Inspection System for Low-resolution Automotive and Mobility LiDAR

Aug 24, 2020

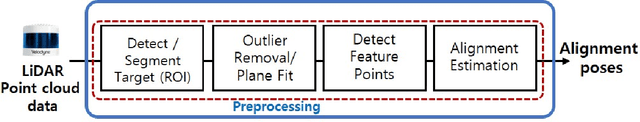

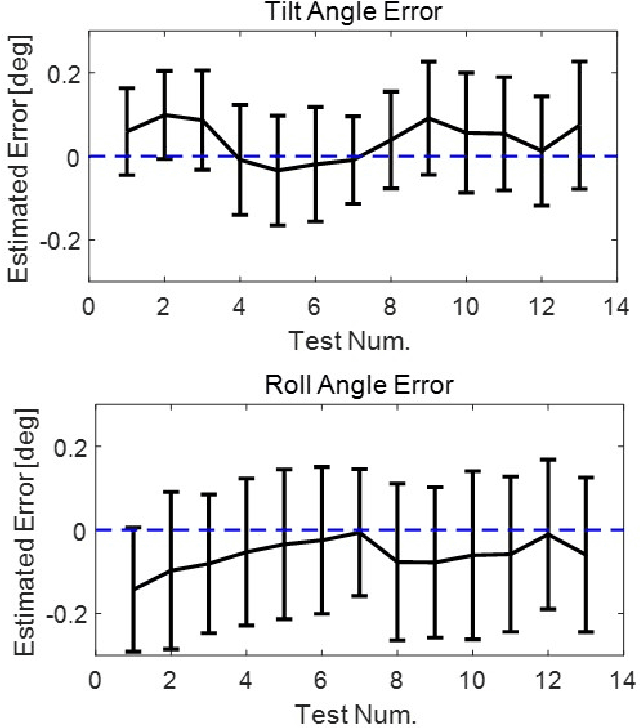

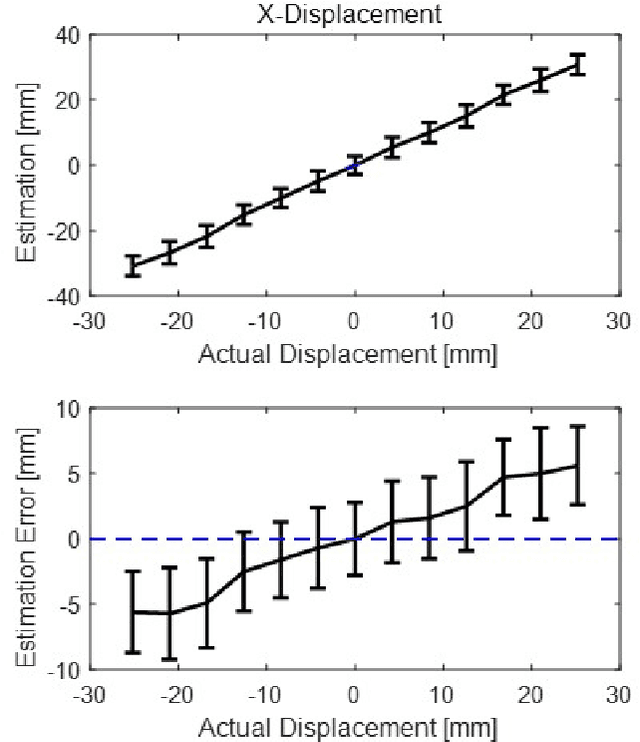

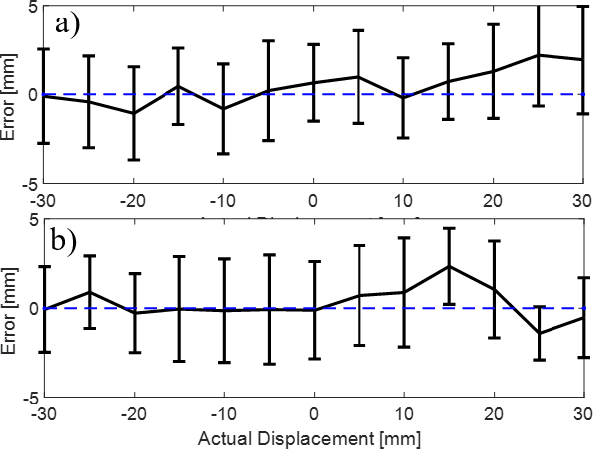

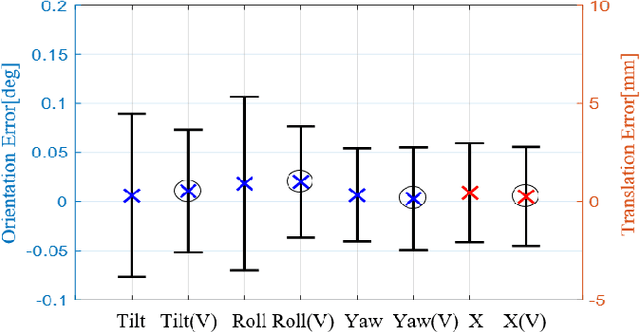

Abstract: A LiDAR misalignment as small as a few degrees can cause a significant error in obstacle detection and mapping, leading to safety and quality issues. In this paper, an accurate inspection system is proposed for estimating the LiDAR alignment error after sensor attachment on a mobility system such as a vehicle or robot. The proposed method uses only a single target board at a fixed position to estimate the three orientations (roll, tilt, and yaw) and the horizontal position of the LiDAR attachment with sub-degree and millimeter-level accuracy. After the proposed preprocessing steps, the feature beam points that are closest to each target corner are extracted and used to calculate the sensor attachment pose with respect to the target board frame using a nonlinear optimization method with a low computational cost. The performance of the proposed method is evaluated using a test bench that can control the reference yaw and horizontal translation of the LiDAR within ranges of 3 degrees and 30 millimeters, respectively. The experimental results for a low-resolution 16-channel LiDAR (Velodyne VLP-16) confirmed that misalignment could be estimated with an accuracy within 0.2 degrees and 4 mm. The high accuracy and simplicity of the proposed system make it practical for large-scale industrial applications such as automobile or robot manufacturing processes that inspect the sensor attachment for safety quality control.
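As a back-of-the-envelope illustration of why small angular errors matter, the sketch below converts a yaw misalignment into the lateral displacement of a detected point at a given range using simple trigonometry. The 50 m range and the helper name `lateral_error` are assumptions for this example; only the 0.2 degree figure comes from the abstract.

```python
import math


def lateral_error(range_m, yaw_error_deg):
    """Sideways displacement (m) of a point at `range_m` caused by a yaw error."""
    return range_m * math.tan(math.radians(yaw_error_deg))


# Hypothetical 50 m obstacle distance.
for yaw_deg in (0.2, 1.0, 3.0):
    print(f"{yaw_deg:>4} deg -> {lateral_error(50.0, yaw_deg):.3f} m")
```

Under these assumptions, a one-degree yaw error shifts a point at 50 m sideways by close to 0.9 m, while the reported 0.2 degree estimation accuracy corresponds to a shift of under 0.2 m.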

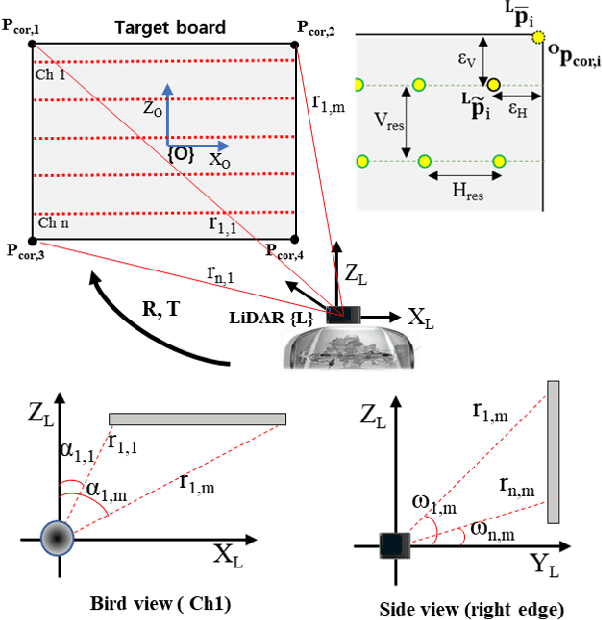

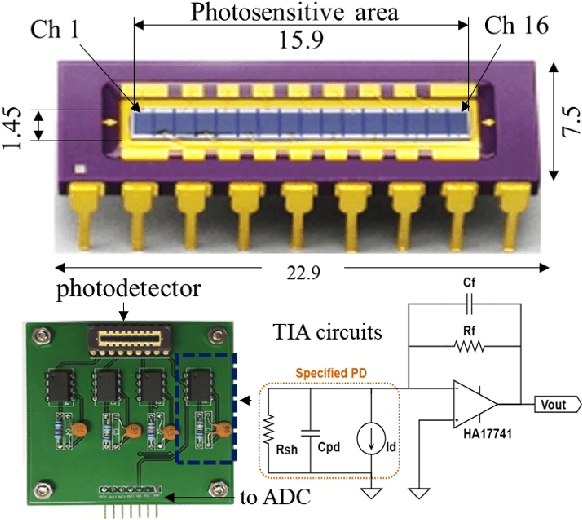

Automatic LiDAR Extrinsic Calibration System using Photodetector and Planar Board for Large-scale Applications

Aug 24, 2020

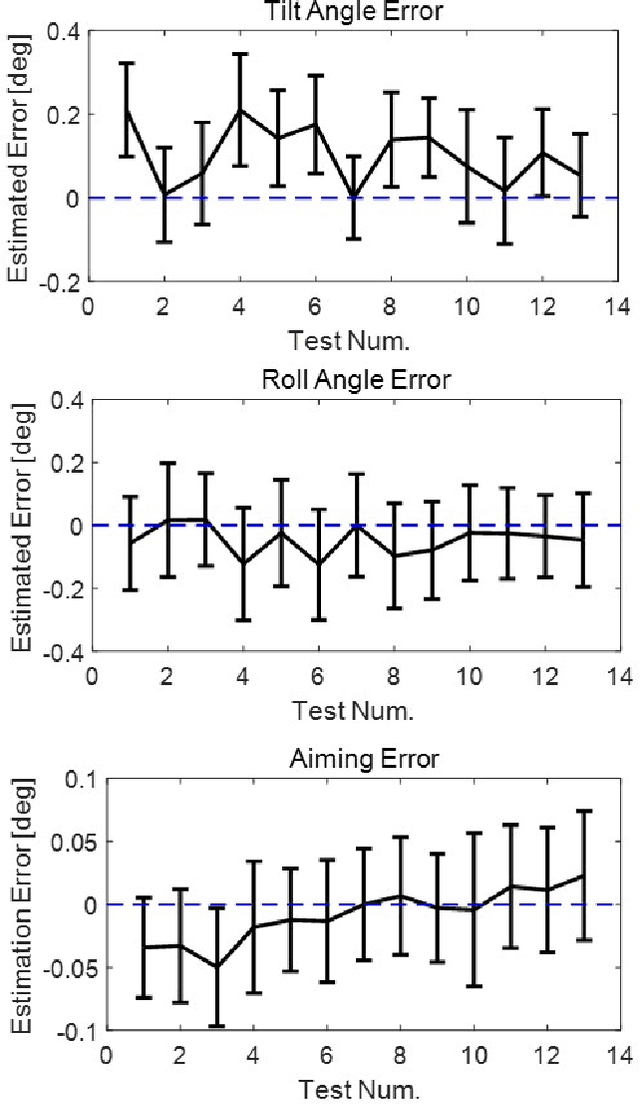

Abstract: This paper presents a novel automatic calibration system to estimate the extrinsic parameters of a LiDAR mounted on a mobile platform for sensor misalignment inspection in the large-scale production of highly automated vehicles. To obtain sub-degree and sub-centimeter accuracy in extrinsic calibration, this study proposes a new concept of a target board with embedded photodetector arrays, named the PD-target system, to find the precise positions of the corresponding laser beams on the target surface. Furthermore, the proposed system requires only a simple target board design at a fixed pose at close range, making it readily applicable in the automobile manufacturing environment. The experimental evaluation of the proposed system on a low-resolution LiDAR showed that the LiDAR offset pose can be estimated within 0.1 degree and 3 mm levels of precision. The high accuracy and simplicity of the proposed calibration system make it practical for large-scale applications for the reliability and safety of autonomous systems.
