Yongli Sun

Collaborative Attention Network for Person Re-identification

Nov 29, 2019

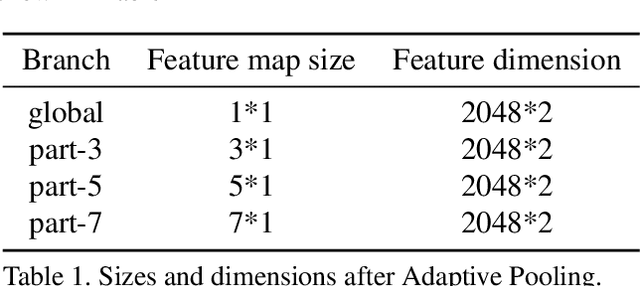

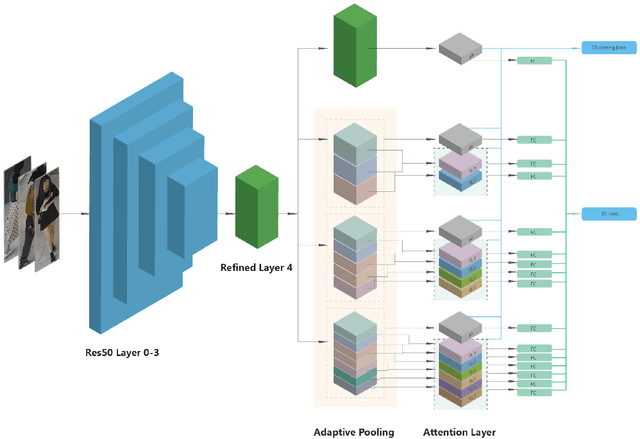

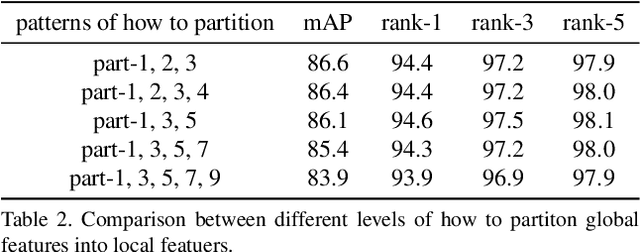

Abstract: Jointly using global and local features to improve model accuracy is becoming a popular approach to the person re-identification (Re-ID) problem, because earlier methods that rely on global features alone have very limited capacity to capture discriminative local patterns in the resulting feature representation. Existing works that attempt to collect local patterns either explicitly slice the global feature into several local pieces in a handcrafted way, or apply an attention mechanism to implicitly infer the importance of different local regions. In this paper, we show that explicitly learning the importance of small local parts and of part combinations further improves the final feature representation for Re-ID. Specifically, we first separate the global feature into multiple local slices at different scales with a proposed multi-branch structure. We then introduce the Collaborative Attention Network (CAN) to automatically learn how to combine features from adjacent slices. In this way, the combination preserves the intrinsic relation between adjacent features across local regions and scales, without the information loss caused by partitioning the global features. Experimental results on several widely used public datasets, including Market-1501, DukeMTMC-ReID and CUHK03, show that the proposed method outperforms many existing state-of-the-art methods.
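The two steps described in the abstract (multi-scale slicing, then attention-weighted combination of adjacent slices) can be illustrated with a small sketch. This is not the paper's architecture: the abstract gives no layer details, so the function names (`slice_and_pool`, `combine_adjacent`, `multi_scale_features`), the average pooling, the softmax scoring with a single weight vector `score_weights`, and the choice of scales are all assumptions made for illustration. The input is assumed to be a feature map already pooled over width, given as a list of per-row channel vectors.

```python
import math


def slice_and_pool(rows, num_slices):
    """Split the row vectors into horizontal stripes and average-pool each stripe."""
    height = len(rows)
    if height % num_slices != 0:
        raise ValueError("height must be divisible by num_slices")
    step = height // num_slices
    channels = len(rows[0])
    pooled = []
    for s in range(num_slices):
        stripe = rows[s * step:(s + 1) * step]
        pooled.append([sum(r[c] for r in stripe) / step for c in range(channels)])
    return pooled


def softmax(scores):
    """Numerically stable softmax over a short list of scores."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def combine_adjacent(slices, score_weights):
    """Merge each pair of neighbouring slices with softmax attention weights.

    score_weights is a hypothetical stand-in for learned parameters: each
    slice is scored by a dot product with it (an assumption, not the paper's
    scoring function).
    """
    combined = []
    for a, b in zip(slices, slices[1:]):
        scores = [sum(w * x for w, x in zip(score_weights, v)) for v in (a, b)]
        weight_a, weight_b = softmax(scores)
        combined.append([weight_a * x + weight_b * y for x, y in zip(a, b)])
    return combined


def multi_scale_features(rows, scales, score_weights):
    """Collect slice features and adjacent-slice combinations at every scale."""
    features = []
    for num_slices in scales:
        slices = slice_and_pool(rows, num_slices)
        features.extend(slices)
        features.extend(combine_adjacent(slices, score_weights))
    return features


# Toy input: 6 rows, 2 channels per row.
rows = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0], [0.0, 1.0], [2.0, 2.0], [2.0, 2.0]]
features = multi_scale_features(rows, scales=(1, 2, 3), score_weights=[0.0, 0.0])
```

With scales 1, 2 and 3, the sketch yields the slice features at each scale plus one combined feature per adjacent pair. Setting `score_weights` to zeros makes the attention uniform, so each combination reduces to the plain average of its two slices; in a trained model the weights would instead be learned.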
