Ya-Qiu Jin

SAR-NeRF: Neural Radiance Fields for Synthetic Aperture Radar Multi-View Representation

Jul 11, 2023

Abstract: SAR images are highly sensitive to observation configurations and vary significantly across viewing angles, which makes their anisotropic features challenging to represent and learn. As a result, deep learning methods often generalize poorly across different view angles. Inspired by the concept of neural radiance fields (NeRF), this study combines SAR imaging mechanisms with neural networks to propose a novel NeRF model for SAR image generation. Following the mapping and projection principles, a set of SAR images is modeled implicitly as a function of attenuation coefficients and scattering intensities in the 3D imaging space through a differentiable rendering equation. SAR-NeRF is then constructed to learn the distribution of attenuation coefficients and scattering intensities of voxels, where the vectorized form of the 3D voxel SAR rendering equation and the sampling relationship between the 3D space voxels and the 2D view ray grids are analytically derived. Through quantitative experiments on various datasets, we thoroughly assess the multi-view representation and generalization capabilities of SAR-NeRF. Additionally, it is found that a SAR-NeRF-augmented dataset can significantly improve SAR target classification performance under a few-shot learning setup, where a 10-type classification accuracy of 91.6% can be achieved by using only 12 images per class.

Reciprocal Translation between SAR and Optical Remote Sensing Images with Cascaded-Residual Adversarial Networks

Jan 24, 2019

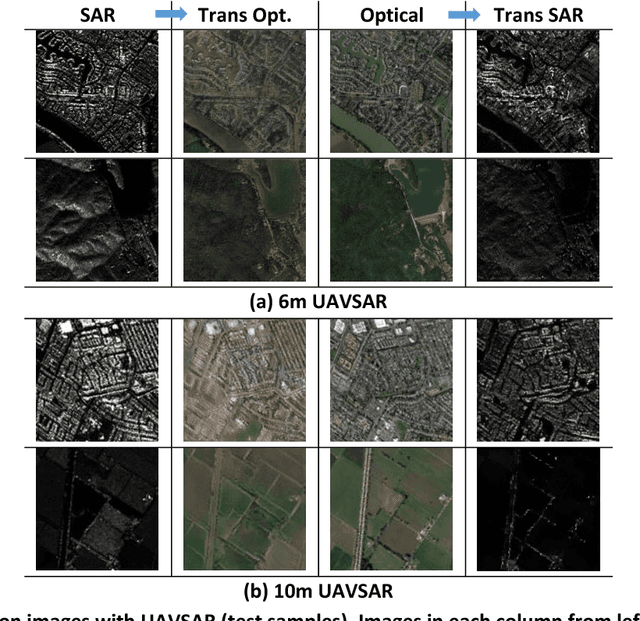

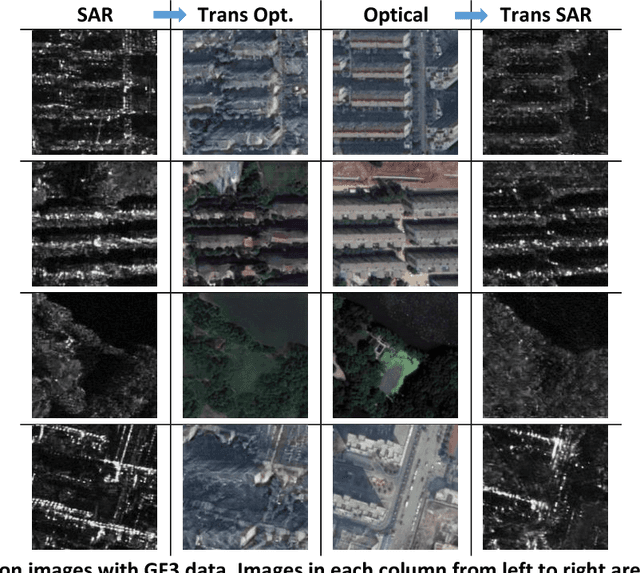

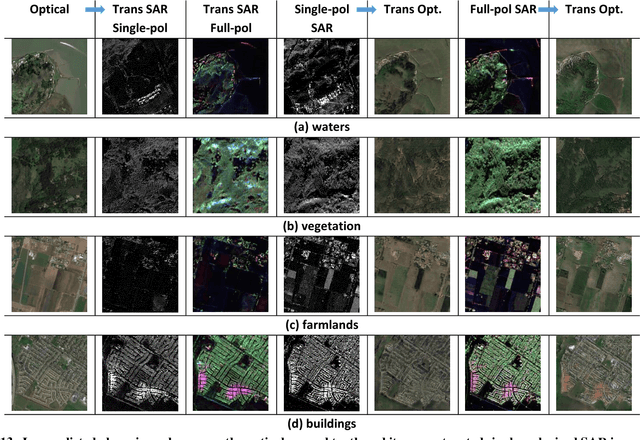

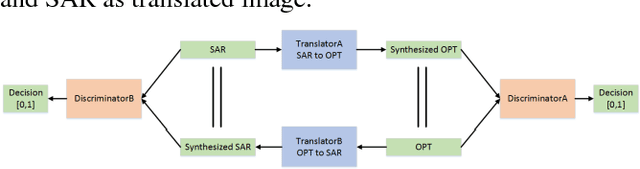

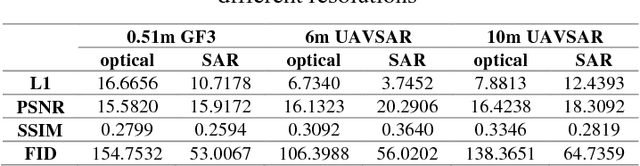

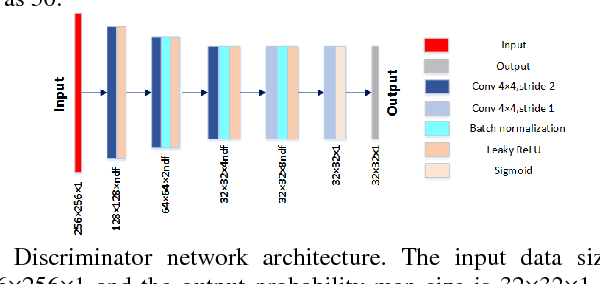

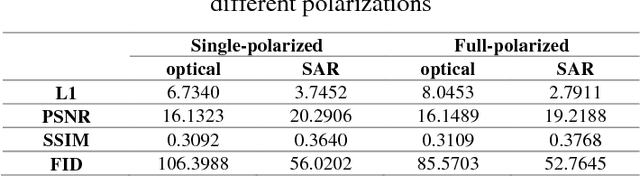

Abstract: Despite the advantages of all-weather and all-day high-resolution imaging, synthetic aperture radar (SAR) images are much less viewed and used by the general public because human vision is not adapted to microwave scattering phenomena. However, expert interpreters can be trained by comparing side-by-side SAR and optical images to learn the mapping rules from SAR to optical. This paper attempts to develop machine intelligence that is trainable with large volumes of co-registered SAR and optical images to translate SAR images into optical versions for assisted SAR image interpretation. Reciprocal SAR-optical image translation is a challenging task because it is raw data translation between two physically very different sensing modalities. This paper proposes a novel reciprocal adversarial network scheme in which cascaded residual connections and a hybrid L1-GAN loss are employed. It is trained and tested on both spaceborne GF-3 and airborne UAVSAR images. Results are presented for datasets of different resolutions and polarizations and compared with other state-of-the-art methods. The FID is used to quantitatively evaluate the translation performance. The possibility of unsupervised learning with unpaired SAR and optical images is also explored. Results show that the proposed translation network works well under many scenarios and could potentially be used for assisted SAR interpretation.

Translating SAR to Optical Images for Assisted Interpretation

Jan 08, 2019

Abstract: Despite the advantages of all-weather and all-day high-resolution imaging, SAR remote sensing images are much less viewed and used by the general public because human vision is not adapted to microwave scattering phenomena. However, expert interpreters can be trained by comparing side-by-side SAR and optical images to learn the translation rules from SAR to optical. This paper attempts to develop machine intelligence that is trainable with large volumes of co-registered SAR and optical images to translate SAR images into optical versions for assisted SAR interpretation. A novel reciprocal GAN scheme is proposed for this translation task. It is trained and tested on both spaceborne GF-3 and airborne UAVSAR images. Comparisons and analyses are presented for datasets of different resolutions and polarizations. Results show that the proposed translation network works well under many scenarios and could potentially be used for assisted SAR interpretation.

SAR Image Colorization: Converting Single-Polarization to Fully Polarimetric Using Deep Neural Networks

Jul 22, 2017

Abstract: A deep neural network-based method is proposed to convert a single-polarization grayscale SAR image to a fully polarimetric one. It consists of two components: a feature extractor network that extracts hierarchical multi-scale spatial features of the grayscale SAR image, followed by a feature translator network that maps spatial features to polarimetric features, from which the polarimetric covariance matrix of each pixel can be reconstructed. Both qualitative and quantitative experiments with real fully polarimetric data are conducted to show the efficacy of the proposed method. The reconstructed full-pol SAR image agrees well with the true full-pol image. Existing PolSAR applications such as model-based decomposition and unsupervised classification can be applied directly to the reconstructed full-pol SAR images. This framework can be easily extended to the reconstruction of full-pol data from compact-pol data. The experimental results also show that the proposed method could potentially be used for interference removal on the cross-polarization channel.
