Xiong-lin Luo

GMM Discriminant Analysis with Noisy Label for Each Class

Jan 25, 2022

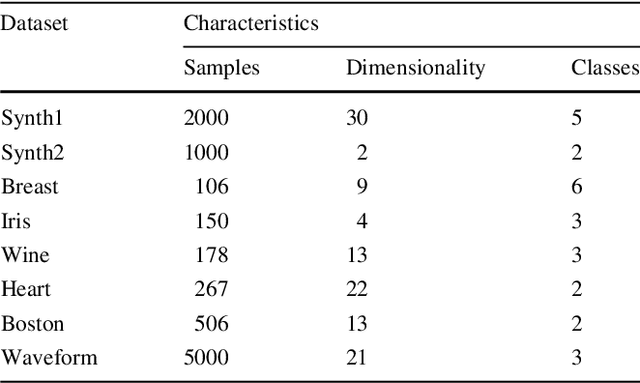

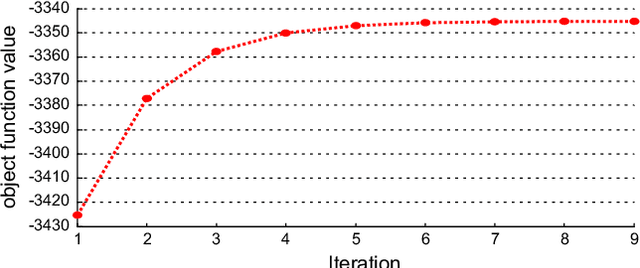

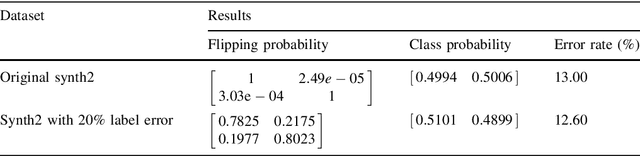

Abstract:Real world datasets often contain noisy labels, and learning from such datasets using standard classification approaches may not produce the desired performance. In this paper, we propose a Gaussian Mixture Discriminant Analysis (GMDA) with noisy label for each class. We introduce flipping probability and class probability and use EM algorithms to solve the discriminant problem with label noise. We also provide the detail proofs of convergence. Experimental results on synthetic and real-world datasets show that the proposed approach notably outperforms other four state-of-art methods.

* 35 pages

Bilevel Online Deep Learning in Non-stationary Environment

Jan 25, 2022Abstract:Recent years have witnessed enormous progress of online learning. However, a major challenge on the road to artificial agents is concept drift, that is, the data probability distribution would change where the data instance arrives sequentially in a stream fashion, which would lead to catastrophic forgetting and degrade the performance of the model. In this paper, we proposed a new Bilevel Online Deep Learning (BODL) framework, which combine bilevel optimization strategy and online ensemble classifier. In BODL algorithm, we use an ensemble classifier, which use the output of different hidden layers in deep neural network to build multiple base classifiers, the important weights of the base classifiers are updated according to exponential gradient descent method in an online manner. Besides, we apply the similar constraint to overcome the convergence problem of online ensemble framework. Then an effective concept drift detection mechanism utilizing the error rate of classifier is designed to monitor the change of the data probability distribution. When the concept drift is detected, our BODL algorithm can adaptively update the model parameters via bilevel optimization and then circumvent the large drift and encourage positive transfer. Finally, the extensive experiments and ablation studies are conducted on various datasets and the competitive numerical results illustrate that our BODL algorithm is a promising approach.

Multi-view Subspace Adaptive Learning via Autoencoder and Attention

Jan 01, 2022

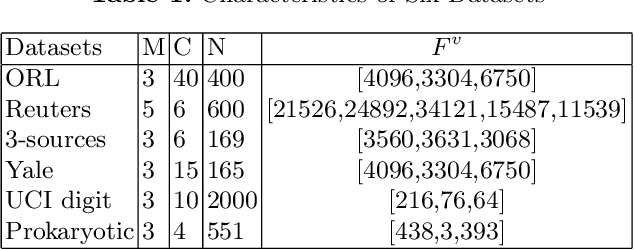

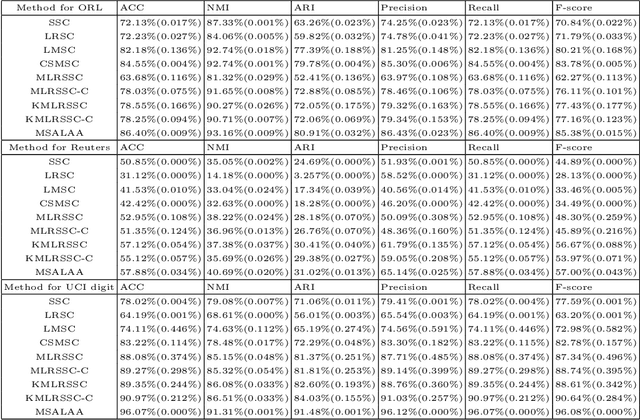

Abstract:Multi-view learning can cover all features of data samples more comprehensively, so multi-view learning has attracted widespread attention. Traditional subspace clustering methods, such as sparse subspace clustering (SSC) and low-ranking subspace clustering (LRSC), cluster the affinity matrix for a single view, thus ignoring the problem of fusion between views. In our article, we propose a new Multiview Subspace Adaptive Learning based on Attention and Autoencoder (MSALAA). This method combines a deep autoencoder and a method for aligning the self-representations of various views in Multi-view Low-Rank Sparse Subspace Clustering (MLRSSC), which can not only increase the capability to non-linearity fitting, but also can meets the principles of consistency and complementarity of multi-view learning. We empirically observe significant improvement over existing baseline methods on six real-life datasets.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge