Xingguo Jiang

Bi-Mamba4TS: Bidirectional Mamba for Time Series Forecasting

Apr 24, 2024

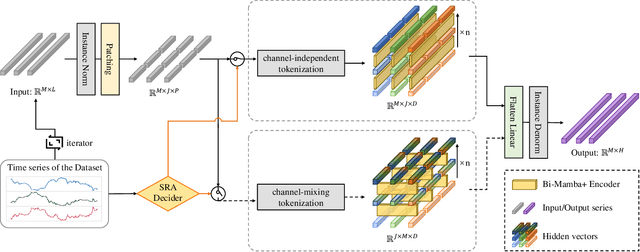

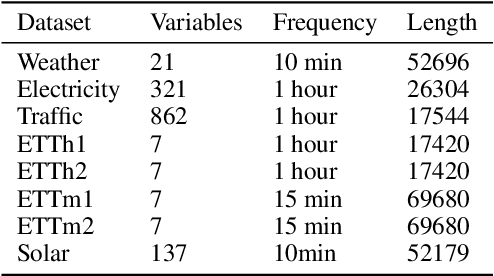

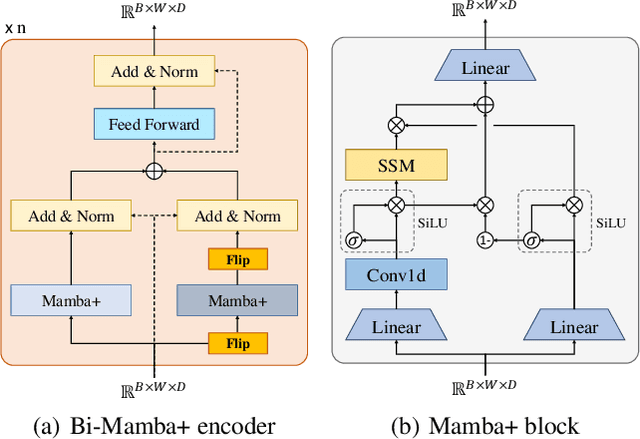

Abstract:Long-term time series forecasting (LTSF) provides longer insights into future trends and patterns. In recent years, deep learning models especially Transformers have achieved advanced performance in LTSF tasks. However, the quadratic complexity of Transformers rises the challenge of balancing computaional efficiency and predicting performance. Recently, a new state space model (SSM) named Mamba is proposed. With the selective capability on input data and the hardware-aware parallel computing algorithm, Mamba can well capture long-term dependencies while maintaining linear computational complexity. Mamba has shown great ability for long sequence modeling and is a potential competitor to Transformer-based models in LTSF. In this paper, we propose Bi-Mamba4TS, a bidirectional Mamba for time series forecasting. To address the sparsity of time series semantics, we adopt the patching technique to enrich the local information while capturing the evolutionary patterns of time series in a finer granularity. To select more appropriate modeling method based on the characteristics of the dataset, our model unifies the channel-independent and channel-mixing tokenization strategies and uses a series-relation-aware decider to control the strategy choosing process. Extensive experiments on seven real-world datasets show that our model achieves more accurate predictions compared with state-of-the-art methods.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge