Xiaozhou Ren

Detecting Lane and Road Markings at A Distance with Perspective Transformer Layers

Mar 19, 2020

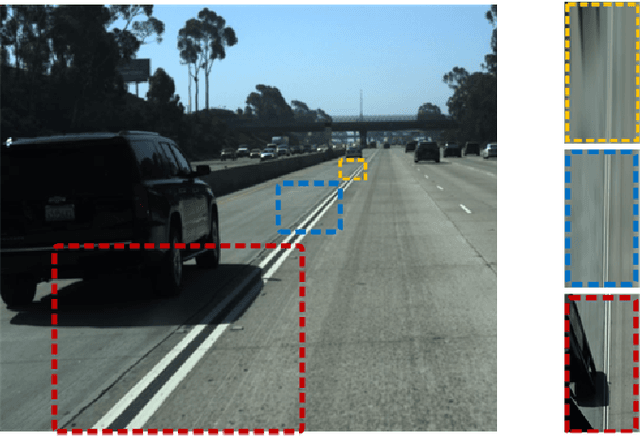

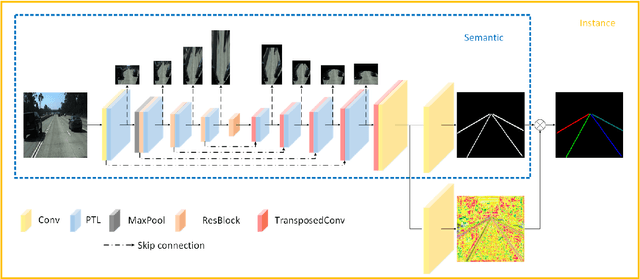

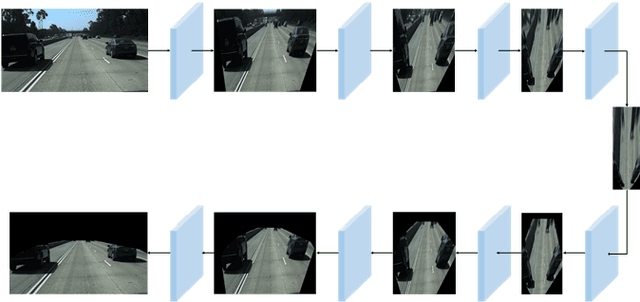

Abstract: Accurate detection of lane and road markings is of great importance for intelligent vehicles. In existing approaches, detection accuracy often degrades with increasing distance. This is because distant lane and road markings occupy only a small number of pixels in the image, and the scales of lane and road markings are inconsistent across distances and perspectives. Inverse Perspective Mapping (IPM) can be used to eliminate the perspective distortion, but its inherent interpolation can lead to artifacts, especially around distant lane and road markings, and thus has a negative impact on the accuracy of lane marking detection and segmentation. To solve this problem, we adopt the Encoder-Decoder architecture of Fully Convolutional Networks and leverage the idea of Spatial Transformer Networks to introduce a novel semantic segmentation neural network. This approach decomposes the IPM process into multiple consecutive differentiable homographic transform layers, which are called "Perspective Transformer Layers". Furthermore, the interpolated feature map is refined by subsequent convolutional layers, which reduces the artifacts and improves the accuracy. The effectiveness of the proposed method in lane marking detection is validated on two public datasets: TuSimple and ApolloScape.
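The idea of splitting one perspective warp into consecutive homographic steps can be illustrated with a minimal NumPy sketch. This is not the authors' implementation: the function name `warp_homography`, the convention that the matrix maps output pixels to input pixels, and the zero padding outside the image are all assumptions made for illustration. It shows only a single bilinear-sampled homography warp of the kind used in Spatial Transformer Networks; the convolutional refinement between steps described in the abstract is omitted.

```python
import numpy as np

def warp_homography(feature, H_out_to_in, out_shape):
    """Warp a 2D feature map with a 3x3 homography using bilinear sampling.

    Assumption: H_out_to_in maps homogeneous output pixel coordinates
    (x, y, 1) to input pixel coordinates. Samples that fall outside the
    input are treated as zero. The third homogeneous coordinate is assumed
    to be non-zero for every output pixel.
    """
    out_h, out_w = out_shape
    in_h, in_w = feature.shape

    # Homogeneous coordinates of every output pixel, shape (3, out_h * out_w).
    ys, xs = np.meshgrid(np.arange(out_h), np.arange(out_w), indexing="ij")
    grid = np.stack([xs, ys, np.ones_like(xs)], axis=0)
    grid = grid.reshape(3, -1).astype(np.float64)

    # Project into the input image and dehomogenize.
    src = H_out_to_in @ grid
    sx = src[0] / src[2]
    sy = src[1] / src[2]

    # Integer corner and fractional offset for bilinear interpolation.
    x0 = np.floor(sx).astype(int)
    y0 = np.floor(sy).astype(int)
    wx = sx - x0
    wy = sy - y0

    def sample(yi, xi):
        # Read feature[yi, xi], returning 0 where the index is out of bounds.
        valid = (xi >= 0) & (xi < in_w) & (yi >= 0) & (yi < in_h)
        values = np.zeros(sx.shape, dtype=np.float64)
        values[valid] = feature[yi[valid], xi[valid]]
        return values

    out = ((1 - wy) * (1 - wx) * sample(y0, x0)
           + (1 - wy) * wx * sample(y0, x0 + 1)
           + wy * (1 - wx) * sample(y0 + 1, x0)
           + wy * wx * sample(y0 + 1, x0 + 1))
    return out.reshape(out_h, out_w)
```

Because the output is a weighted sum of input values with weights that depend smoothly on the sampling coordinates, this kind of warp is differentiable almost everywhere, which is what allows such layers to sit inside a trainable network. Applying the function twice with two matrices approximates one warp with their product, with each extra step adding its own interpolation error that later layers would need to compensate for.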
