William Yik

Enforcing Equity in Neural Climate Emulators

Jun 28, 2024

Abstract: Neural network emulators have become an invaluable tool for a wide variety of climate and weather prediction tasks. While these networks show very promising results, they have no inherent ability to produce equitable predictions: they are not guaranteed to provide uniform prediction quality across any particular class or group of people. This potential for inequitable predictions motivates the need for explicit representations of fairness in these networks. To that end, we draw on methods for enforcing analytical physical constraints in neural networks to bias networks towards more equitable predictions. We demonstrate the promise of this methodology on the task of climate model emulation. Specifically, we propose a custom loss function that penalizes emulators whose prediction quality is unequal across any prespecified regions or categories, here defined using the human development index (HDI). This loss function weighs a standard loss metric, such as mean squared error, against a second metric that captures inequity across the equity category (HDI), allowing the priority of each term to be adjusted before training. Importantly, the loss function does not prescribe a particular definition of equity towards which to bias the network, opening the door to custom fairness metrics. Our results show that neural climate emulators trained with our loss function provide more equitable predictions, and that the equity metric improves as its weighting in the loss function increases. We empirically demonstrate that while there is a tradeoff between accuracy and equity when prioritizing the latter during training, an appropriate choice of the equity-priority hyperparameter can minimize the loss of performance.
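The abstract describes the loss only at a high level. Below is a minimal sketch, assuming the two terms are combined as a convex combination with a hypothetical weight `lam`, that each grid cell is tagged with an integer HDI category, and that inequity is measured as the variance of the per-group mean squared error (one possible fairness metric among many; the paper's own metric may differ). NumPy is used only to show the arithmetic; actual training would need the same expression written in a differentiable framework.

```python
import numpy as np


def equity_loss(y_true, y_pred, group_ids, lam=0.5):
    """Hypothetical equity-aware loss: (1 - lam) * MSE + lam * inequity.

    y_true, y_pred: arrays of shape (n_samples, n_cells).
    group_ids: integer array of shape (n_cells,), e.g. an HDI category per grid cell.
    lam: assumed weight in [0, 1] setting the priority of the equity term.
    """
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    group_ids = np.asarray(group_ids)

    sq_err = (y_true - y_pred) ** 2
    mse = sq_err.mean()  # standard accuracy term

    # Mean squared error within each group (all samples, that group's cells)
    group_mse = np.array(
        [sq_err[:, group_ids == g].mean() for g in np.unique(group_ids)]
    )
    # Assumed inequity metric: spread of per-group errors (0 when all groups are equal)
    inequity = group_mse.var()

    return (1.0 - lam) * mse + lam * inequity


# Toy example: group 0 cells are predicted badly, group 1 cells perfectly
y_true = np.zeros((2, 4))
y_pred = np.array([[1.0, 1.0, 0.0, 0.0],
                   [1.0, 1.0, 0.0, 0.0]])
hdi_group = np.array([0, 0, 1, 1])
print(equity_loss(y_true, y_pred, hdi_group, lam=0.5))  # 0.375
```

Setting `lam=0` recovers plain MSE (0.5 in the toy example), while larger values shift weight toward equalizing error across groups, mirroring the accuracy/equity tradeoff the abstract describes.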

Southern Ocean Dynamics Under Climate Change: New Knowledge Through Physics-Guided Machine Learning

Oct 21, 2023

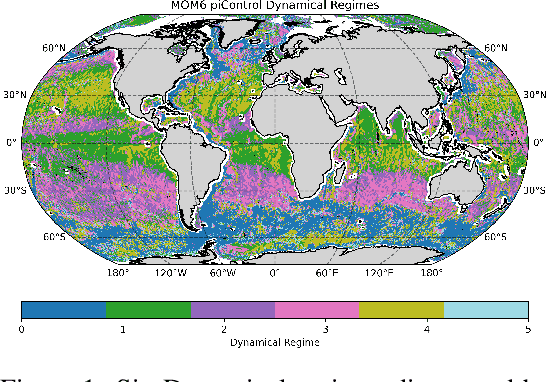

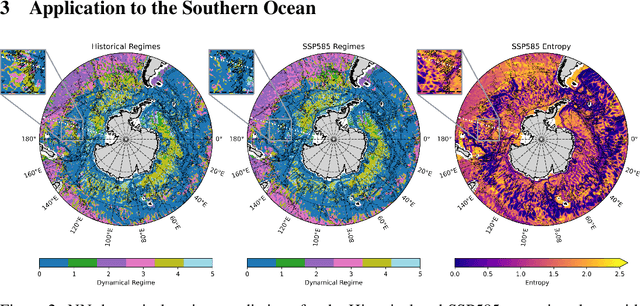

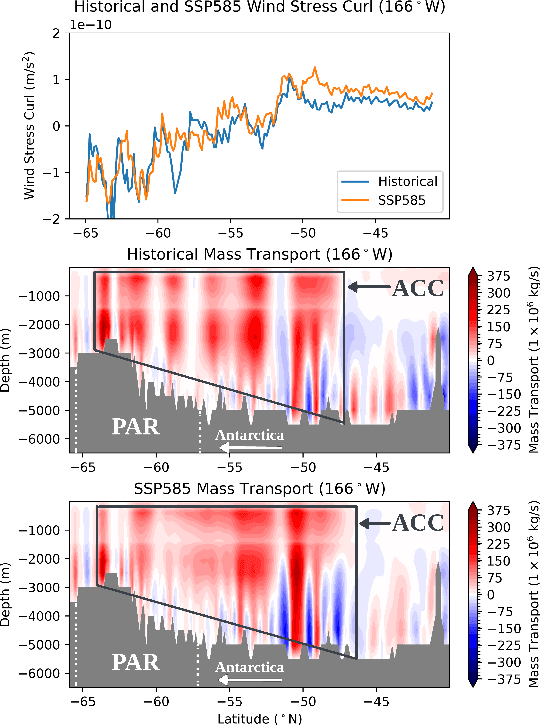

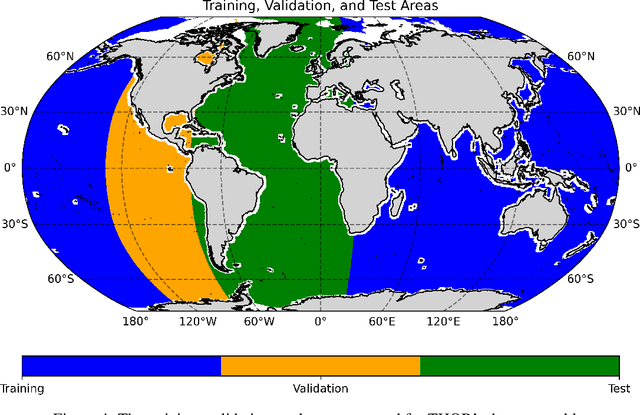

Abstract: Complex ocean systems such as the Antarctic Circumpolar Current play key roles in the climate, and current models predict shifts in their strength and area under climate change. However, the physical processes underlying these changes are not well understood, in part because changes in ocean physics are difficult to characterize and track in complex models. To understand changes in the Antarctic Circumpolar Current, we extend the method Tracking global Heating with Ocean Regimes (THOR) to a mesoscale eddy-permitting climate model and identify regions of the ocean characterized by similar physics, called dynamical regimes, using readily accessible fields from climate models. To do so, we cluster grid cells into dynamical regimes and train an ensemble of neural networks to predict these regimes and track them under climate change. Finally, we leverage this new knowledge to elucidate the dynamics of regime shifts. We illustrate the value of this high-resolution version of THOR, which permits mesoscale turbulence, with a case study of the Antarctic Circumpolar Current and its interactions with the Pacific-Antarctic Ridge. In this region, THOR reveals a shift in dynamical regime under climate change, driven by changes in wind stress and interactions with bathymetry. Using this knowledge to guide further exploration, we find that as the Antarctic Circumpolar Current shifts north under intensifying wind stress, the dominant dynamical role of bathymetry weakens and the flow strengthens.
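The two machine-learning steps named in the abstract (clustering grid cells into regimes, then combining an ensemble of regime predictors) can be sketched as follows, under loudly stated assumptions: cells are clustered with plain k-means on hypothetical per-cell feature vectors (the abstract does not name the clustering algorithm or its inputs), and the ensemble is combined by averaging each member's per-cell class probabilities, with the fraction of members agreeing with the ensemble choice reported as a rough confidence. The names `kmeans` and `ensemble_regime` are illustrative only, not THOR's interface.

```python
import numpy as np


def _assign(features, centroids):
    # Distance from every cell to every centroid, shape (n_cells, k)
    dists = np.linalg.norm(features[:, None, :] - centroids[None, :, :], axis=-1)
    return dists.argmin(axis=1)


def kmeans(features, k, n_iter=100, seed=0):
    """Plain k-means: label each grid cell (row of `features`) with one of k regimes."""
    features = np.asarray(features, dtype=float)
    rng = np.random.default_rng(seed)
    centroids = features[rng.choice(len(features), size=k, replace=False)]
    for _ in range(n_iter):
        labels = _assign(features, centroids)
        # Move each centroid to the mean of its cells; keep it if the cluster is empty
        new_centroids = np.array([
            features[labels == j].mean(axis=0) if np.any(labels == j) else centroids[j]
            for j in range(k)
        ])
        if np.allclose(new_centroids, centroids):
            break
        centroids = new_centroids
    return _assign(features, centroids), centroids


def ensemble_regime(member_probs):
    """Combine an ensemble of regime classifiers (assumed aggregation rule).

    member_probs: shape (n_members, n_cells, k), each member's class probabilities.
    Returns the regime with the highest mean probability per cell and the
    fraction of members whose own top choice agrees with it.
    """
    member_probs = np.asarray(member_probs, dtype=float)
    regime = member_probs.mean(axis=0).argmax(axis=1)
    votes = member_probs.argmax(axis=2)  # (n_members, n_cells)
    agreement = (votes == regime[None, :]).mean(axis=0)
    return regime, agreement


# Toy usage: two well-separated groups of cells in a 2-D feature space
cells = np.array([[0.0, 0.0], [0.1, 0.0], [10.0, 10.0], [10.1, 10.0]])
labels, _ = kmeans(cells, k=2)

probs = np.array([
    [[0.9, 0.1], [0.2, 0.8]],  # member A
    [[0.8, 0.2], [0.3, 0.7]],  # member B
    [[0.4, 0.6], [0.1, 0.9]],  # member C
])
regime, agreement = ensemble_regime(probs)
print(labels, regime, agreement)
```

Cells where members disagree (low `agreement`) are natural candidates to treat as uncertain when tracking regime shifts, though how the paper handles such cells is not stated in the abstract.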

Exploring Randomly Wired Neural Networks for Climate Model Emulation

Dec 09, 2022

Abstract: Exploring the climate impacts of various anthropogenic emissions scenarios is key to making informed decisions on climate change mitigation and adaptation. State-of-the-art Earth system models can provide detailed insight into these impacts, but they carry a large computational cost for each scenario. This burden has driven recent interest in developing computationally cheap machine learning models for climate model emulation. In this manuscript, we explore the efficacy of randomly wired neural networks for this task. We describe how they can be constructed and compare them with their standard feedforward counterparts using the ClimateBench dataset. Specifically, we replace the serially connected dense layers in multilayer perceptrons, convolutional neural networks, and convolutional long short-term memory networks with randomly wired dense layers, and assess the impact on performance for models with 1 million and 10 million parameters. We find average performance improvements of 4.2% across model complexities and prediction tasks, with improvements of up to 16.4% in some cases. Furthermore, we find no significant difference in prediction speed between networks with standard feedforward dense layers and those with randomly wired layers. These findings indicate that randomly wired neural networks may be suitable direct replacements for traditional dense layers in many standard models.
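A randomly wired dense block can be sketched as a minimal NumPy forward pass. The construction below rests on assumptions not stated in the abstract: the wiring is a random directed acyclic graph in which each edge from node i to node j (i < j) is kept with probability `p`, nodes without predecessors read the block input, each node sums its predecessors' outputs before applying a dense layer with ReLU, and the outputs of nodes without successors are averaged and projected to the output size. The class `RandomlyWiredDense` and its parameters are hypothetical, not the authors' implementation.

```python
import numpy as np


class RandomlyWiredDense:
    """Hypothetical randomly wired replacement for a stack of dense layers (forward pass only)."""

    def __init__(self, in_dim, width, out_dim, n_nodes=6, p=0.4, seed=0):
        rng = np.random.default_rng(seed)
        # Random DAG: keep edge i -> j (i < j) with probability p; index order prevents cycles
        self.preds = [[i for i in range(j) if rng.random() < p] for j in range(n_nodes)]
        # Nodes that feed no other node are sinks; the last node is always one
        fed = {i for ps in self.preds for i in ps}
        self.sinks = [j for j in range(n_nodes) if j not in fed]
        # One dense layer per node (He-style init); nodes with no predecessors read the input
        self.W, self.b = [], []
        for ps in self.preds:
            fan_in = width if ps else in_dim
            self.W.append(rng.normal(0.0, np.sqrt(2.0 / fan_in), size=(fan_in, width)))
            self.b.append(np.zeros(width))
        # Final projection from the averaged sink outputs to the output dimension
        self.W_out = rng.normal(0.0, np.sqrt(2.0 / width), size=(width, out_dim))
        self.b_out = np.zeros(out_dim)

    def __call__(self, x):
        outs = []
        for j, ps in enumerate(self.preds):
            # Sum predecessor outputs (or take the block input), then dense + ReLU
            h = sum(outs[i] for i in ps) if ps else x
            outs.append(np.maximum(0.0, h @ self.W[j] + self.b[j]))
        h = np.mean([outs[j] for j in self.sinks], axis=0)
        return h @ self.W_out + self.b_out


layer = RandomlyWiredDense(in_dim=8, width=16, out_dim=3, seed=1)
y = layer(np.ones((5, 8)))
print(layer.preds, y.shape)
```

Ordering the nodes and only allowing edges from lower to higher index guarantees the graph is acyclic, so a single pass in index order evaluates every node after all of its inputs.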
