Vivek Khimani

TorchFL: A Performant Library for Bootstrapping Federated Learning Experiments

Nov 01, 2022

Abstract: With the increased legislation around data privacy, federated learning (FL) has emerged as a promising technique that allows clients (end users) to collaboratively train deep learning (DL) models without transferring and storing their data on a centralized, third-party server. Despite its theoretical success, FL is yet to be adopted in real-world systems due to the hardware, computing, and infrastructure constraints of the clients' edge and mobile devices. As a result, the FL research community relies heavily on simulated datasets, models, and experiments to validate its theories and findings. We introduce TorchFL, a performant library for (i) bootstrapping FL experiments, (ii) executing them using various hardware accelerators, (iii) profiling their performance, and (iv) logging the overall and agent-specific results on the go. Built on a bottom-up design using PyTorch and Lightning, TorchFL provides ready-to-use abstractions for models, datasets, and FL algorithms, while allowing developers to customize them as and when required.

SplitEasy: A Practical Approach for Training ML models on Mobile Devices in a split second

Nov 09, 2020

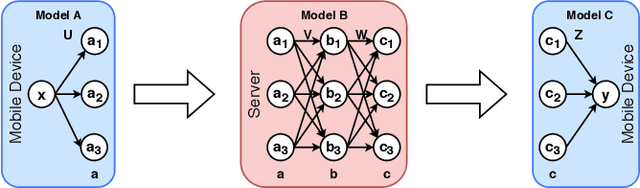

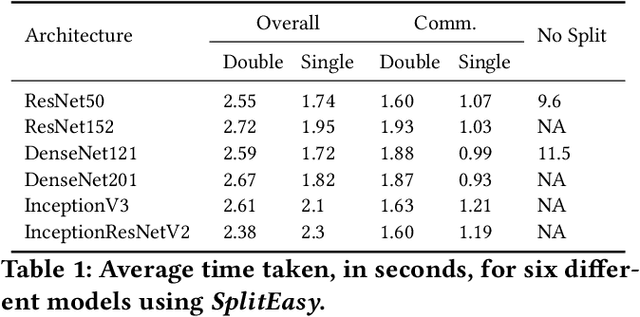

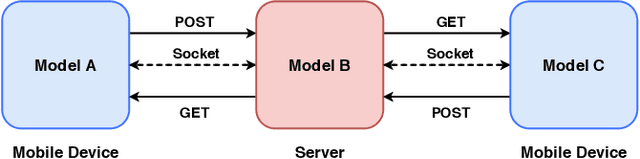

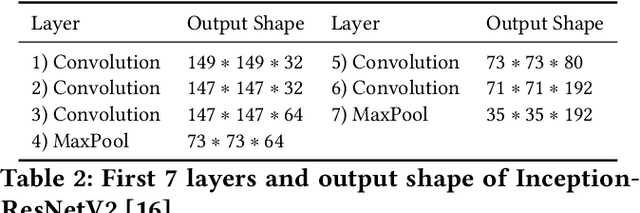

Abstract: Modern mobile devices, although resourceful, cannot train state-of-the-art machine learning models without the assistance of servers, which require access to privacy-sensitive user data. Split learning has recently emerged as a promising technique for training complex deep learning (DL) models on low-powered mobile devices. The core idea behind this technique is to train the sensitive layers of a DL model on the mobile devices while offloading the computationally intensive layers to a server. Although many works have already explored the effectiveness of split learning in simulated settings, a usable toolkit for this purpose does not exist. In this work, we propose SplitEasy, a framework for training ML models on mobile devices using split learning. Using the abstraction provided by SplitEasy, developers can run various DL models under a split learning setting with minimal modifications. We provide a detailed explanation of SplitEasy and perform experiments under varying networks to demonstrate its versatility. We demonstrate how SplitEasy can be used to train state-of-the-art models while incurring nearly constant computational cost on mobile devices.
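The core idea of split learning described in the abstract can be illustrated with a toy sketch. This is not SplitEasy's API: the `Client` and `Server` classes, the scalar one-weight "layers", the learning rate, and the choice to share labels with the server are all assumptions made purely for illustration. The client keeps the first layer and the raw inputs, sends only the cut-layer activation to the server, and receives back the gradient it needs to update its own weight.

```python
# Toy split-learning sketch (hypothetical names, not the SplitEasy API).
# Model: y_hat = w_server * (w_client * x), trained to fit y = 2 * x.

class Client:
    """Holds the first layer; raw inputs x never leave this object."""

    def __init__(self, w=0.5, lr=0.01):
        self.w, self.lr = w, lr
        self.x = None

    def forward(self, x):
        self.x = x
        return self.w * x  # cut-layer activation sent to the server

    def backward(self, grad_h):
        # Chain rule: dL/dw_client = dL/dh * dh/dw_client = grad_h * x
        self.w -= self.lr * grad_h * self.x


class Server:
    """Holds the remaining layer and, in this simplification, the labels."""

    def __init__(self, w=0.5, lr=0.01):
        self.w, self.lr = w, lr

    def train_step(self, h, y):
        y_hat = self.w * h
        grad_out = 2.0 * (y_hat - y)      # d(squared error)/d(y_hat)
        grad_h = grad_out * self.w        # gradient returned to the client
        self.w -= self.lr * grad_out * h  # update the server-side weight
        return (y_hat - y) ** 2, grad_h


def train(client, server, data, epochs=200):
    loss = None
    for _ in range(epochs):
        for x, y in data:
            h = client.forward(x)                   # client -> server
            loss, grad_h = server.train_step(h, y)  # server-side compute
            client.backward(grad_h)                 # server -> client
    return loss


DATA = [(0.5, 1.0), (1.0, 2.0), (1.5, 3.0), (2.0, 4.0)]
client, server = Client(), Server()
final_loss = train(client, server, DATA)
print(f"learned slope: {client.w * server.w:.4f}, final loss: {final_loss:.2e}")
```

In a real deployment the two halves would run on different machines and exchange activations and gradients over the network; here they are ordinary function calls so that the data flow is easy to follow.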
