Ville Vakkuri

GPT versus Humans: Uncovering Ethical Concerns in Conversational Generative AI-empowered Multi-Robot Systems

Nov 21, 2024

Abstract: The emergence of generative artificial intelligence (GAI) and large language models (LLMs) such as ChatGPT has enabled the realization of long-harbored desires in software and robotic development. However, the technology has also brought novel ethical challenges. These challenges are compounded by the application of LLMs in other machine learning systems, such as multi-robot systems. The objective of the study was to examine novel ethical issues arising from the application of LLMs in multi-robot systems. Unfolding ethical issues in GPT agent behavior (deliberation of ethical concerns) were observed, and GPT output was compared with that of human experts. The article also advances a model for the ethical development of multi-robot systems. A qualitative workshop-based method was employed in three workshops for the collection of ethical concerns: two human expert workshops (N=16 participants) and one GPT-agent-based workshop (N=7 agents; two teams of 6 agents plus one judge). Thematic analysis was used to analyze the qualitative data. The results reveal differences between the human-produced and GPT-based ethical concerns. Human experts placed greater emphasis on new themes related to deviance, data privacy, bias and unethical corporate conduct. GPT agents emphasized concerns already present in existing AI ethics guidelines. The study contributes to a growing body of knowledge in context-specific AI ethics and GPT application. It demonstrates the gap between human expert thinking and LLM output, while emphasizing new ethical concerns emerging in novel technology.

Business and ethical concerns in domestic Conversational Generative AI-empowered multi-robot systems

Jan 12, 2024

Abstract: Business and technology are intricately connected through logic and design. Both are equally sensitive to societal changes and may be devastated by scandal. Cooperative multi-robot systems (MRSs) are on the rise, allowing robots of different types and brands to work together in diverse contexts. Generative artificial intelligence has been a dominant topic in recent artificial intelligence (AI) discussions due to its capacity to mimic humans through natural language and the production of media, including deep fakes. In this article, we focus specifically on the conversational aspects of generative AI, and hence use the term Conversational Generative artificial intelligence (CGI). Like MRSs, CGIs have enormous potential to revolutionize processes across sectors and transform the way humans conduct business. From a business perspective, cooperative MRSs alone require ethical examination because of potential conflicts of interest, privacy practices, and safety concerns. MRSs empowered by CGIs demand multi-dimensional and sophisticated methods to uncover imminent ethical pitfalls. This study focuses on ethics in CGI-empowered MRSs while reporting the stages of developing the MORUL model.

The Key Concepts of Ethics of Artificial Intelligence - A Keyword based Systematic Mapping Study

Sep 19, 2018

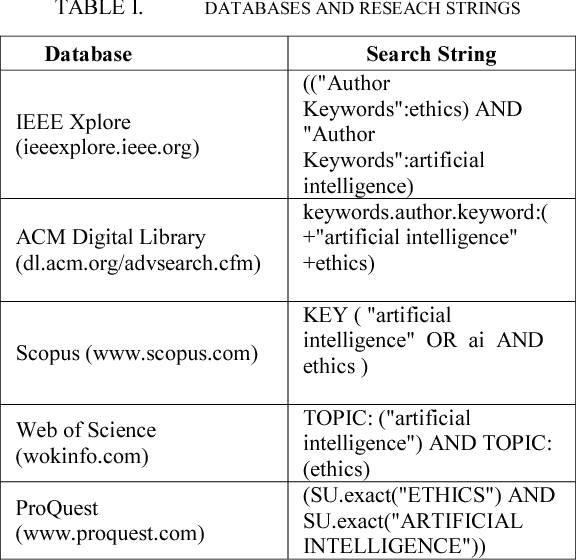

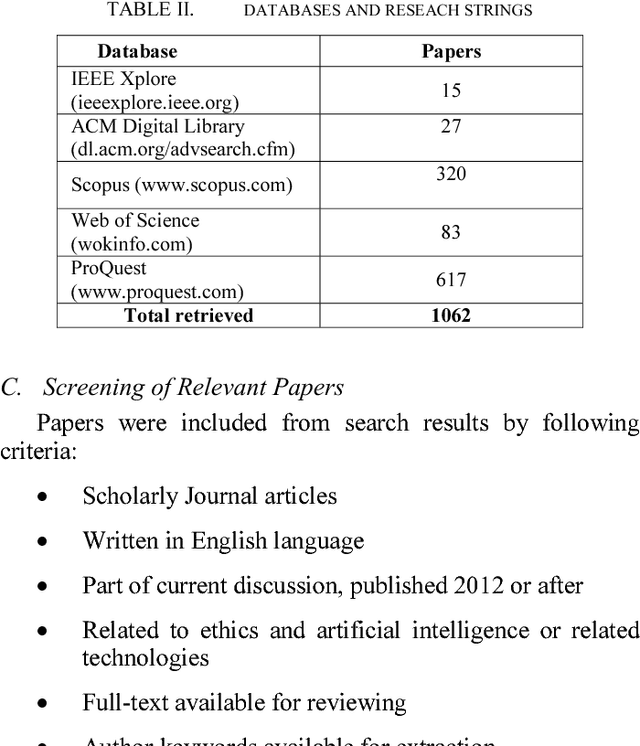

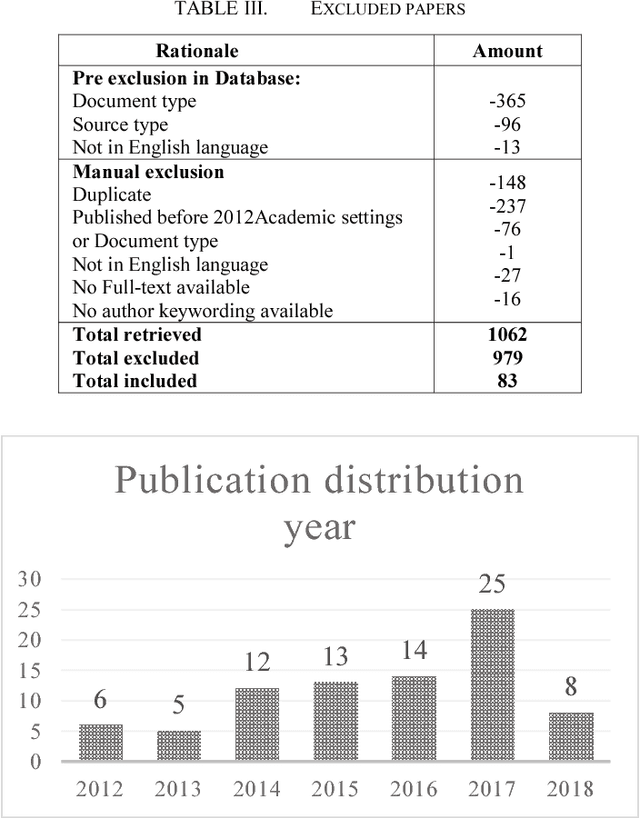

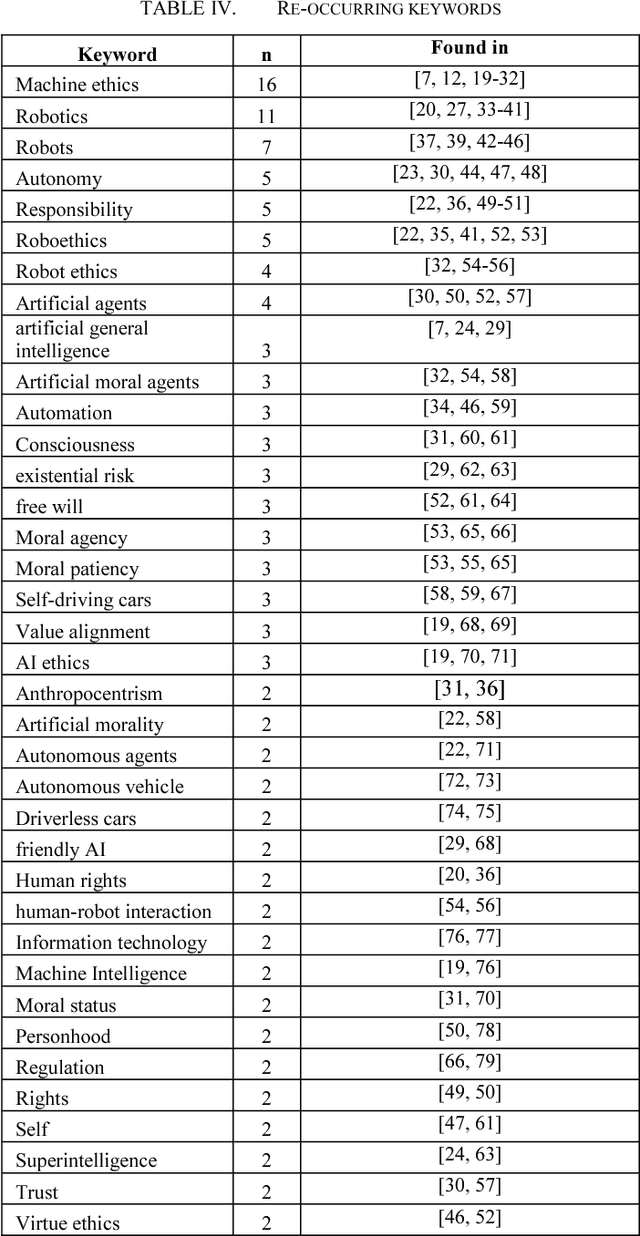

Abstract: The growing influence and decision-making capacities of autonomous systems and artificial intelligence in our lives force us to consider the values embedded in these systems. But how should ethics be implemented in these systems? In this study, philosophical conceptualization is proposed as a framework for forming a practical implementation model for the ethics of AI. As a first step toward conceptualization, the main concepts used in the field need to be identified. A keyword-based Systematic Mapping Study (SMS) of the keywords used in AI and ethics was conducted to help identify, define and compare the main concepts used in current AI ethics discourse. Out of 1062 papers retrieved, the SMS identified 37 recurring keywords in 83 academic papers. We suggest that focusing on finding keywords is the first step in guiding and providing direction for future research in the AI ethics field.

* This is the author's version of the work. The copyright holder's version can be found at http://dx.doi.org/10.1109/ICE.2018.8436265
