Vikas Bishnoi

KARL-Trans-NER: Knowledge Aware Representation Learning for Named Entity Recognition using Transformers

Nov 30, 2021

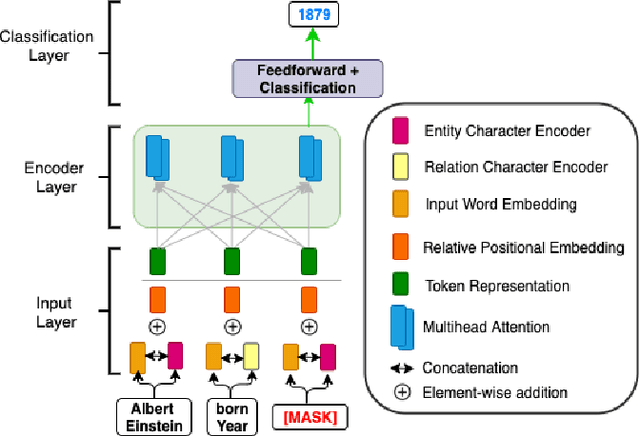

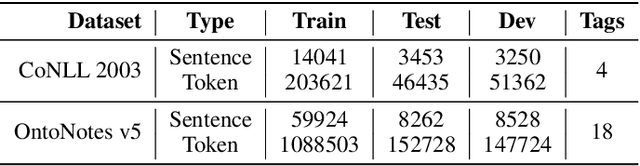

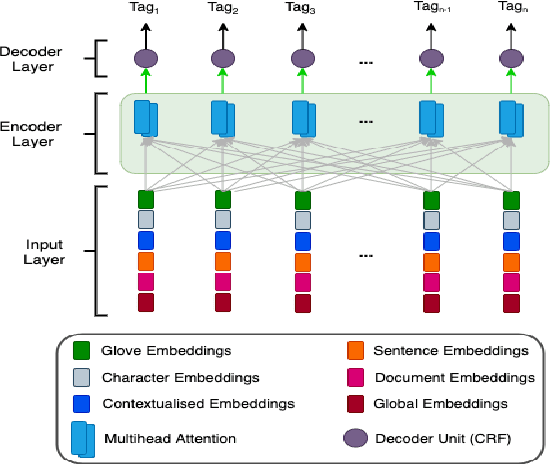

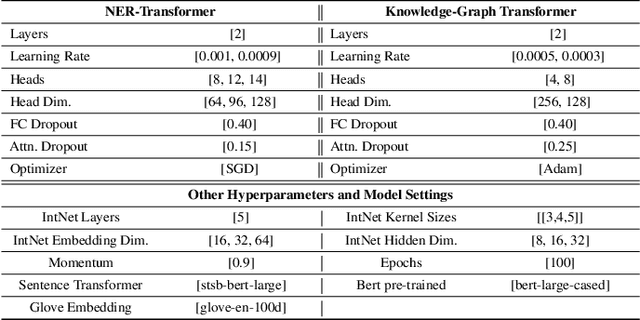

Abstract:The inception of modeling contextual information using models such as BERT, ELMo, and Flair has significantly improved representation learning for words. It has also given SOTA results in almost every NLP task - Machine Translation, Text Summarization and Named Entity Recognition, to name a few. In this work, in addition to using these dominant context-aware representations, we propose a Knowledge Aware Representation Learning (KARL) Network for Named Entity Recognition (NER). We discuss the challenges of using existing methods in incorporating world knowledge for NER and show how our proposed methods could be leveraged to overcome those challenges. KARL is based on a Transformer Encoder that utilizes large knowledge bases represented as fact triplets, converts them to a graph context, and extracts essential entity information residing inside to generate contextualized triplet representation for feature augmentation. Experimental results show that the augmentation done using KARL can considerably boost the performance of our NER system and achieve significantly better results than existing approaches in the literature on three publicly available NER datasets, namely CoNLL 2003, CoNLL++, and OntoNotes v5. We also observe better generalization and application to a real-world setting from KARL on unseen entities.

A Comparative Study of Transformers on Word Sense Disambiguation

Nov 30, 2021

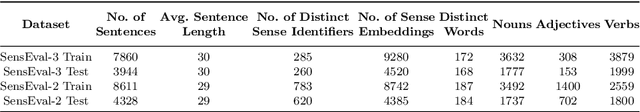

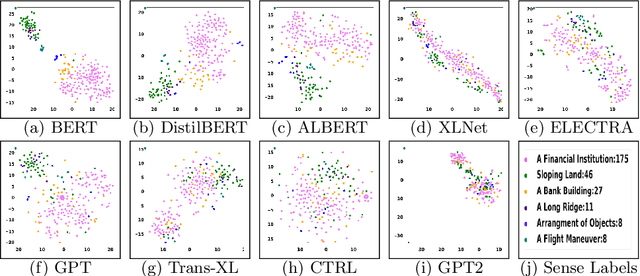

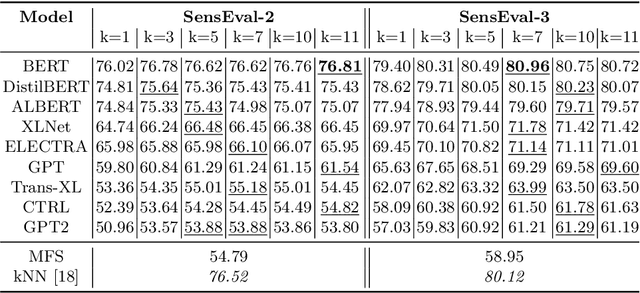

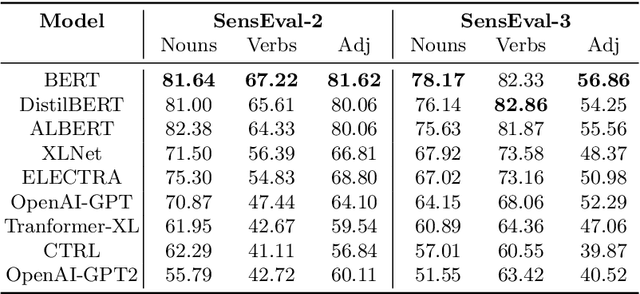

Abstract:Recent years of research in Natural Language Processing (NLP) have witnessed dramatic growth in training large models for generating context-aware language representations. In this regard, numerous NLP systems have leveraged the power of neural network-based architectures to incorporate sense information in embeddings, resulting in Contextualized Word Embeddings (CWEs). Despite this progress, the NLP community has not witnessed any significant work performing a comparative study on the contextualization power of such architectures. This paper presents a comparative study and an extensive analysis of nine widely adopted Transformer models. These models are BERT, CTRL, DistilBERT, OpenAI-GPT, OpenAI-GPT2, Transformer-XL, XLNet, ELECTRA, and ALBERT. We evaluate their contextualization power using two lexical sample Word Sense Disambiguation (WSD) tasks, SensEval-2 and SensEval-3. We adopt a simple yet effective approach to WSD that uses a k-Nearest Neighbor (kNN) classification on CWEs. Experimental results show that the proposed techniques also achieve superior results over the current state-of-the-art on both the WSD tasks

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge