Uday Shankar Shanthamallu

Uncertainty-Matching Graph Neural Networks to Defend Against Poisoning Attacks

Sep 30, 2020

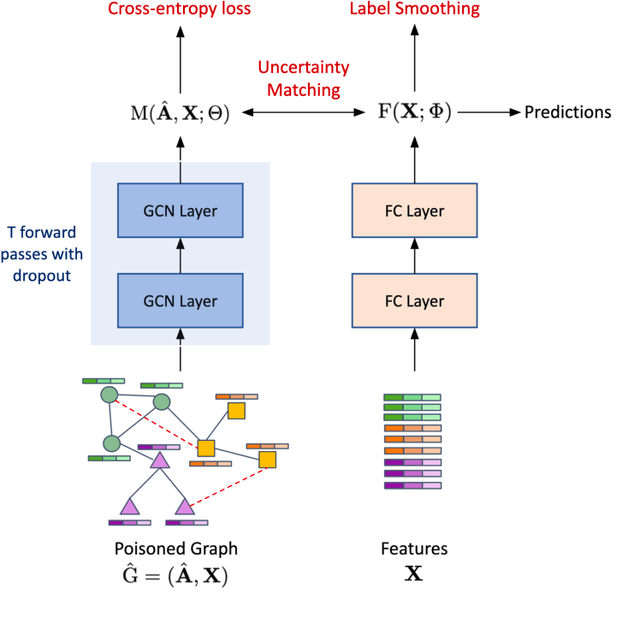

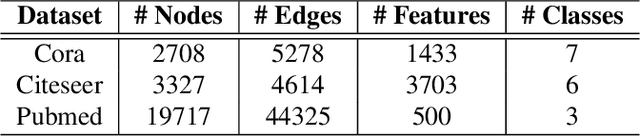

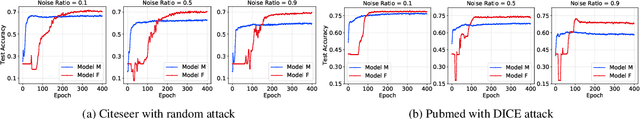

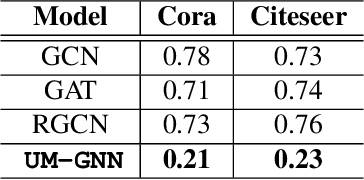

Abstract:Graph Neural Networks (GNNs), a generalization of neural networks to graph-structured data, are often implemented using message passes between entities of a graph. While GNNs are effective for node classification, link prediction and graph classification, they are vulnerable to adversarial attacks, i.e., a small perturbation to the structure can lead to a non-trivial performance degradation. In this work, we propose Uncertainty Matching GNN (UM-GNN), that is aimed at improving the robustness of GNN models, particularly against poisoning attacks to the graph structure, by leveraging epistemic uncertainties from the message passing framework. More specifically, we propose to build a surrogate predictor that does not directly access the graph structure, but systematically extracts reliable knowledge from a standard GNN through a novel uncertainty-matching strategy. Interestingly, this uncoupling makes UM-GNN immune to evasion attacks by design, and achieves significantly improved robustness against poisoning attacks. Using empirical studies with standard benchmarks and a suite of global and target attacks, we demonstrate the effectiveness of UM-GNN, when compared to existing baselines including the state-of-the-art robust GCN.

Improving Robustness of Attention Models on Graphs

Nov 01, 2018

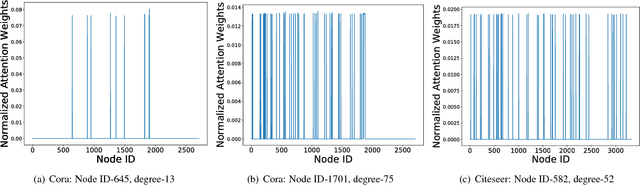

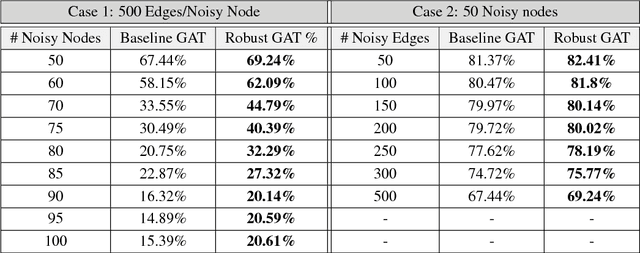

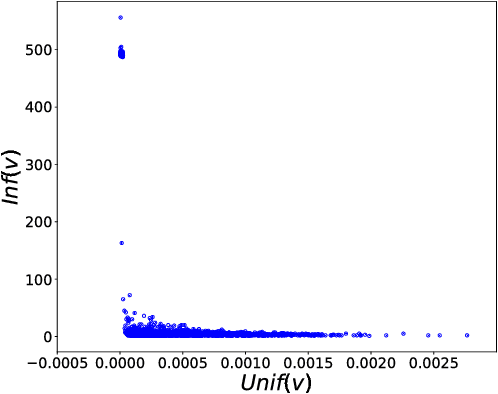

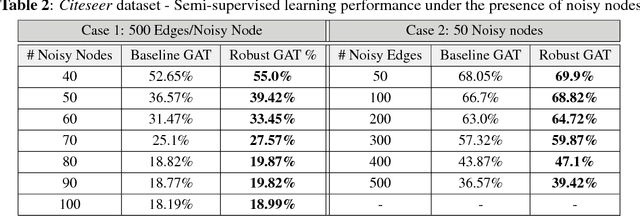

Abstract:Machine learning models that can exploit the inherent structure in data have gained prominence. In particular, there is a surge in deep learning solutions for graph-structured data, due to its wide-spread applicability in several fields. Graph attention networks (GAT), a recent addition to the broad class of feature learning models in graphs, utilizes the attention mechanism to efficiently learn continuous vector representations for semi-supervised learning problems. In this paper, we perform a detailed analysis of GAT models, and present interesting insights into their behavior. In particular, we show that the models are vulnerable to adversaries (rogue nodes) and hence propose novel regularization strategies to improve the robustness of GAT models. Using benchmark datasets, we demonstrate performance improvements on semi-supervised learning, using the proposed robust variant of GAT.

Attention Models with Random Features for Multi-layered Graph Embeddings

Oct 02, 2018

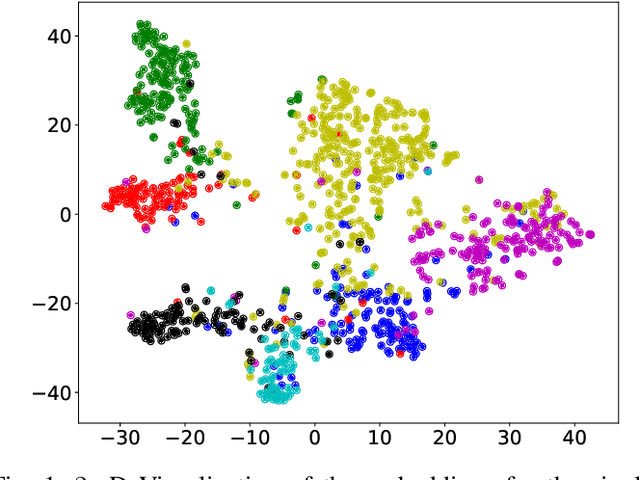

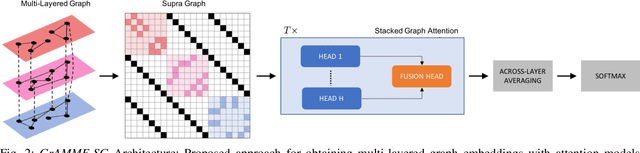

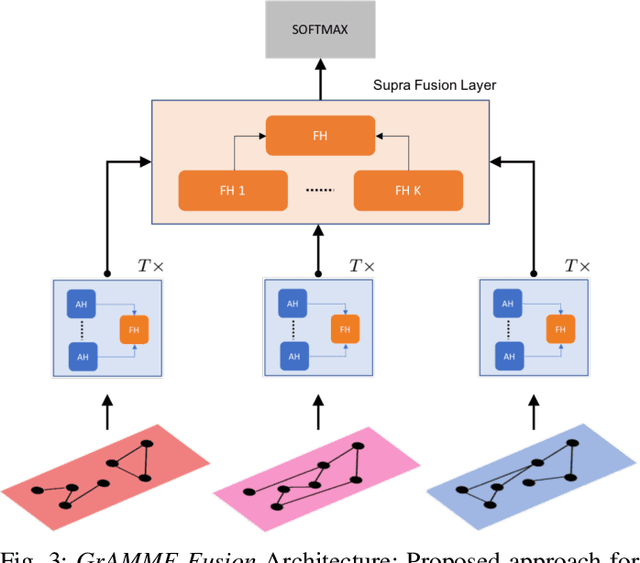

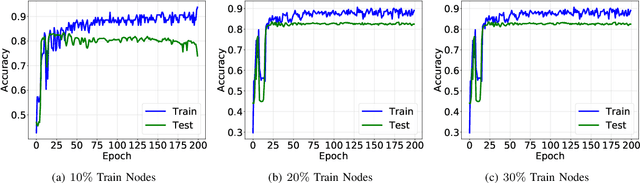

Abstract:Modern data analysis pipelines are becoming increasingly complex due to the presence of multi-view information sources. While graphs are effective in modeling complex relationships, in many scenarios a single graph is rarely sufficient to succinctly represent all interactions, and hence multi-layered graphs have become popular. Though this leads to richer representations, extending solutions from the single-graph case is not straightforward. Consequently, there is a strong need for novel solutions to solve classical problems, such as node classification, in the multi-layered case. In this paper, we consider the problem of semi-supervised learning with multi-layered graphs. Though deep network embeddings, e.g. DeepWalk, are widely adopted for community discovery, we argue that feature learning with random node attributes, using graph neural networks, can be more effective. To this end, we propose to use attention models for effective feature learning, and develop two novel architectures, GrAMME-SG and GrAMME-Fusion, that exploit the inter-layer dependencies for building multi-layered graph embeddings. Using empirical studies on several benchmark datasets, we evaluate the proposed approaches and demonstrate significant performance improvements in comparison to state-of-the-art network embedding strategies. The results also show that using simple random features is an effective choice, even in cases where explicit node attributes are not available.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge