Tobias Schlosser

Attention Modules Improve Modern Image-Level Anomaly Detection: A DifferNet Case Study

Jan 13, 2024Abstract:Within (semi-)automated visual inspection, learning-based approaches for assessing visual defects, including deep neural networks, enable the processing of otherwise small defect patterns in pixel size on high-resolution imagery. The emergence of these often rarely occurring defect patterns explains the general need for labeled data corpora. To not only alleviate this issue but to furthermore advance the current state of the art in unsupervised visual inspection, this contribution proposes a DifferNet-based solution enhanced with attention modules utilizing SENet and CBAM as backbone - AttentDifferNet - to improve the detection and classification capabilities on three different visual inspection and anomaly detection datasets: MVTec AD, InsPLAD-fault, and Semiconductor Wafer. In comparison to the current state of the art, it is shown that AttentDifferNet achieves improved results, which are, in turn, highlighted throughout our quantitative as well as qualitative evaluation, indicated by a general improvement in AUC of 94.34 vs. 92.46, 96.67 vs. 94.69, and 90.20 vs. 88.74%. As our variants to AttentDifferNet show great prospects in the context of currently investigated approaches, a baseline is formulated, emphasizing the importance of attention for anomaly detection.

Attention Modules Improve Image-Level Anomaly Detection for Industrial Inspection: A DifferNet Case Study

Nov 07, 2023

Abstract:Within (semi-)automated visual industrial inspection, learning-based approaches for assessing visual defects, including deep neural networks, enable the processing of otherwise small defect patterns in pixel size on high-resolution imagery. The emergence of these often rarely occurring defect patterns explains the general need for labeled data corpora. To alleviate this issue and advance the current state of the art in unsupervised visual inspection, this work proposes a DifferNet-based solution enhanced with attention modules: AttentDifferNet. It improves image-level detection and classification capabilities on three visual anomaly detection datasets for industrial inspection: InsPLAD-fault, MVTec AD, and Semiconductor Wafer. In comparison to the state of the art, AttentDifferNet achieves improved results, which are, in turn, highlighted throughout our quali-quantitative study. Our quantitative evaluation shows an average improvement - compared to DifferNet - of 1.77 +/- 0.25 percentage points in overall AUROC considering all three datasets, reaching SOTA results in InsPLAD-fault, an industrial inspection in-the-wild dataset. As our variants to AttentDifferNet show great prospects in the context of currently investigated approaches, a baseline is formulated, emphasizing the importance of attention for industrial anomaly detection both in the wild and in controlled environments.

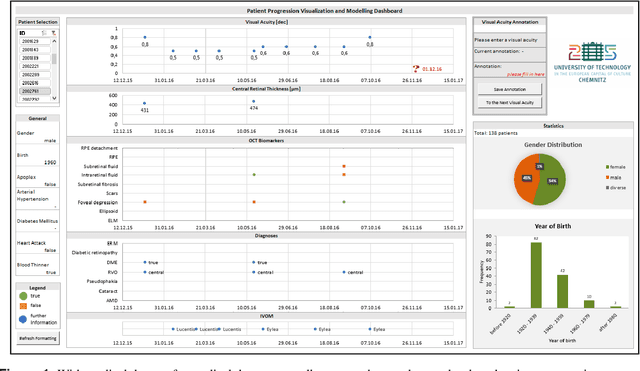

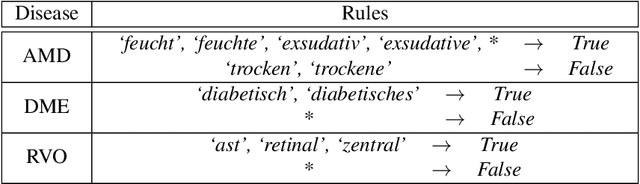

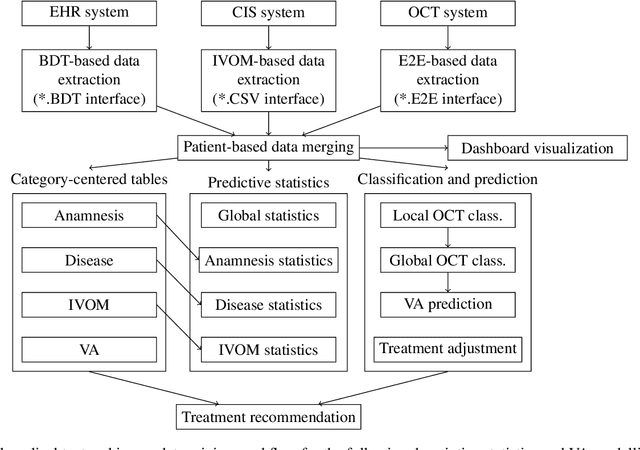

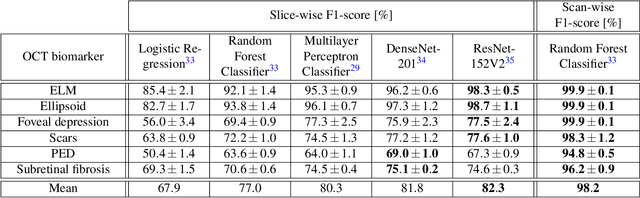

Visual Acuity Prediction on Real-Life Patient Data Using a Machine Learning Based Multistage System

Apr 25, 2022

Abstract:In ophthalmology, intravitreal operative medication therapy (IVOM) is widespread treatment for diseases such as the age-related macular degeneration (AMD), the diabetic macular edema (DME), as well as the retinal vein occlusion (RVO). However, in real-world settings, patients often suffer from loss of vision on time scales of years despite therapy, whereas the prediction of the visual acuity (VA) and the earliest possible detection of deterioration under real-life conditions is challenging due to heterogeneous and incomplete data. In this contribution, we present a workflow for the development of a research-compatible data corpus fusing different IT systems of the department of ophthalmology of a German maximum care hospital. The extensive data corpus allows predictive statements of the expected progression of a patient and his or her VA in each of the three diseases. Within our proposed multistage system, we classify the VA progression into the three groups of therapy "winners", "stabilizers", and "losers" (WSL scheme). Our OCT biomarker classification using an ensemble of deep neural networks results in a classification accuracy (F1-score) of over 98 %, enabling us to complete incomplete OCT documentations while allowing us to exploit them for a more precise VA modelling process. Our VA prediction requires at least four VA examinations and optionally OCT biomarkers from the same time period to predict the VA progression within a forecasted time frame. While achieving a prediction accuracy of up to 69 % (macro average F1-score) when considering all three WSL-based progression groups, this corresponds to an improvement by 11 % in comparison to our ophthalmic expertise (58 %).

Improving Automated Visual Fault Detection by Combining a Biologically Plausible Model of Visual Attention with Deep Learning

Feb 13, 2021

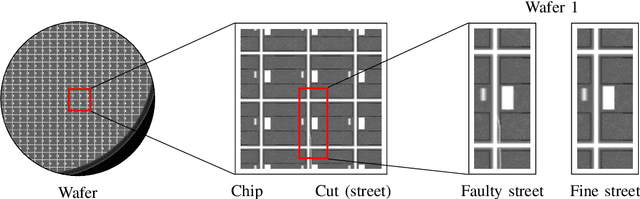

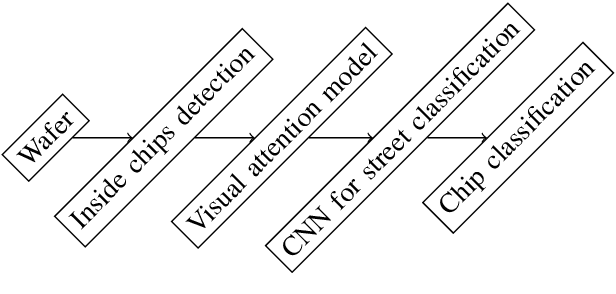

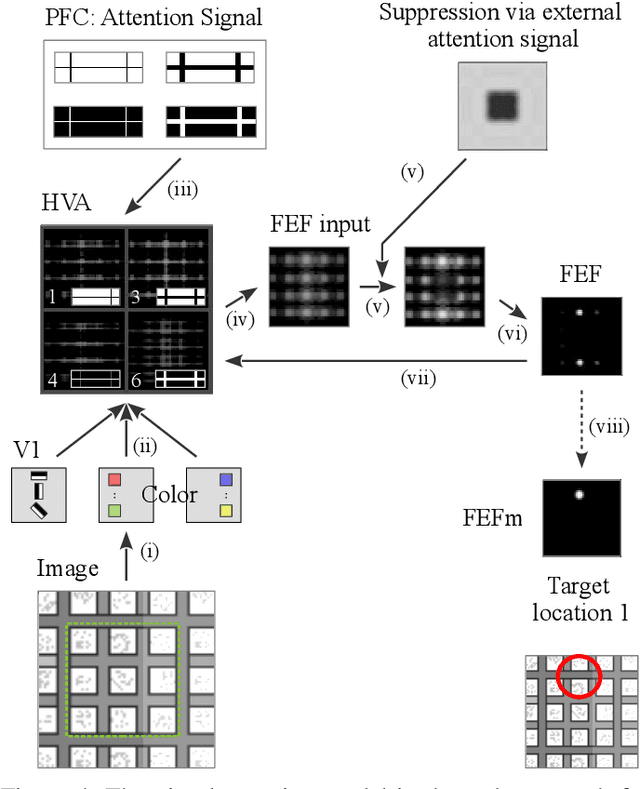

Abstract:It is a long-term goal to transfer biological processing principles as well as the power of human recognition into machine vision and engineering systems. One of such principles is visual attention, a smart human concept which focuses processing on a part of a scene. In this contribution, we utilize attention to improve the automatic detection of defect patterns for wafers within the domain of semiconductor manufacturing. Previous works in the domain have often utilized classical machine learning approaches such as KNNs, SVMs, or MLPs, while a few have already used modern approaches like deep neural networks (DNNs). However, one problem in the domain is that the faults are often very small and have to be detected within a larger size of the chip or even the wafer. Therefore, small structures in the size of pixels have to be detected in a vast amount of image data. One interesting principle of the human brain for solving this problem is visual attention. Hence, we employ here a biologically plausible model of visual attention for automatic visual inspection. We propose a hybrid system of visual attention and a deep neural network. As demonstrated, our system achieves among other decisive advantages an improvement in accuracy from 81% to 92%, and an increase in accuracy for detecting faults from 67% to 88%. Hence, the error rates are reduced from 19% to 8%, and notably from 33% to 12% for detecting a fault in a chip. These results show that attention can greatly improve the performance of visual inspection systems. Furthermore, we conduct a broad evaluation, identifying specific advantages of the biological attention model in this application, and benchmarks standard deep learning approaches as an alternative with and without attention. This work is an extended arXiv version of the original conference article published in "IECON 2020", which has been extended regarding visual attention.

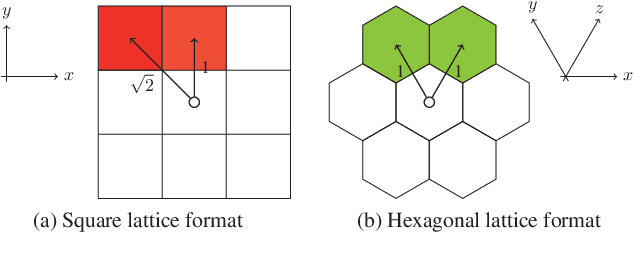

Biologically Inspired Hexagonal Deep Learning for Hexagonal Image Generation

Jan 01, 2021

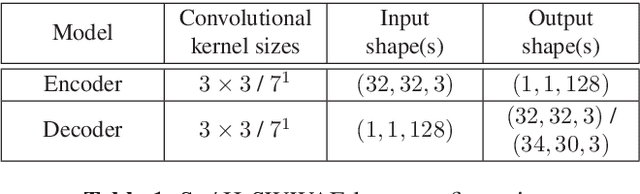

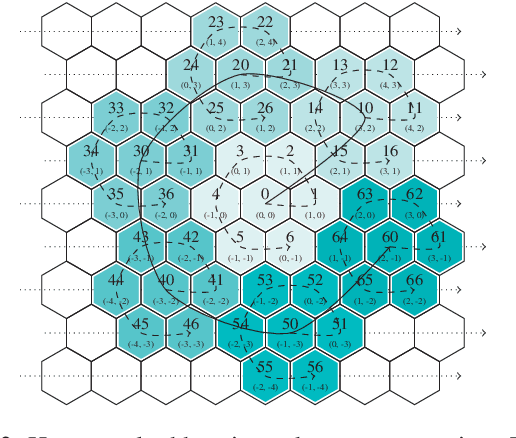

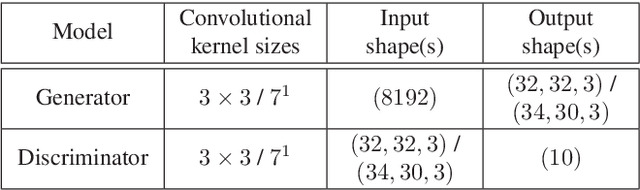

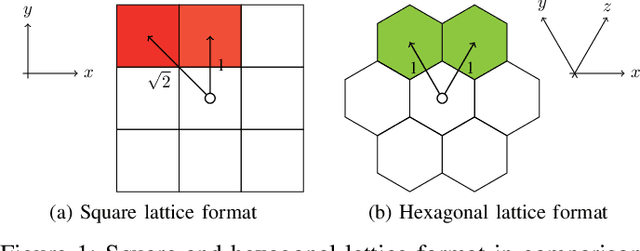

Abstract:Whereas conventional state-of-the-art image processing systems of recording and output devices almost exclusively utilize square arranged methods, biological models, however, suggest an alternative, evolutionarily-based structure. Inspired by the human visual perception system, hexagonal image processing in the context of machine learning offers a number of key advantages that can benefit both researchers and users alike. The hexagonal deep learning framework Hexnet leveraged in this contribution serves therefore the generation of hexagonal images by utilizing hexagonal deep neural networks (H-DNN). As the results of our created test environment show, the proposed models can surpass current approaches of conventional image generation. While resulting in a reduction of the models' complexity in the form of trainable parameters, they furthermore allow an increase of test rates in comparison to their square counterparts.

Hexagonal Image Processing in the Context of Machine Learning: Conception of a Biologically Inspired Hexagonal Deep Learning Framework

Dec 31, 2019

Abstract:Inspired by the human visual perception system, hexagonal image processing in the context of machine learning deals with the development of image processing systems that combine the advantages of evolutionary motivated structures based on biological models. While conventional state of the art image processing systems of recording and output devices almost exclusively utilize square arranged methods, their hexagonal counterparts offer a number of key advantages that can benefit both researchers and users. This contribution serves as a general application-oriented approach with the synthesis of the therefor designed hexagonal image processing framework, called Hexnet, the processing steps of hexagonal image transformation, and dependent methods. The results of our created test environment show that the realized framework surpasses current approaches of hexagonal image processing systems, while hexagonal artificial neural networks can benefit from the implemented hexagonal architecture. As hexagonal lattice format based deep neural networks, also called H-DNN, can be compared to their square counterpart by transforming classical square lattice based data sets into their hexagonal representation, they can also result in a reduction of trainable parameters as well as result in increased training and test rates.

A Novel Visual Fault Detection and Classification System for Semiconductor Manufacturing Using Stacked Hybrid Convolutional Neural Networks

Dec 31, 2019

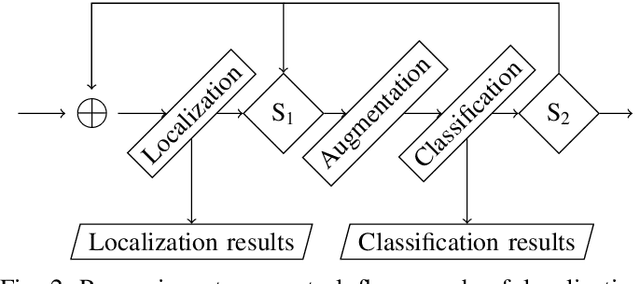

Abstract:Automated visual inspection in the semiconductor industry aims to detect and classify manufacturing defects utilizing modern image processing techniques. While an earliest possible detection of defect patterns allows quality control and automation of manufacturing chains, manufacturers benefit from an increased yield and reduced manufacturing costs. Since classical image processing systems are limited in their ability to detect novel defect patterns, and machine learning approaches often involve a tremendous amount of computational effort, this contribution introduces a novel deep neural network-based hybrid approach. Unlike classical deep neural networks, a multi-stage system allows the detection and classification of the finest structures in pixel size within high-resolution imagery. Consisting of stacked hybrid convolutional neural networks (SH-CNN) and inspired by current approaches of visual attention, the realized system draws the focus over the level of detail from its structures to more task-relevant areas of interest. The results of our test environment show that the SH-CNN outperforms current approaches of learning-based automated visual inspection, whereas a distinction depending on the level of detail enables the elimination of defect patterns in earlier stages of the manufacturing process.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge