Tianyunxi Wei

Saliency-guided and Patch-based Mixup for Long-tailed Skin Cancer Image Classification

Jun 16, 2024

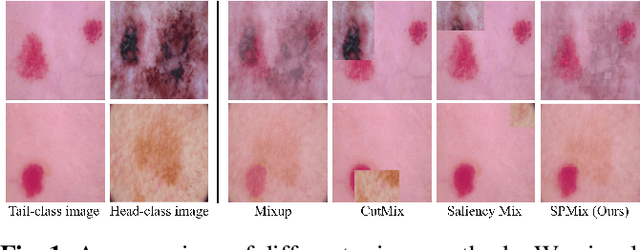

Abstract:Medical image datasets often exhibit long-tailed distributions due to the inherent challenges in medical data collection and annotation. In long-tailed contexts, some common disease categories account for most of the data, while only a few samples are available in the rare disease categories, resulting in poor performance of deep learning methods. To address this issue, previous approaches have employed class re-sampling or re-weighting techniques, which often encounter challenges such as overfitting to tail classes or difficulties in optimization during training. In this work, we propose a novel approach, namely \textbf{S}aliency-guided and \textbf{P}atch-based \textbf{Mix}up (SPMix) for long-tailed skin cancer image classification. Specifically, given a tail-class image and a head-class image, we generate a new tail-class image by mixing them under the guidance of saliency mapping, which allows for preserving and augmenting the discriminative features of the tail classes without any interference of the head-class features. Extensive experiments are conducted on the ISIC2018 dataset, demonstrating the superiority of SPMix over existing state-of-the-art methods.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge