Ti Zhou

Energy-Efficient Computation with DVFS using Deep Reinforcement Learning for Multi-Task Systems in Edge Computing

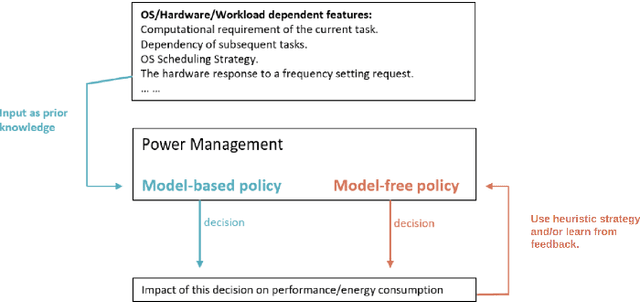

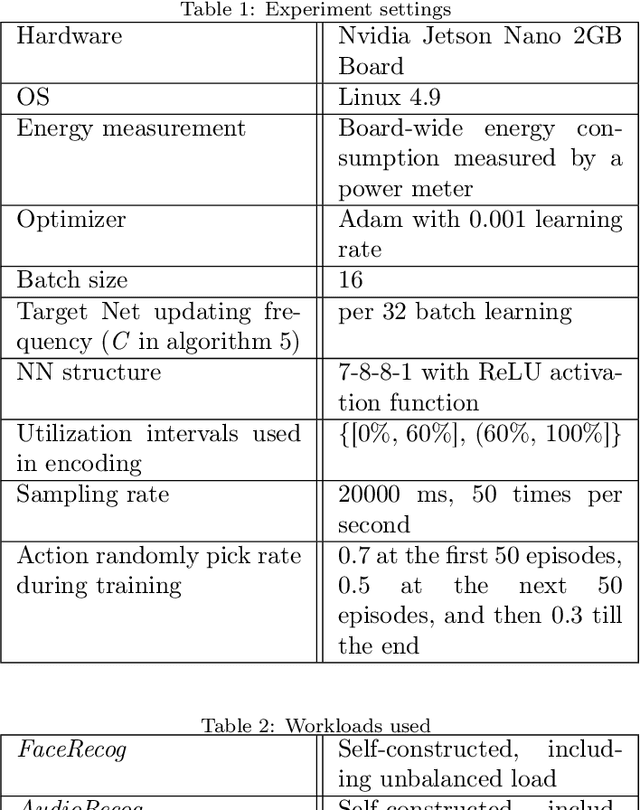

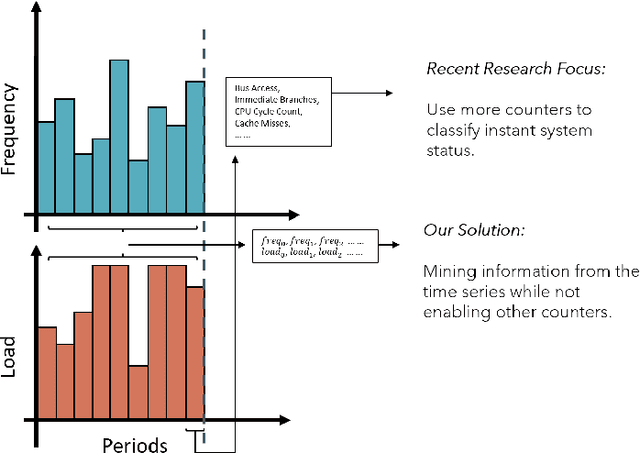

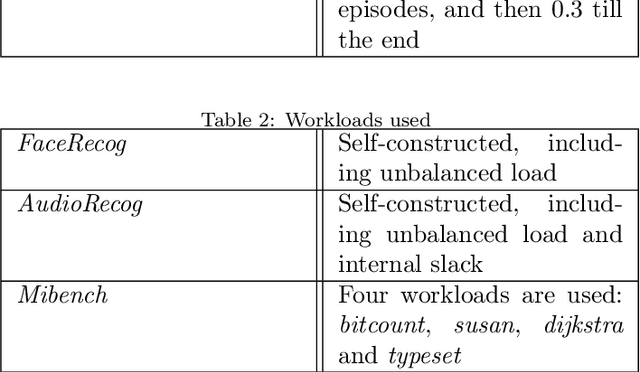

Sep 28, 2024Abstract:Periodic soft real-time systems have broad applications in many areas, such as IoT. Finding an optimal energy-efficient policy that is adaptable to underlying edge devices while meeting deadlines for tasks has always been challenging. This research studies generalized systems with multi-task, multi-deadline scenarios with reinforcement learning-based DVFS for energy saving. This work addresses the limitation of previous work that models a periodic system as a single task and single-deadline scenario, which is too simplified to cope with complex situations. The method encodes time series information in the Linux kernel into information that is easy to use for reinforcement learning, allowing the system to generate DVFS policies to adapt system patterns based on the general workload. For encoding, we present two different methods for comparison. Both methods use only one performance counter: system utilization and the kernel only needs minimal information from the userspace. Our method is implemented on Jetson Nano Board (2GB) and is tested with three fixed multitask workloads, which are three, five, and eight tasks in the workload, respectively. For randomness and generalization, we also designed a random workload generator to build different multitask workloads to test. Based on the test results, our method could save 3%-10% power compared to Linux built-in governors.

CPU frequency scheduling of real-time applications on embedded devices with temporal encoding-based deep reinforcement learning

Sep 07, 2023

Abstract:Small devices are frequently used in IoT and smart-city applications to perform periodic dedicated tasks with soft deadlines. This work focuses on developing methods to derive efficient power-management methods for periodic tasks on small devices. We first study the limitations of the existing Linux built-in methods used in small devices. We illustrate three typical workload/system patterns that are challenging to manage with Linux's built-in solutions. We develop a reinforcement-learning-based technique with temporal encoding to derive an effective DVFS governor even with the presence of the three system patterns. The derived governor uses only one performance counter, the same as the built-in Linux mechanism, and does not require an explicit task model for the workload. We implemented a prototype system on the Nvidia Jetson Nano Board and experimented with it with six applications, including two self-designed and four benchmark applications. Under different deadline constraints, our approach can quickly derive a DVFS governor that can adapt to performance requirements and outperform the built-in Linux approach in energy saving. On Mibench workloads, with performance slack ranging from 0.04 s to 0.4 s, the proposed method can save 3% - 11% more energy compared to Ondemand. AudioReg and FaceReg applications tested have 5%- 14% energy-saving improvement. We have open-sourced the implementation of our in-kernel quantized neural network engine. The codebase can be found at: https://github.com/coladog/tinyagent.

* Accepted to Journal of Systems Architecture

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge