Thomas Pinder

Gaussian Processes on Hypergraphs

Jun 03, 2021

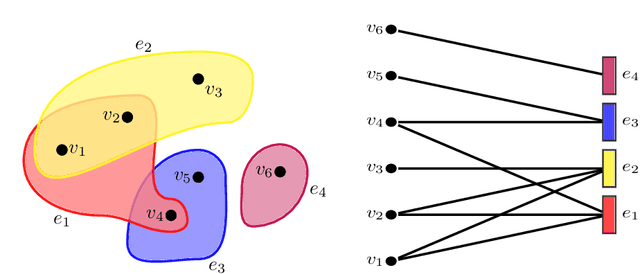

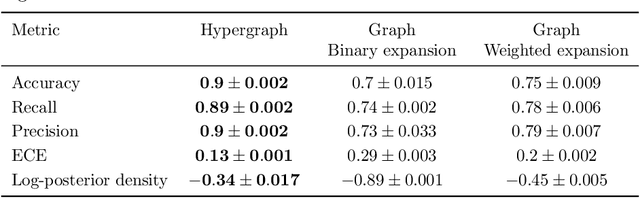

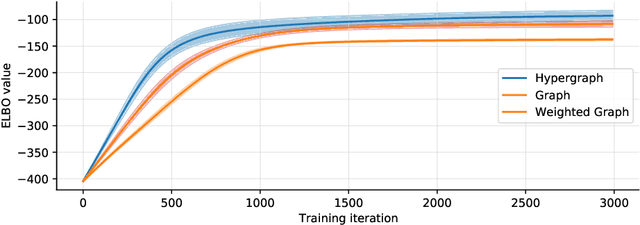

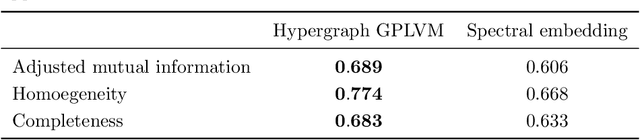

Abstract:We derive a Matern Gaussian process (GP) on the vertices of a hypergraph. This enables estimation of regression models of observed or latent values associated with the vertices, in which the correlation and uncertainty estimates are informed by the hypergraph structure. We further present a framework for embedding the vertices of a hypergraph into a latent space using the hypergraph GP. Finally, we provide a scheme for identifying a small number of representative inducing vertices that enables scalable inference through sparse GPs. We demonstrate the utility of our framework on three challenging real-world problems that concern multi-class classification for the political party affiliation of legislators on the basis of voting behaviour, probabilistic matrix factorisation of movie reviews, and embedding a hypergraph of animals into a low-dimensional latent space.

Stein Variational Gaussian Processes

Sep 25, 2020

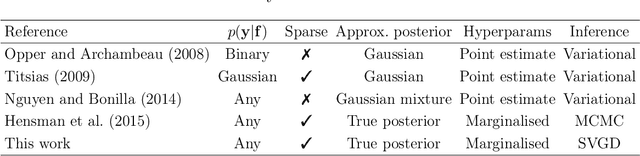

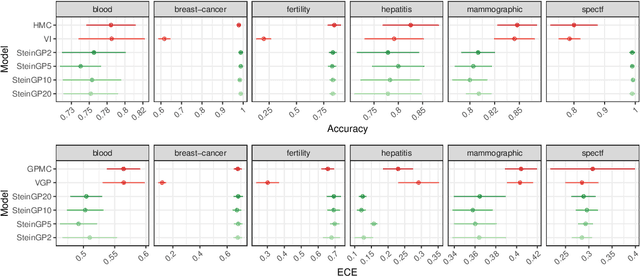

Abstract:We show how to use Stein variational gradient descent (SVGD) to carry out inference in Gaussian process (GP) models with non-Gaussian likelihoods and large data volumes. Markov chain Monte Carlo (MCMC) is extremely computationally intensive for these situations, but the parametric assumptions required for efficient variational inference (VI) result in incorrect inference when they encounter the multi-modal posterior distributions that are common for such models. SVGD provides a non-parametric alternative to variational inference which is substantially faster than MCMC but unhindered by parametric assumptions. We prove that for GP models with Lipschitz gradients the SVGD algorithm monotonically decreases the Kullback-Leibler divergence from the sampling distribution to the true posterior. Our method is demonstrated on benchmark problems in both regression and classification, and a real air quality example with 11440 spatiotemporal observations, showing substantial performance improvements over MCMC and VI.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge