Thanh Nguyen Canh

SafeGuard ASF: SR Agentic Humanoid Robot System for Autonomous Industrial Safety

Mar 26, 2026

Abstract: The rise of unmanned "dark factories" operating without human presence demands autonomous safety systems capable of detecting and responding to multiple hazard types. We present SafeGuard ASF (Agentic Security Fleet), a comprehensive framework deploying humanoid robots for autonomous hazard detection in industrial environments. Our system integrates multi-modal perception (RGB-D imaging), a ReAct-based agentic reasoning framework, and learned locomotion policies on the Unitree G1 humanoid platform. We address three critical hazard scenarios: fire and smoke detection, abnormal temperature monitoring in pipelines, and intruder detection in restricted zones. Our perception pipeline achieves 94.2% mAP for fire or smoke detection with 127 ms latency. We train multiple locomotion policies, including dance motion tracking and velocity control, using Unitree RL Lab with PPO, demonstrating stable convergence within 80,000 training iterations. We validate our system in both simulation and real-world environments, demonstrating autonomous patrol, human detection with visual perception, and obstacle avoidance capabilities. The proposed ToolOrchestra action framework enables structured decision-making through perception, reasoning, and actuation tools.

Hybrid TD3: Overestimation Bias Analysis and Stable Policy Optimization for Hybrid Action Space

Mar 01, 2026

Abstract: Reinforcement learning in discrete-continuous hybrid action spaces presents fundamental challenges for robotic manipulation, where high-level task decisions and low-level joint-space execution must be jointly optimized. Existing approaches either discretize continuous components or relax discrete choices into continuous approximations, which suffer from scalability limitations and training instability in high-dimensional action spaces and under domain randomization. In this paper, we propose Hybrid TD3, an extension of Twin Delayed Deep Deterministic Policy Gradient (TD3) that natively handles parameterized hybrid action spaces in a principled manner. We conduct a rigorous theoretical analysis of overestimation bias in hybrid action settings, deriving formal bounds under twin-critic architectures and establishing a complete bias ordering across five algorithmic variants. Building on this analysis, we introduce a weighted clipped Q-learning target that marginalizes over the discrete action distribution, achieving equivalent bias reduction to standard clipped minimization while improving policy smoothness. Experimental results demonstrate that Hybrid TD3 achieves superior training stability and competitive performance against state-of-the-art hybrid action baselines
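The abstract does not give the formula for the weighted clipped target, so the following is only one plausible reading, sketched for a single transition: take the element-wise minimum of the two target critics for each discrete action, then average those clipped values under the target policy's discrete-action probabilities. The function name, argument names, and the default `gamma` are assumptions made for illustration, not the authors' implementation.

```python
def weighted_clipped_target(reward, done, probs_next, q1_next, q2_next, gamma=0.99):
    """One-step TD target for a single transition (illustrative sketch).

    probs_next: target-policy probabilities over the K discrete actions at s'
    q1_next, q2_next: the two target critics' values at s' for each discrete
        action, each evaluated with that action's continuous parameters
    """
    # Clipped double-Q: keep the more pessimistic critic per discrete action.
    clipped = [min(a, b) for a, b in zip(q1_next, q2_next)]
    # Marginalize over the discrete action distribution instead of taking a max.
    expected = sum(p * q for p, q in zip(probs_next, clipped))
    # No bootstrapping past a terminal state.
    return reward + gamma * (0.0 if done else 1.0) * expected


target = weighted_clipped_target(
    reward=1.0, done=False,
    probs_next=[0.25, 0.75], q1_next=[2.0, 4.0], q2_next=[3.0, 2.0],
    gamma=0.5,
)
print(target)
```

Averaging under the policy rather than maximizing over discrete actions is what would make the target vary smoothly with the discrete-action probabilities, which is consistent with the smoothness claim in the abstract.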

Human-to-Robot Interaction: Learning from Video Demonstration for Robot Imitation

Feb 22, 2026

Abstract: Learning from Demonstration (LfD) offers a promising paradigm for robot skill acquisition. Recent approaches attempt to extract manipulation commands directly from video demonstrations, yet face two critical challenges: (1) general video captioning models prioritize global scene features over task-relevant objects, producing descriptions unsuitable for precise robotic execution, and (2) end-to-end architectures coupling visual understanding with policy learning require extensive paired datasets and struggle to generalize across objects and scenarios. To address these limitations, we propose a novel "Human-to-Robot" imitation learning pipeline that enables robots to acquire manipulation skills directly from unstructured video demonstrations, inspired by the human ability to learn by watching and imitating. Our key innovation is a modular framework that decouples the learning process into two distinct stages: (1) Video Understanding, which combines Temporal Shift Modules (TSM) with Vision-Language Models (VLMs) to extract actions and identify interacted objects, and (2) Robot Imitation, which employs TD3-based deep reinforcement learning to execute the demonstrated manipulations. We validated our approach in PyBullet simulation environments with a UR5e manipulator and in a real-world experiment with a UF850 manipulator across four fundamental actions: reach, pick, move, and put. For video understanding, our method achieves 89.97% action classification accuracy and BLEU-4 scores of 0.351 on standard objects and 0.265 on novel objects, representing improvements of 76.4% and 128.4% over the best baseline, respectively. For robot manipulation, our framework achieves an average success rate of 87.5% across all actions, with 100% success on reaching tasks and up to 90% on complex pick-and-place operations. The project website is available at https://thanhnguyencanh.github.io/LfD4hri.

IRAF-SLAM: An Illumination-Robust and Adaptive Feature-Culling Front-End for Visual SLAM in Challenging Environments

Jul 10, 2025

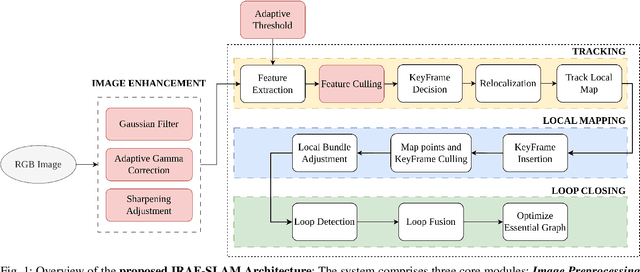

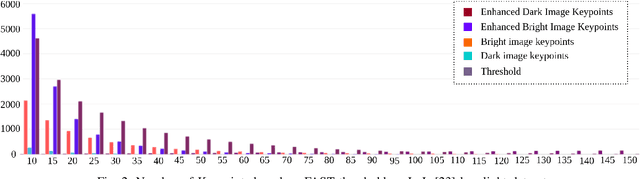

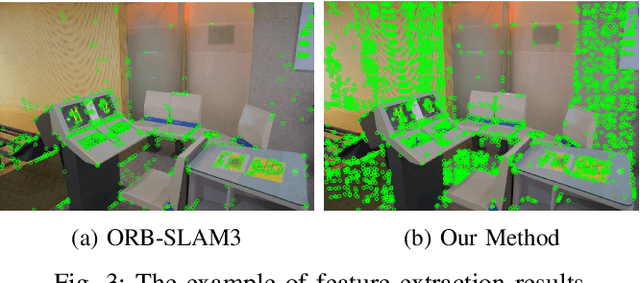

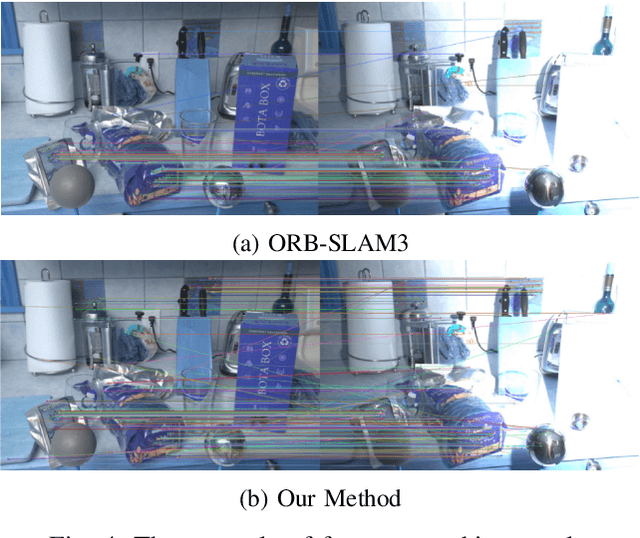

Abstract: Robust Visual SLAM (vSLAM) is essential for autonomous systems operating in real-world environments, where challenges such as dynamic objects, low texture, and, critically, varying illumination conditions often degrade performance. Existing feature-based SLAM systems rely on fixed front-end parameters, making them vulnerable to sudden lighting changes and unstable feature tracking. To address these challenges, we propose "IRAF-SLAM", an Illumination-Robust and Adaptive Feature-Culling front-end designed to enhance vSLAM resilience in complex and challenging environments. Our approach introduces: (1) an image enhancement scheme to preprocess and adjust image quality under varying lighting conditions; (2) an adaptive feature extraction mechanism that dynamically adjusts detection sensitivity based on image entropy, pixel intensity, and gradient analysis; and (3) a feature culling strategy that filters out unreliable feature points using density distribution analysis and a lighting impact factor. Comprehensive evaluations on the TUM-VI and European Robotics Challenge (EuRoC) datasets demonstrate that IRAF-SLAM significantly reduces tracking failures and achieves superior trajectory accuracy compared to state-of-the-art vSLAM methods under adverse illumination conditions. These results highlight the effectiveness of adaptive front-end strategies in improving vSLAM robustness without incurring significant computational overhead. The implementation of IRAF-SLAM is publicly available at https://thanhnguyencanh.github.io/IRAF-SLAM/.
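To make the idea of entropy-driven detection sensitivity concrete, here is a minimal sketch that computes the Shannon entropy of a grayscale image and maps it linearly onto a corner-detector threshold. It covers only the entropy cue; the abstract also names pixel intensity and gradient analysis, which are left out. The linear mapping, the names `adaptive_threshold`, `t_min`, `t_max`, and their default values are all hypothetical choices for illustration, not the rule used in IRAF-SLAM.

```python
import math
from collections import Counter


def image_entropy(pixels):
    """Shannon entropy in bits of 8-bit grayscale intensities (flat iterable)."""
    counts = Counter(pixels)
    n = sum(counts.values())
    return -sum((c / n) * math.log2(c / n) for c in counts.values())


def adaptive_threshold(pixels, t_min=5, t_max=20, max_entropy=8.0):
    """Map image entropy onto a detector threshold (hypothetical linear rule).

    Low-entropy (flat or poorly lit) images get a lower threshold so the
    detector becomes more sensitive; information-rich images get a higher one.
    """
    ratio = min(max(image_entropy(pixels) / max_entropy, 0.0), 1.0)
    return round(t_min + ratio * (t_max - t_min))


flat_image = [128] * 64           # a single gray level
two_level_image = [0, 255] * 32   # two equally likely gray levels
print(adaptive_threshold(flat_image), adaptive_threshold(two_level_image))
```

In a real front-end the resulting value would be passed to the feature detector for each frame, which is the sense in which the parameters stop being fixed.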

ESRPCB: an Edge guided Super-Resolution model and Ensemble learning for tiny Printed Circuit Board Defect detection

Jun 16, 2025

Abstract: Printed Circuit Boards (PCBs) are critical components in modern electronics, which require stringent quality control to ensure proper functionality. However, the detection of defects in small-scale PCB images poses significant challenges as a result of the low resolution of the captured images, leading to potential confusion between defects and noise. To overcome these challenges, this paper proposes a novel framework, named ESRPCB (edge-guided super-resolution for PCB defect detection), which combines edge-guided super-resolution with ensemble learning to enhance PCB defect detection. The framework leverages the edge information to guide the EDSR (Enhanced Deep Super-Resolution) model with a novel ResCat (Residual Concatenation) structure, enabling it to reconstruct high-resolution images from small PCB inputs. By incorporating edge features, the super-resolution process preserves critical structural details, ensuring that tiny defects remain distinguishable in the enhanced image. Following this, a multi-modal defect detection model employs ensemble learning to analyze the super-resolved

Quadrotor Trajectory Tracking Using Linear and Nonlinear Model Predictive Control

Nov 11, 2024

Abstract: Accurate trajectory tracking is an essential characteristic for the safe navigation of a quadrotor in cluttered or disturbed environments. In this paper, we present in detail two state-of-the-art model-based control frameworks for trajectory tracking: the Linear Model Predictive Controller (LMPC) and the Nonlinear Model Predictive Controller (NMPC). Additionally, the kinematic and dynamic models of the quadrotor are comprehensively described. Finally, a simulation system is implemented to verify feasibility, demonstrating the effectiveness of both controllers.
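For readers unfamiliar with the two controllers, the generic textbook form of a trajectory-tracking MPC problem is sketched below. The abstract does not state the paper's cost or constraints, so every symbol here is an assumption: horizon $N$, state $x_k$, input $u_k$, reference $x_k^{\mathrm{ref}}$, weights $Q$, $R$, $P$, and input bounds $u_{\min}$, $u_{\max}$. The only difference between the two variants in this form is the prediction model.

```latex
\begin{aligned}
\min_{u_0,\dots,u_{N-1}} \quad
  & \sum_{k=0}^{N-1} \left( \lVert x_k - x_k^{\mathrm{ref}} \rVert_Q^2
    + \lVert u_k \rVert_R^2 \right)
    + \lVert x_N - x_N^{\mathrm{ref}} \rVert_P^2 \\
\text{s.t.} \quad
  & x_{k+1} = A x_k + B u_k \quad \text{(LMPC)}
    \quad \text{or} \quad
    x_{k+1} = f(x_k, u_k) \quad \text{(NMPC)}, \\
  & x_0 = x(t), \qquad u_{\min} \le u_k \le u_{\max}.
\end{aligned}
```

At each control step only the first optimal input $u_0$ is applied, and the problem is solved again from the newly measured state.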

Flight Time Improvement Using Adaptive Model Predictive Control for Unmanned Aerial Vehicles

Nov 11, 2024

Abstract: Intelligent aerial platforms such as Unmanned Aerial Vehicles (UAVs) are expected to revolutionize various fields, including transportation, traffic management, field monitoring, industrial production, and agricultural management. Among these, precise control is a critical task that determines the performance and capabilities of UAV systems. However, current research primarily focuses on trajectory tracking and minimizing flight errors, with limited attention to improving flight time. In this paper, we propose a Model Predictive Control (MPC) approach aimed at minimizing flight time while addressing the limitations of the commonly used classical MPC controllers. Furthermore, the MPC method and its application for UAV control are presented in detail. Finally, the results demonstrate that the proposed controller outperforms the standard MPC in terms of efficiency. Moreover, this approach shows potential to become a foundation for integrating intelligent algorithms into basic controllers.

Development of a Human-Robot Interaction Platform for Dual-Arm Robots Based on ROS and Multimodal Artificial Intelligence

Nov 08, 2024

Abstract: In this paper, we propose the development of an interactive platform between humans and a dual-arm robotic system based on the Robot Operating System (ROS) and a multimodal artificial intelligence model. Our proposed platform consists of two main components: a dual-arm robotic hardware system and software that includes image processing tasks and natural language processing using a 3D camera and embedded computing. First, we designed and developed a dual-arm robotic system with a positional accuracy of less than 2 cm, capable of operating independently, performing industrial and service tasks while simultaneously simulating and modeling the robot in the ROS environment. Second, artificial intelligence models for image processing are integrated to execute object picking and classification tasks with an accuracy of over 90%. Finally, we developed remote control software using voice commands through a natural language processing model. Experimental results demonstrate the accuracy of the multimodal artificial intelligence model and the flexibility of the dual-arm robotic system in interactive human environments.

Enhancing Depth Image Estimation for Underwater Robots by Combining Image Processing and Machine Learning

Nov 08, 2024

Abstract: Depth information plays a crucial role in autonomous systems for environmental perception and robot state estimation. With the rapid development of deep neural network technology, depth estimation has been extensively studied and shown potential for practical applications. However, in particularly challenging environments such as low-light and noisy underwater conditions, direct application of machine learning models may not yield the desired results. Therefore, in this paper, we present an approach to enhance underwater image quality to improve depth estimation effectiveness. First, underwater images are processed through methods such as color compensation, brightness equalization, and enhancement of contrast and sharpness of objects in the image. Next, we perform depth estimation using the Udepth model on the enhanced images. Finally, the results are evaluated and presented to verify the effectiveness and accuracy of the enhanced depth image quality approach for underwater robots.

Development of an indoor localization and navigation system based on monocular SLAM for mobile robots

Nov 08, 2024

Abstract: Localization and navigation are two crucial issues for mobile robots. In this paper, we propose an approach for localization and navigation systems for a differential-drive robot based on monocular SLAM. The system is implemented on the Robot Operating System (ROS). The hardware includes a differential-drive robot with an embedded computing platform (Jetson Xavier AGX), a 2D camera, and a LiDAR sensor for collecting external environmental information. The A* algorithm and Dynamic Window Approach (DWA) are used for path planning based on a 2D grid map. The ORB_SLAM3 algorithm is utilized to extract environmental features, providing the robot's pose for the localization and navigation processes. Finally, the system is tested in the Gazebo simulation environment and visualized through Rviz, demonstrating the efficiency and potential of the system for indoor localization and navigation of mobile robots.
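As an illustration of the global planning step, the sketch below runs A* on a small 2D occupancy grid. It is a generic textbook version, not the paper's ROS implementation: the 4-connected neighborhood, unit step cost, Manhattan heuristic, and the `astar` function name are assumptions, and the DWA local planner is not shown.

```python
import heapq


def astar(grid, start, goal):
    """Shortest 4-connected path on a grid of 0 (free) / 1 (occupied) cells.

    Cells are (row, col) tuples. Returns the path as a list of cells from
    start to goal, or None if the goal cannot be reached.
    """
    rows, cols = len(grid), len(grid[0])

    def heuristic(cell):
        # Manhattan distance: admissible for unit-cost 4-connected moves.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(heuristic(start), 0, start)]  # entries are (f, g, cell)
    came_from = {start: None}
    g_cost = {start: 0}
    while open_heap:
        _, g, cell = heapq.heappop(open_heap)
        if cell == goal:
            path = []
            while cell is not None:  # walk parent links back to the start
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        if g > g_cost[cell]:
            continue  # stale heap entry; a cheaper route was already found
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == 0:
                new_g = g + 1
                if new_g < g_cost.get(nxt, float("inf")):
                    g_cost[nxt] = new_g
                    came_from[nxt] = cell
                    heapq.heappush(open_heap, (new_g + heuristic(nxt), new_g, nxt))
    return None


grid = [
    [0, 0, 0],
    [1, 1, 0],
    [0, 0, 0],
]
print(astar(grid, (0, 0), (2, 0)))
```

In a typical navigation stack the cell path would then be handed to a local planner such as DWA, which turns it into velocity commands while reacting to nearby obstacles.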
