Tetsu Kasanishi

SciReviewGen: A Large-scale Dataset for Automatic Literature Review Generation

May 24, 2023

Abstract: Automatic literature review generation is one of the most challenging tasks in natural language processing. Although large language models have tackled literature review generation, the absence of large-scale datasets has been a stumbling block to the progress. We release SciReviewGen, consisting of over 10,000 literature reviews and 690,000 papers cited in the reviews. Based on the dataset, we evaluate recent transformer-based summarization models on the literature review generation task, including Fusion-in-Decoder extended for literature review generation. Human evaluation results show that some machine-generated summaries are comparable to human-written reviews, while revealing the challenges of automatic literature review generation such as hallucinations and a lack of detailed information. Our dataset and code are available at https://github.com/tetsu9923/SciReviewGen.

Edge-Level Explanations for Graph Neural Networks by Extending Explainability Methods for Convolutional Neural Networks

Nov 01, 2021

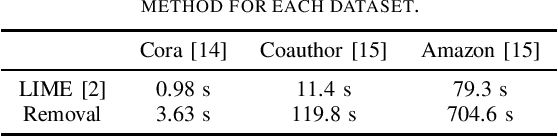

Abstract: Graph Neural Networks (GNNs) are deep learning models that take graph data as inputs, and they are applied to various tasks such as traffic prediction and molecular property prediction. However, owing to the complexity of GNNs, it has been difficult to analyze which parts of the inputs affect a GNN model's outputs. In this study, we extend explainability methods for Convolutional Neural Networks (CNNs), such as Local Interpretable Model-Agnostic Explanations (LIME), Gradient-Based Saliency Maps, and Gradient-Weighted Class Activation Mapping (Grad-CAM), to GNNs, and predict which edges in the input graphs are important for GNN decisions. The experimental results indicate that the LIME-based approach is the most efficient explainability method for multiple tasks in real-world situations, outperforming even the state-of-the-art method in GNN explainability.
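To make the idea of a LIME-style edge-level explanation concrete, here is a minimal sketch. It is not the paper's implementation: the toy graph, the node features, the one-step sum-aggregation "model" (`toy_gnn`), and the helper `edge_importance` are all hypothetical, chosen only for illustration. The sketch switches edges on and off, records the model output for a target node, and fits a linear surrogate whose coefficients serve as edge importance scores. Because the graph is tiny, it enumerates every edge mask instead of sampling, and it omits the locality weighting that LIME normally applies.

```python
import itertools

import numpy as np

# Hypothetical toy graph: 4 nodes, undirected edges, one scalar feature per node.
EDGES = [(0, 1), (0, 2), (1, 2), (2, 3)]
FEATURES = np.array([0.0, 5.0, 1.0, 2.0])
TARGET = 0  # node whose output we want to explain


def toy_gnn(edge_mask):
    """Stand-in for a GNN: one round of sum aggregation over kept edges.

    Returns the aggregated value at the target node.
    """
    n = len(FEATURES)
    adj = np.zeros((n, n))
    for keep, (u, v) in zip(edge_mask, EDGES):
        if keep:
            adj[u, v] = adj[v, u] = 1.0
    return float((adj @ FEATURES)[TARGET])


def edge_importance():
    """Fit a linear surrogate on edge masks; coefficients are edge scores."""
    # Enumerate all 2^|E| on/off patterns (feasible only for tiny graphs).
    masks = np.array(
        list(itertools.product([0, 1], repeat=len(EDGES))), dtype=float
    )
    outputs = np.array([toy_gnn(m) for m in masks])
    # Append an intercept column, then solve ordinary least squares.
    design = np.hstack([masks, np.ones((len(masks), 1))])
    coef, *_ = np.linalg.lstsq(design, outputs, rcond=None)
    return coef[:-1]  # drop the intercept


importance = edge_importance()
ranked = [EDGES[i] for i in np.argsort(-importance)]
for edge, score in zip(EDGES, importance):
    print(edge, round(float(score), 3))
```

In this toy setting the surrogate assigns the largest scores to the two edges incident to the target node and scores near zero to the rest, since a single aggregation step cannot see beyond direct neighbours. A real GNN is nonlinear and multi-layer, so the surrogate would only approximate it locally.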
