Tatsuya Sakai

Estimation of User's World Model Using Graph2vec

Jan 10, 2023

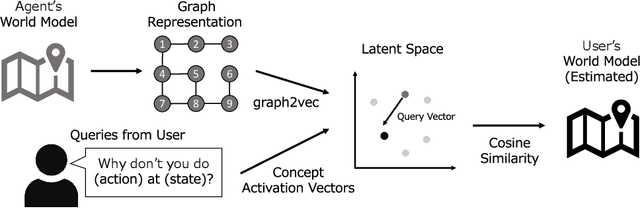

Abstract: To obtain advanced interaction between autonomous robots and users, robots should be able to distinguish their state space representations (i.e., world models). Herein, a novel method was proposed for estimating the user's world model based on queries. In this method, the agent learns the distributed representation of world models using graph2vec and generates concept activation vectors that represent the meaning of queries in the latent space. Experimental results revealed that the proposed method can estimate the user's world model more efficiently than the simple method of using the "AND" search of queries.
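The idea of representing a query as a direction in the latent space can be illustrated with a small sketch. It is not the paper's implementation. It assumes that each candidate world model already has an embedding (for example, one produced by graph2vec) and that each query can be expressed as a boolean mask over the candidates. The concept activation vector is approximated here by a difference of class means, which is a simplification chosen for brevity. The function names and the toy data are hypothetical.

```python
import numpy as np


def concept_activation_vector(embeddings, satisfies):
    """Unit vector pointing from candidates that do not satisfy a query
    toward those that do (difference-of-means simplification).

    Assumes both groups are non-empty and their means differ.
    """
    pos_mean = embeddings[satisfies].mean(axis=0)
    neg_mean = embeddings[~satisfies].mean(axis=0)
    direction = pos_mean - neg_mean
    return direction / np.linalg.norm(direction)


def rank_candidates(embeddings, query_masks):
    """Rank candidates by their summed projection onto each query's vector.

    Returns candidate indices, most consistent with the queries first.
    """
    centered = embeddings - embeddings.mean(axis=0)
    scores = np.zeros(len(embeddings))
    for mask in query_masks:
        scores += centered @ concept_activation_vector(embeddings, mask)
    return np.argsort(-scores)


def and_search(query_masks):
    """Baseline: keep only candidates that satisfy every query."""
    return np.flatnonzero(np.logical_and.reduce(query_masks))


# Hypothetical toy data: four candidate world models embedded in 2-D.
embeddings = np.array([[1.0, 1.0], [1.0, -1.0], [-1.0, 1.0], [-1.0, -1.0]])
query_a = np.array([True, True, False, False])  # hypothetical query A
query_b = np.array([True, False, True, False])  # hypothetical query B

ranking = rank_candidates(embeddings, [query_a, query_b])
and_hits = and_search([query_a, query_b])
```

In this toy case both approaches single out candidate 0. The projection-based ranking additionally orders the remaining candidates, whereas the "AND" search only returns the exact intersection.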

A Framework of Explanation Generation toward Reliable Autonomous Robots

May 06, 2021

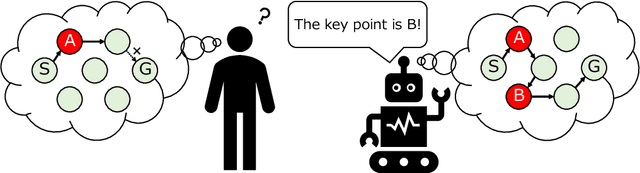

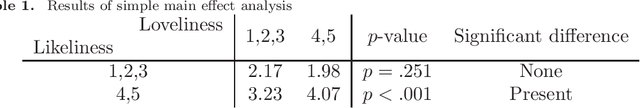

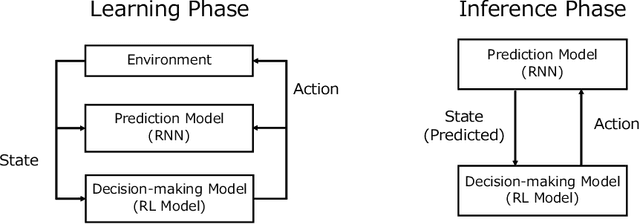

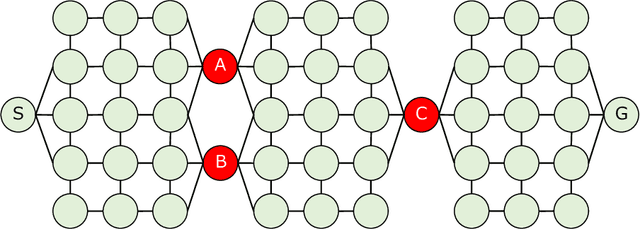

Abstract: To realize autonomous collaborative robots, it is important to increase the trust that users have in them. Toward this goal, this paper proposes an algorithm that endows an autonomous agent with the ability to explain the transition from the current state to the target state in a Markov decision process (MDP). According to cognitive science, to generate an explanation that is acceptable to humans, it is important to present the minimum information necessary to sufficiently understand an event. To meet this requirement, this study proposes a framework for identifying important elements in the decision-making process using a prediction model for the world and generating explanations based on these elements. To verify the ability of the proposed method to generate explanations, we conducted an experiment using a grid environment. The results of a simulation experiment indicated that the explanation generated using the proposed method was composed of the minimum elements important for understanding the transition from the current state to the target state. Furthermore, subject experiments showed that the generated explanation was a good summary of the process of state transition, and that the explanation of the reason for an action was rated highly.

Explainable Autonomous Robots: A Survey and Perspective

May 06, 2021

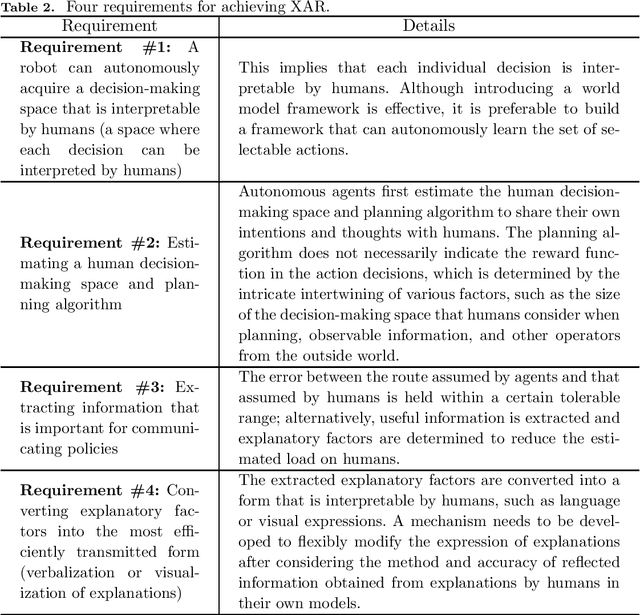

Abstract: Advanced communication protocols are critical to enable the coexistence of autonomous robots with humans. Thus, the development of explanatory capabilities is an urgent first step toward autonomous robots. This survey provides an overview of the various types of "explainability" discussed in machine learning research. Then, we discuss the definition of "explainability" in the context of autonomous robots (i.e., explainable autonomous robots) by exploring the question "what is an explanation?" We further conduct a research survey based on this definition and present some relevant topics for future research.