Tarje Nissen-Meyer

Gaussian Processes for Probabilistic Estimates of Earthquake Ground Shaking: A 1-D Proof-of-Concept

Dec 04, 2024

Abstract: Estimates of seismic wave speeds in the Earth (seismic velocity models) are key input parameters to earthquake simulations for ground motion prediction. Owing to the non-uniqueness of the seismic inverse problem, typically many velocity models exist for any given region. The arbitrary choice of which velocity model to use in earthquake simulations impacts ground motion predictions. However, current hazard analysis methods do not account for this source of uncertainty. We present a proof-of-concept ground motion prediction workflow for incorporating uncertainties arising from inconsistencies between existing seismic velocity models. Our analysis is based on the probabilistic fusion of overlapping seismic velocity models using scalable Gaussian process (GP) regression. Specifically, we fit a GP to two synthetic 1-D velocity profiles simultaneously, and show that the predictive uncertainty accounts for the differences between the models. We subsequently draw velocity model samples from the predictive distribution and estimate peak ground displacement using acoustic wave propagation through the velocity models. The resulting distribution of possible ground motion amplitudes is much wider than would be predicted by simulating shaking using only the two input velocity models. This proof-of-concept illustrates the importance of probabilistic methods for physics-based seismic hazard analysis.
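The fusion step described in the abstract can be illustrated with a minimal sketch, which is not the authors' implementation. It assumes an exact (non-scalable) GP with a squared-exponential kernel, two made-up linear-plus-perturbation velocity profiles, fixed kernel amplitude and length scale, and a noise level chosen by a small grid search over the log marginal likelihood. All names and numbers here are hypothetical.

```python
import numpy as np

def rbf(a, b, amp, length):
    """Squared-exponential kernel between 1-D input arrays a and b."""
    d = a[:, None] - b[None, :]
    return amp**2 * np.exp(-0.5 * (d / length) ** 2)

def log_marginal_likelihood(z, y, amp, length, noise):
    """Log evidence of a zero-mean GP with i.i.d. Gaussian noise."""
    K = rbf(z, z, amp, length) + noise**2 * np.eye(len(z))
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))
    return (-0.5 * y @ alpha - np.log(np.diag(L)).sum()
            - 0.5 * len(z) * np.log(2.0 * np.pi))

def gp_predict(z, y, z_new, amp, length, noise):
    """Posterior mean and covariance of the latent function at z_new."""
    K = rbf(z, z, amp, length) + noise**2 * np.eye(len(z))
    Ks = rbf(z_new, z, amp, length)
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))
    V = np.linalg.solve(L, Ks.T)
    return Ks @ alpha, rbf(z_new, z_new, amp, length) - V.T @ V

# Two hypothetical 1-D velocity profiles (km/s) on the same depth grid (km).
depth = np.linspace(0.0, 10.0, 25)
profile_a = 3.0 + 0.30 * depth
profile_b = 3.2 + 0.25 * depth + 0.2 * np.sin(depth)

# Fit one GP to both profiles at once by pooling their samples.
z_all = np.concatenate([depth, depth])
v_all = np.concatenate([profile_a, profile_b])
v_mean = v_all.mean()

# Assumed fixed kernel amplitude and length scale; only the noise is tuned.
amp, length = 1.0, 3.0
noise_grid = [0.01, 0.05, 0.1, 0.2, 0.4]
best_noise = max(
    noise_grid,
    key=lambda s: log_marginal_likelihood(z_all, v_all - v_mean, amp, length, s),
)

z_new = np.linspace(0.0, 10.0, 50)
mean, cov = gp_predict(z_all, v_all - v_mean, z_new, amp, length, best_noise)
mean = mean + v_mean

# Predictive covariance includes the noise term, which is where the
# disagreement between the two profiles ends up in this simple setup.
cov_pred = cov + best_noise**2 * np.eye(len(z_new))
std = np.sqrt(np.clip(np.diag(cov_pred), 0.0, None))

# Draw candidate velocity models from the predictive distribution.
rng = np.random.default_rng(0)
samples = rng.multivariate_normal(mean, cov_pred, size=5)
```

In this setup the two profiles disagree at every depth, so a very small noise level is strongly penalised by the marginal likelihood and the fitted noise absorbs the disagreement. Samples drawn this way are rough because the noise is uncorrelated in depth. Each sample would then be passed to a wave propagation solver, which is outside the scope of this sketch.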

Finite Basis Physics-Informed Neural Networks (FBPINNs): a scalable domain decomposition approach for solving differential equations

Jul 16, 2021

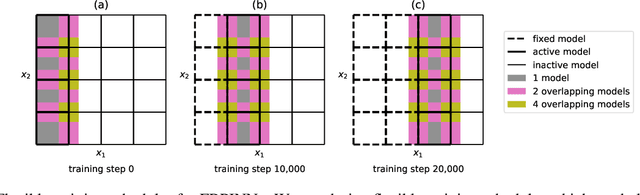

Abstract: Recently, physics-informed neural networks (PINNs) have offered a powerful new paradigm for solving problems relating to differential equations. Compared to classical numerical methods, PINNs have several advantages, for example their ability to provide mesh-free solutions of differential equations and their ability to carry out forward and inverse modelling within the same optimisation problem. Whilst promising, a key limitation to date is that PINNs have struggled to accurately and efficiently solve problems with large domains and/or multi-scale solutions, which is crucial for their real-world application. Multiple significant and related factors contribute to this issue, including the increasing complexity of the underlying PINN optimisation problem as the problem size grows and the spectral bias of neural networks. In this work we propose a new, scalable approach for solving large problems relating to differential equations called Finite Basis PINNs (FBPINNs). FBPINNs are inspired by classical finite element methods, where the solution of the differential equation is expressed as the sum of a finite set of basis functions with compact support. In FBPINNs neural networks are used to learn these basis functions, which are defined over small, overlapping subdomains. FBPINNs are designed to address the spectral bias of neural networks by using separate input normalisation over each subdomain, and reduce the complexity of the underlying optimisation problem by using many smaller neural networks in a parallel divide-and-conquer approach. Our numerical experiments show that FBPINNs are effective in solving both small and larger, multi-scale problems, outperforming standard PINNs in both accuracy and computational resources required, potentially paving the way to the application of PINNs on large, real-world problems.
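The structure of the solution ansatz described in the abstract (a sum of compactly supported basis functions, each learned by a small network with its own input normalisation) can be sketched as follows. This is only an illustration of the forward pass under stated assumptions: the cosine-squared window, the tiny untrained tanh networks, and all names are hypothetical choices, and no physics loss or training is included.

```python
import numpy as np

rng = np.random.default_rng(0)

def window(x, center, half_width):
    """Compactly supported bump: nonzero only within half_width of center."""
    t = (x - center) / half_width
    return np.where(np.abs(t) < 1.0, np.cos(0.5 * np.pi * t) ** 2, 0.0)

def init_mlp(hidden=16):
    """Random parameters for a one-hidden-layer network (untrained)."""
    return {
        "W1": rng.normal(size=(1, hidden)),
        "b1": np.zeros(hidden),
        "W2": rng.normal(size=(hidden, 1)) / np.sqrt(hidden),
        "b2": np.zeros(1),
    }

def mlp(params, x):
    """Evaluate the small network on a 1-D array of inputs."""
    h = np.tanh(x[:, None] @ params["W1"] + params["b1"])
    return (h @ params["W2"] + params["b2"])[:, 0]

# Overlapping subdomains on [0, 1]: neighbouring windows overlap by half.
n_sub = 5
centers = np.linspace(0.0, 1.0, n_sub)
half_width = centers[1] - centers[0]
nets = [init_mlp() for _ in centers]

def fbpinn_ansatz(x):
    """Sum of window-weighted subdomain networks."""
    u = np.zeros_like(x)
    for c, params in zip(centers, nets):
        # Separate input normalisation: map each subdomain to [-1, 1].
        x_local = (x - c) / half_width
        u += window(x, c, half_width) * mlp(params, x_local)
    return u

x = np.linspace(0.0, 1.0, 201)
windows = np.stack([window(x, c, half_width) for c in centers])
u = fbpinn_ansatz(x)
```

With this particular window and spacing the windows sum to one across the domain, so each point is influenced only by the one or two networks whose subdomains contain it. In a trained model, the parameters of every subdomain network would be optimised against the differential equation residual, and the subdomain networks could be evaluated in parallel.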
