Tanuja Bompada

A Unified Batch Online Learning Framework for Click Prediction

Sep 12, 2018

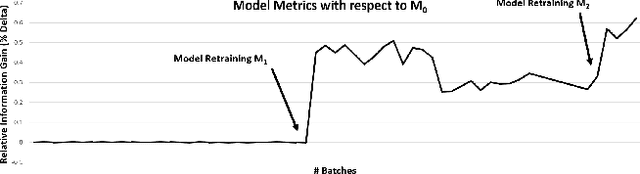

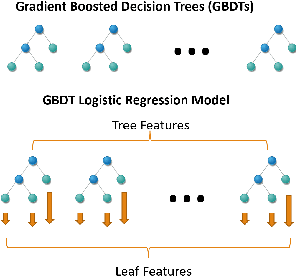

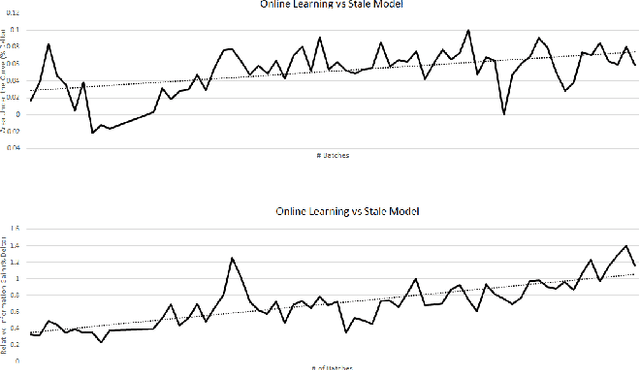

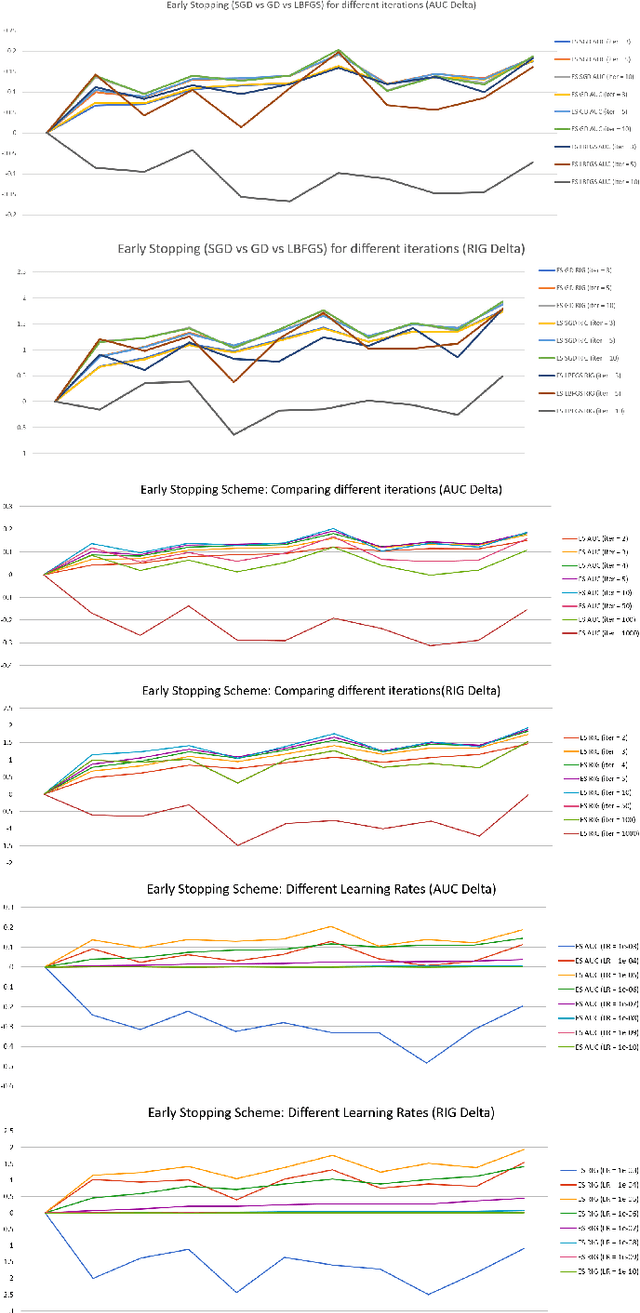

Abstract: We present a unified framework for Batch Online Learning (OL) for Click Prediction in Search Advertisement. Machine Learning models, once deployed, show non-trivial accuracy and calibration degradation over time due to model staleness. It is therefore necessary to update models regularly, and to do so automatically. This paper presents two paradigms of Batch Online Learning: one that incrementally updates the model parameters via an early stopping mechanism, and another that does so through proximal regularization. We argue that both schemes naturally trade off between old and new data. We then show, theoretically and empirically, that these two seemingly different schemes are closely related. Through extensive experiments, we demonstrate the utility of our OL framework, how the two OL schemes relate to each other, and how they trade off between new and historical data. We then compare batch OL to full model retrains and show that online learning is more robust to data issues. We also demonstrate the long-term impact of Online Learning, the role of the initial models in OL, and the impact of delays in the update, and conclude with some implementation details and challenges in deploying a real-world online learning system in production. While this paper mostly focuses on the application of click prediction for search advertisement, we hope that the lessons learned here can be carried over to other problem domains.
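The abstract names the two update schemes but gives no formulas, so the following is only a minimal sketch of how they might look, not the authors' implementation. It assumes a logistic-regression click model, plain gradient descent, and a squared-L2 proximal penalty `(lam / 2) * ||w - w_old||^2`. Every name here (`update_early_stopping`, `update_proximal`, `lam`, `steps`, `lr`) and the synthetic data are hypothetical.

```python
import math
import random


def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def gradient(w, batch):
    """Average log-loss gradient over (features, click) pairs."""
    g = [0.0] * len(w)
    for x, y in batch:
        err = sigmoid(sum(wi * xi for wi, xi in zip(w, x))) - y
        for j, xj in enumerate(x):
            g[j] += err * xj / len(batch)
    return g


def update_early_stopping(w_old, batch, steps=5, lr=0.5):
    """Assumed scheme 1: warm-start from the old model and stop after a few steps.

    Fewer steps keep the result closer to w_old (more weight on old data).
    """
    w = list(w_old)
    for _ in range(steps):
        g = gradient(w, batch)
        w = [wi - lr * gi for wi, gi in zip(w, g)]
    return w


def update_proximal(w_old, batch, lam=1.0, steps=500, lr=0.2):
    """Assumed scheme 2: run to (near) convergence on
    batch log-loss + (lam / 2) * ||w - w_old||^2.

    Larger lam keeps the result closer to w_old. Keep lr * lam well below 2,
    otherwise this plain gradient descent becomes unstable.
    """
    w = list(w_old)
    for _ in range(steps):
        g = gradient(w, batch)
        w = [wi - lr * (gi + lam * (wi - wo)) for wi, gi, wo in zip(w, g, w_old)]
    return w


def distance(a, b):
    return math.sqrt(sum((ai - bi) ** 2 for ai, bi in zip(a, b)))


# Synthetic "new" batch whose true weights have drifted away from the stale model.
random.seed(0)
true_w = [-1.0, 2.0]   # hypothetical drifted weights: [bias, feature]
w_old = [-0.5, 1.0]    # hypothetical stale model
batch = []
for _ in range(500):
    x = [1.0, random.gauss(0.0, 1.0)]
    p = sigmoid(sum(wi * xi for wi, xi in zip(true_w, x)))
    batch.append((x, 1 if random.random() < p else 0))

for steps in (1, 5, 50):
    w = update_early_stopping(w_old, batch, steps=steps)
    print("early stopping, steps =", steps, "distance from old model:", distance(w, w_old))

for lam in (5.0, 1.0, 0.1):
    w = update_proximal(w_old, batch, lam=lam)
    print("proximal, lam =", lam, "distance from old model:", distance(w, w_old))
```

In this sketch the step count and `lam` play the same role from opposite directions: fewer steps or a larger `lam` keep the updated weights near the old model, while more steps or a smaller `lam` let the new batch dominate. That is one concrete way to read the abstract's claim that both schemes trade off old against new data.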
