Svein-Erik Måsøy

Coherence Based Sound Speed Aberration Correction -- with clinical validation in obstetric ultrasound

Nov 25, 2024

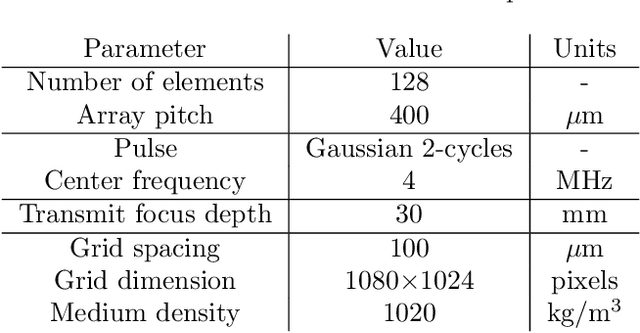

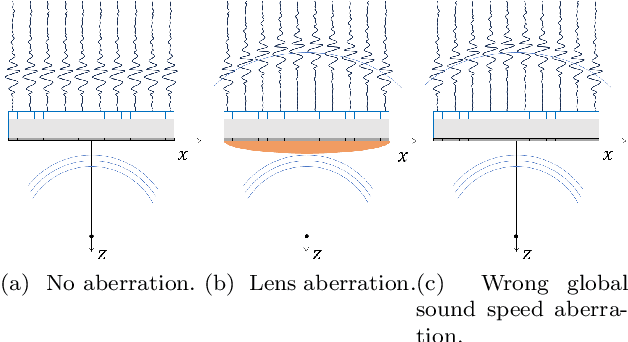

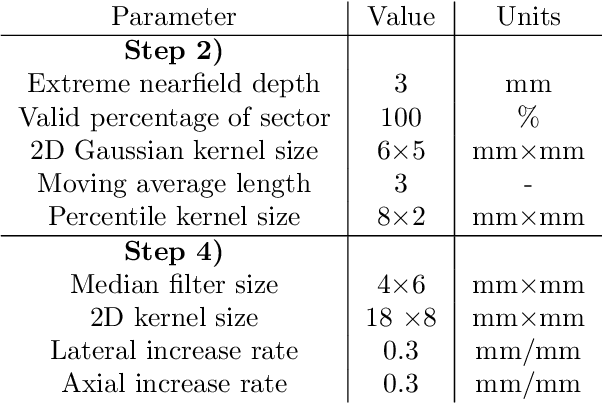

Abstract:The purpose of this work is to demonstrate a robust and clinically validated method for correcting sound speed aberrations in medical ultrasound. We propose a correction method that calculates focusing delays directly from the observed two-way distributed average sound speed. The method beamforms multiple coherence images and selects the sound speed that maximizes the coherence for each image pixel. The main contribution of this work is the direct estimation of aberration, without the ill-posed inversion of a local sound speed map, and the proposed processing of coherence images which adapts to in vivo situations where low coherent regions and off-axis scattering represents a challenge. The method is validated in vitro and in silico showing high correlation with ground truth speed of sound maps. Further, the method is clinically validated by being applied to channel data recorded from 172 obstetric Bmode images, and 12 case examples are presented and discussed in detail. The data is recorded with a GE HealthCare Voluson Expert 22 system with an eM6c matrix array probe. The images are evaluated by three expert clinicians, and the results show that the corrected images are preferred or gave equivalent quality to no correction (1540m/s) for 72.5% of the 172 images. In addition, a sharpness metric from digital photography is used to quantify image quality improvement. The increase in sharpness and the change in average sound speed are shown to be linearly correlated with a Pearson Correlation Coefficient of 0.67.

Regional quality estimation for echocardiography using deep learning

Aug 01, 2024Abstract:Automatic estimation of cardiac ultrasound image quality can be beneficial for guiding operators and ensuring the accuracy of clinical measurements. Previous work often fails to distinguish the view correctness of the echocardiogram from the image quality. Additionally, previous studies only provide a global image quality value, which limits their practical utility. In this work, we developed and compared three methods to estimate image quality: 1) classic pixel-based metrics like the generalized contrast-to-noise ratio (gCNR) on myocardial segments as region of interest and left ventricle lumen as background, obtained using a U-Net segmentation 2) local image coherence derived from a U-Net model that predicts coherence from B-Mode images 3) a deep convolutional network that predicts the quality of each region directly in an end-to-end fashion. We evaluate each method against manual regional image quality annotations by three experienced cardiologists. The results indicate poor performance of the gCNR metric, with Spearman correlation to the annotations of \r{ho} = 0.24. The end-to-end learning model obtains the best result, \r{ho} = 0.69, comparable to the inter-observer correlation, \r{ho} = 0.63. Finally, the coherence-based method, with \r{ho} = 0.58, outperformed the classical metrics and is more generic than the end-to-end approach.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge