Sung Ho Kang

ReCo-Diff: Residual-Conditioned Deterministic Sampling for Cold Diffusion in Sparse-View CT

Mar 03, 2026

Abstract:Cold and generalized diffusion models have recently shown strong potential for sparse-view CT reconstruction by explicitly modeling deterministic degradation processes. However, existing sampling strategies often rely on ad hoc sampling controls or fixed schedules, which remain sensitive to error accumulation and sampling instability. We propose ReCo-Diff, a residual-conditioned diffusion framework that leverages observation residuals through residual-conditioned self-guided sampling. At each sampling step, ReCo-Diff first produces a null (unconditioned) baseline reconstruction and then conditions subsequent predictions on the observation residual between the predicted image and the measured sparse-view input. This residual-driven guidance provides continuous, measurement-aware correction while preserving a deterministic sampling schedule, without requiring heuristic interventions. Experimental results demonstrate that ReCo-Diff consistently outperforms existing cold diffusion sampling baselines, achieving higher reconstruction accuracy, improved stability, and enhanced robustness under severe sparsity.
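The two-pass sampling step described in this abstract can be illustrated with a toy 1D sketch. Everything here is an assumption made for illustration: `degrade` is a simple blend toward a masked signal, `restore` is a moving-average stand-in for the trained network, the residual is taken in the signal domain, and the update rule is the standard cold diffusion step rather than anything confirmed from the paper.

```python
import numpy as np

def degrade(x0, t, T, mask):
    """Toy deterministic degradation (assumption): blend the clean signal
    toward its masked, sparse-view version as t goes from 0 to T."""
    alpha = t / T
    return (1.0 - alpha) * x0 + alpha * (mask * x0)

def restore(x_t, residual):
    """Hypothetical stand-in for the trained restoration network:
    a 3-tap moving average plus the conditioning residual."""
    smoothed = np.convolve(x_t, np.ones(3) / 3.0, mode="same")
    return smoothed + residual

def residual_conditioned_sample(y, mask, T=4):
    """Deterministic sampling loop with a null pass followed by a
    residual-conditioned pass at every step."""
    x_t = y.copy()  # start from the fully degraded observation
    for t in range(T, 0, -1):
        # Pass 1: null baseline with a zero residual as conditioning.
        x0_null = restore(x_t, np.zeros_like(x_t))
        # Observation residual between the measurement and the baseline.
        residual = y - mask * x0_null
        # Pass 2: prediction conditioned on that residual.
        x0_hat = restore(x_t, residual)
        # Cold diffusion style update: swap degradation level t for t - 1.
        x_t = x_t - degrade(x0_hat, t, T, mask) + degrade(x0_hat, t - 1, T, mask)
    return x_t

x_true = np.sin(np.linspace(0.0, np.pi, 16))
mask = (np.arange(16) % 2 == 0).astype(float)  # keep every other sample
y = mask * x_true                               # sparse measurement
recon = residual_conditioned_sample(y, mask)
```

In this toy setup the residual pass pulls the prediction back onto the measured samples at every step, so the output stays consistent with `y` wherever `mask` is 1, and the schedule itself is never altered.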

Domain Adaptation based on Human Feedback for Enhancing Generative Model Denoising Abilities

Aug 01, 2023

Abstract:How can human feedback be applied to generative models? To answer this question, we present a method that uses human feedback for image denoising and domain adaptation. Deep generative models have demonstrated impressive results in image denoising. However, current image denoising models often produce inappropriate results when applied to domains different from the ones they were trained on. If a model yields both 'Good' and 'Bad' results on unseen data, how can the quality of the 'Bad' results be raised? Most methods take an approach based on model generalization. However, these methods require target images for training or for adapting to the unseen domain. In this paper, we adapt to an unseen domain without target images and improve specific failed images. To this end, we propose a method for fine-tuning inappropriate results generated in a different domain by utilizing human feedback. First, we train a generator to denoise images using only noisy MNIST digit '0' images. The denoising generator trained on the source domain produces unintended results when applied to target domain images. To achieve domain adaptation, we construct a data set of noisy images and their denoised generated images and train a reward model to predict human feedback. Finally, we fine-tune the generator on the different domain using the reward model with an auxiliary loss function, aiming to transfer denoising capabilities to the target domain. Our approach demonstrates the potential to efficiently fine-tune a generator trained on one domain using human feedback from another domain, thereby enhancing denoising abilities in different domains.
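The final fine-tuning step combines a learned reward with an auxiliary loss. The abstract does not specify the auxiliary term, so the sketch below assumes a mean squared distance to the outputs of the frozen source-domain generator; the function name `finetune_loss` and the weight `lam` are hypothetical as well.

```python
import numpy as np

def finetune_loss(reward_scores, new_out, frozen_out, lam=0.1):
    """Illustrative fine-tuning objective (assumed form, not the paper's).

    reward_scores: reward-model predictions for the fine-tuned outputs
    new_out:       outputs of the generator being fine-tuned
    frozen_out:    outputs of the frozen source-domain generator
    lam:           weight of the auxiliary term (hypothetical)
    """
    reward_term = -np.mean(reward_scores)            # maximize predicted feedback
    aux_term = np.mean((new_out - frozen_out) ** 2)  # stay close to the original
    return reward_term + lam * aux_term

loss = finetune_loss(
    reward_scores=np.array([0.5, 1.5]),
    new_out=np.zeros((2, 4)),
    frozen_out=np.full((2, 4), 2.0),
    lam=0.25,
)
```

An auxiliary term of this kind is a common way to keep a fine-tuned model from drifting too far while it chases the reward; whether the paper uses this exact form is not stated in the abstract.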

Automatic Three-Dimensional Cephalometric Annotation System Using Three-Dimensional Convolutional Neural Networks

Nov 19, 2018

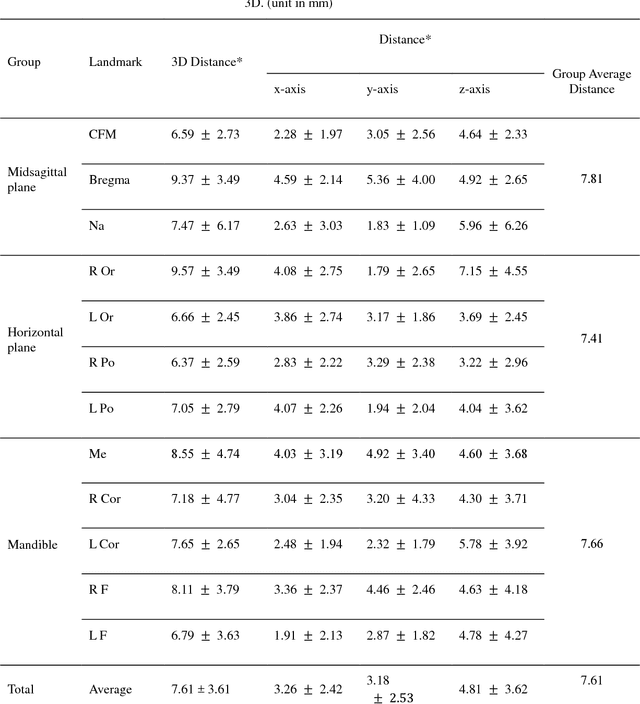

Abstract:Background: Three-dimensional (3D) cephalometric analysis using computerized tomography data has been rapidly adopted for dysmorphosis and anthropometry. Several different approaches to automatic 3D annotation have been proposed to overcome the limitations of traditional cephalometry. The purpose of this study was to evaluate the accuracy of our newly-developed system using a deep learning algorithm for automatic 3D cephalometric annotation. Methods: To overcome current technical limitations, some measures were developed to directly annotate 3D human skull data. Our deep learning-based model system mainly consisted of a 3D convolutional neural network and image data resampling. Results: The discrepancies between the referenced and predicted coordinate values in three axes and in 3D distance were calculated to evaluate system accuracy. Our new model system yielded prediction errors of 3.26, 3.18, and 4.81 mm (for three axes) and 7.61 mm (for 3D). Moreover, there was no difference among the landmarks of the three groups, including the midsagittal plane, horizontal plane, and mandible (p>0.05). Conclusion: A new 3D convolutional neural network-based automatic annotation system for 3D cephalometry was developed. The strategies used to implement the system were detailed and measurement results were evaluated for accuracy. Further development of this system is planned for full clinical application of automatic 3D cephalometric annotation.
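The accuracy measures reported here, per-axis discrepancy and 3D distance between reference and predicted landmark coordinates, can be computed along the following lines. This is a sketch under assumptions: the abstract does not say how errors are aggregated, so means of absolute differences and of Euclidean distances are assumed, and `landmark_errors` is a hypothetical helper name.

```python
import numpy as np

def landmark_errors(reference, predicted):
    """Per-axis and 3D errors between landmark sets of shape (N, 3).

    Assumes coordinates are in millimetres and that errors are averaged
    over landmarks (the aggregation is an assumption).
    """
    reference = np.asarray(reference, dtype=float)
    predicted = np.asarray(predicted, dtype=float)
    diff = predicted - reference
    per_axis = np.abs(diff).mean(axis=0)           # mean |dx|, |dy|, |dz|
    dist_3d = np.linalg.norm(diff, axis=1).mean()  # mean Euclidean distance
    return per_axis, dist_3d

ref = [[0.0, 0.0, 0.0], [1.0, 1.0, 1.0]]
pred = [[3.0, 0.0, 4.0], [1.0, 1.0, 1.0]]
per_axis, dist_3d = landmark_errors(ref, pred)
```

For the two toy landmarks above, the first is off by a 3-4-5 triangle and the second is exact, which makes the averages easy to check by hand.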
