Summra Saleem

PassionNet: An Innovative Framework for Duplicate and Conflicting Requirements Identification

Dec 02, 2024

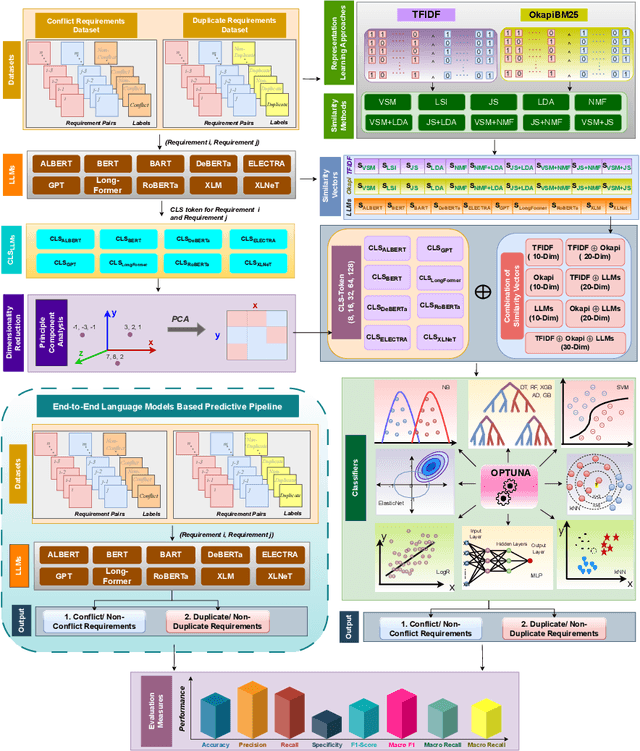

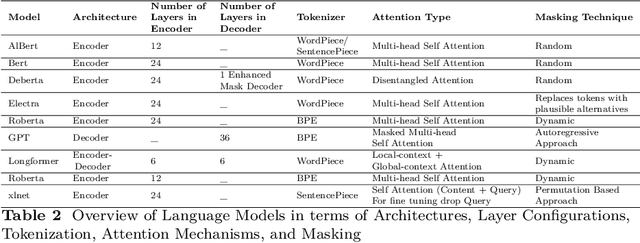

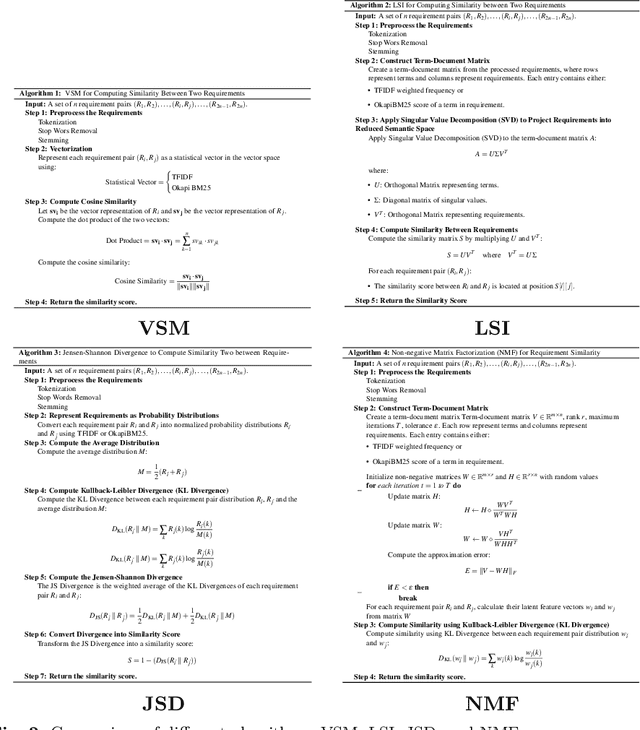

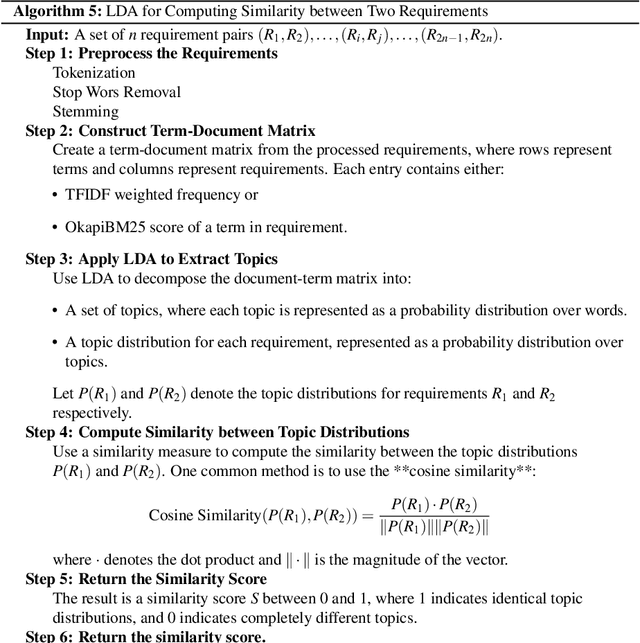

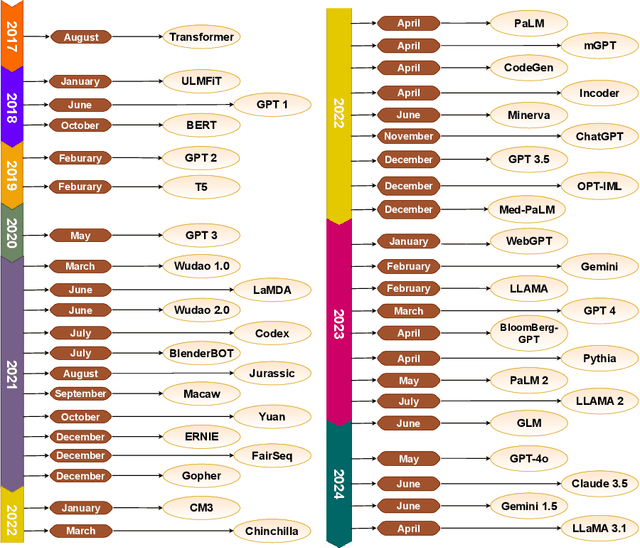

Abstract:Early detection and resolution of duplicate and conflicting requirements can significantly enhance project efficiency and overall software quality. Researchers have developed various computational predictors by leveraging Artificial Intelligence (AI) potential to detect duplicate and conflicting requirements. However, these predictors lack in performance and requires more effective approaches to empower software development processes. Following the need of a unique predictor that can accurately identify duplicate and conflicting requirements, this research offers a comprehensive framework that facilitate development of 3 different types of predictive pipelines: language models based, multi-model similarity knowledge-driven and large language models (LLMs) context + multi-model similarity knowledge-driven. Within first type predictive pipelines landscape, framework facilitates conflicting/duplicate requirements identification by leveraging 8 distinct types of LLMs. In second type, framework supports development of predictive pipelines that leverage multi-scale and multi-model similarity knowledge, ranging from traditional similarity computation methods to advanced similarity vectors generated by LLMs. In the third type, the framework synthesizes predictive pipelines by integrating contextual insights from LLMs with multi-model similarity knowledge. Across 6 public benchmark datasets, extensive testing of 760 distinct predictive pipelines demonstrates that hybrid predictive pipelines consistently outperforms other two types predictive pipelines in accurately identifying duplicate and conflicting requirements. This predictive pipeline outperformed existing state-of-the-art predictors performance with an overall performance margin of 13% in terms of F1-score

Generative Language Models Potential for Requirement Engineering Applications: Insights into Current Strengths and Limitations

Dec 01, 2024

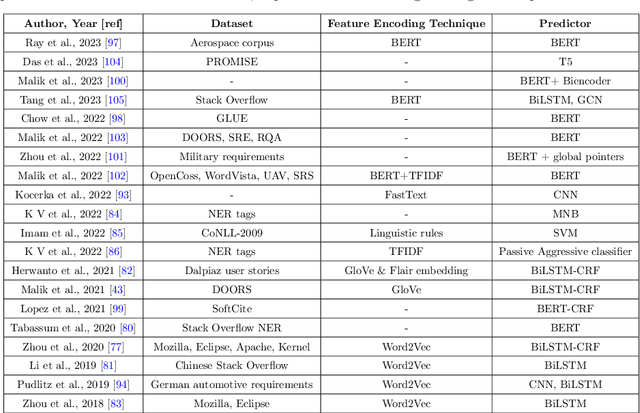

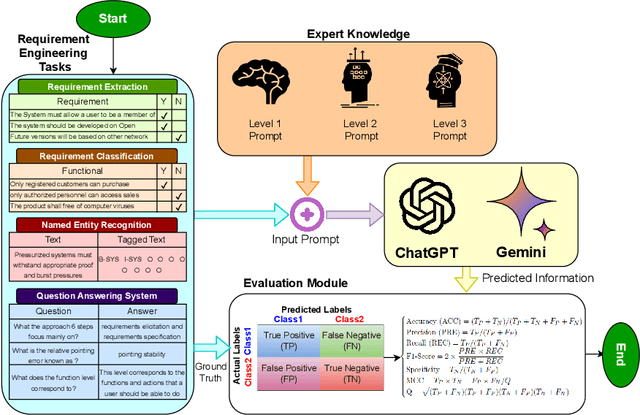

Abstract:Traditional language models have been extensively evaluated for software engineering domain, however the potential of ChatGPT and Gemini have not been fully explored. To fulfill this gap, the paper in hand presents a comprehensive case study to investigate the potential of both language models for development of diverse types of requirement engineering applications. It deeply explores impact of varying levels of expert knowledge prompts on the prediction accuracies of both language models. Across 4 different public benchmark datasets of requirement engineering tasks, it compares performance of both language models with existing task specific machine/deep learning predictors and traditional language models. Specifically, the paper utilizes 4 benchmark datasets; Pure (7,445 samples, requirements extraction),PROMISE (622 samples, requirements classification), REQuestA (300 question answer (QA) pairs) and Aerospace datasets (6347 words, requirements NER tagging). Our experiments reveal that, in comparison to ChatGPT, Gemini requires more careful prompt engineering to provide accurate predictions. Moreover, across requirement extraction benchmark dataset the state-of-the-art F1-score is 0.86 while ChatGPT and Gemini achieved 0.76 and 0.77,respectively. The State-of-the-art F1-score on requirements classification dataset is 0.96 and both language models 0.78. In name entity recognition (NER) task the state-of-the-art F1-score is 0.92 and ChatGPT managed to produce 0.36, and Gemini 0.25. Similarly, across question answering dataset the state-of-the-art F1-score is 0.90 and ChatGPT and Gemini managed to produce 0.91 and 0.88 respectively. Our experiments show that Gemini requires more precise prompt engineering than ChatGPT. Except for question-answering, both models under-perform compared to current state-of-the-art predictors across other tasks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge