Sultan Kenjeyev

Adversarial Conversational Shaping for Intelligent Agents

Jul 20, 2023

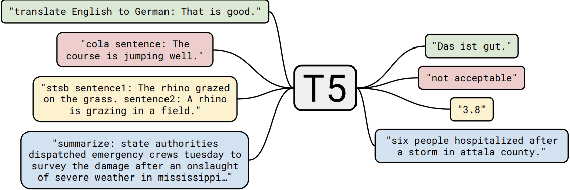

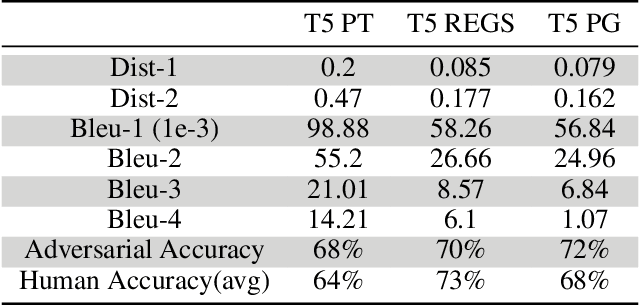

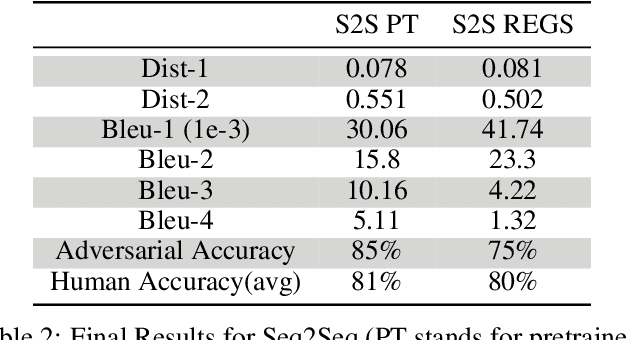

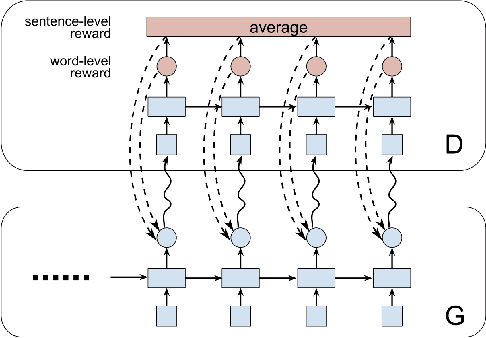

Abstract:The recent emergence of deep learning methods has enabled the research community to achieve state-of-the art results in several domains including natural language processing. However, the current robocall system remains unstable and inaccurate: text generator and chat-bots can be tedious and misunderstand human-like dialogue. In this work, we study the performance of two models able to enhance an intelligent conversational agent through adversarial conversational shaping: a generative adversarial network with policy gradient (GANPG) and a generative adversarial network with reward for every generation step (REGS) based on the REGS model presented in Li et al. [18] . This model is able to assign rewards to both partially and fully generated text sequences. We discuss performance with different training details : seq2seq [ 36] and transformers [37 ] in a reinforcement learning framework.

Out of Distribution Generalization via Interventional Style Transfer in Single-Cell Microscopy

Jun 15, 2023

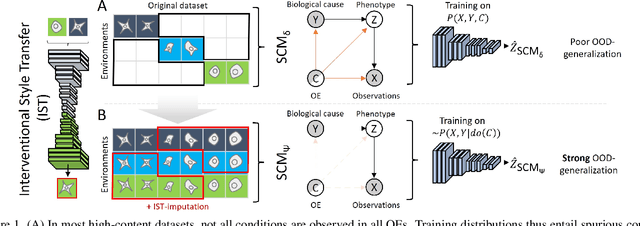

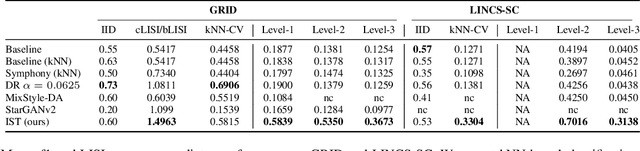

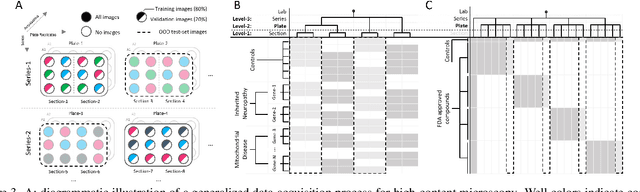

Abstract:Real-world deployment of computer vision systems, including in the discovery processes of biomedical research, requires causal representations that are invariant to contextual nuisances and generalize to new data. Leveraging the internal replicate structure of two novel single-cell fluorescent microscopy datasets, we propose generally applicable tests to assess the extent to which models learn causal representations across increasingly challenging levels of OOD-generalization. We show that despite seemingly strong performance, as assessed by other established metrics, both naive and contemporary baselines designed to ward against confounding, collapse on these tests. We introduce a new method, Interventional Style Transfer (IST), that substantially improves OOD generalization by generating interventional training distributions in which spurious correlations between biological causes and nuisances are mitigated. We publish our code and datasets.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge