Sujie Li

Tensor networks for unsupervised machine learning

Jun 24, 2021

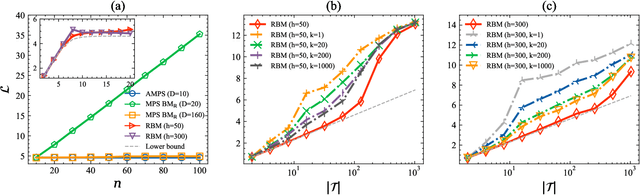

Abstract: Modeling the joint distribution of high-dimensional data is a central task in unsupervised machine learning. In recent years, learning models based on tensor networks have attracted considerable interest: their expressive power can be understood theoretically through entanglement properties, and they serve as a bridge between classical and quantum computation. Despite this potential, existing tensor-network-based unsupervised models serve only as proofs of principle, since they perform much worse than standard models such as restricted Boltzmann machines and neural networks. In this work, we present Autoregressive Matrix Product States (AMPS), a tensor-network-based model that combines matrix product states from quantum many-body physics with autoregressive models from machine learning. The model admits exact calculation of normalized probabilities and unbiased sampling, and its expressive power has a clear theoretical interpretation. We demonstrate the performance of our model in two applications: generative modeling on synthetic and real-world data, and reinforcement learning in statistical physics. Using extensive numerical experiments, we show that the proposed model significantly outperforms existing tensor-network-based models and restricted Boltzmann machines, and is competitive with state-of-the-art neural network models.
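The properties of exact normalization and unbiased sampling follow from the autoregressive factorization p(x) = ∏_i p(x_i | x_1, ..., x_{i-1}) in general. The sketch below is a toy illustration of that factorization only, not the AMPS model: the logistic conditional, the names `conditional_p1`, `probability`, and `sample`, and the random parameters are all assumptions made for the example.

```python
import itertools
import numpy as np

def conditional_p1(prefix, weights, bias):
    """P(x_i = 1 | x_<i) for a toy logistic conditional (an assumption, not AMPS)."""
    i = len(prefix)
    logit = bias[i] + float(np.dot(weights[i, :i], prefix))
    return 1.0 / (1.0 + np.exp(-logit))

def probability(x, weights, bias):
    """Exact normalized probability of a binary configuration via the chain rule."""
    p = 1.0
    for i, xi in enumerate(x):
        p1 = conditional_p1(np.asarray(x[:i], dtype=float), weights, bias)
        p *= p1 if xi == 1 else 1.0 - p1
    return p

def sample(weights, bias, rng):
    """Ancestral sampling: draw each variable from its conditional, in order."""
    x = []
    for _ in range(len(bias)):
        p1 = conditional_p1(np.asarray(x, dtype=float), weights, bias)
        x.append(int(rng.random() < p1))
    return x

rng = np.random.default_rng(0)
n = 4
weights = rng.normal(size=(n, n))  # only the strictly lower triangle is used
bias = rng.normal(size=n)

# Summing over all 2^n configurations should give 1 up to rounding,
# with no partition function to estimate.
total = sum(probability(x, weights, bias)
            for x in itertools.product([0, 1], repeat=n))
draw = sample(weights, bias, rng)
```

Because every conditional is itself normalized, the product is normalized by construction, and each call to `sample` returns an independent draw from the model without a Markov chain.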

Boltzmann machines as two-dimensional tensor networks

May 10, 2021

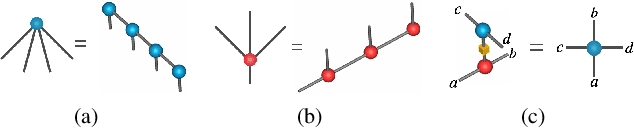

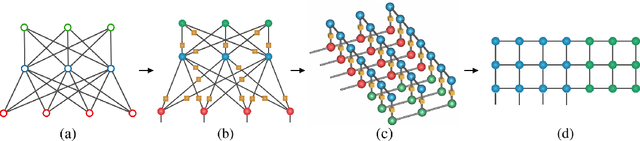

Abstract: Restricted Boltzmann machines (RBM) and deep Boltzmann machines (DBM) are important models in machine learning, and have recently found numerous applications in quantum many-body physics. We show that there are fundamental connections between them and tensor networks. In particular, we demonstrate that any RBM or DBM can be exactly represented as a two-dimensional tensor network. This representation gives an understanding of the expressive power of RBM and DBM in terms of the entanglement structures of the tensor networks, and also provides an efficient tensor network contraction algorithm for computing the partition function of RBM and DBM. Using numerical experiments, we demonstrate that the proposed algorithm is much more accurate than state-of-the-art machine learning methods in estimating the partition function of restricted Boltzmann machines and deep Boltzmann machines, and has potential applications in training deep Boltzmann machines for general machine learning tasks.
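For context on the quantity being estimated, the partition function of a small binary RBM can be computed exactly by enumeration. The sketch below is not the tensor network contraction algorithm of the paper; it assumes the standard energy E(v, h) = -a·v - b·h - vᵀWh with units in {0, 1}, and the function names and random parameters are hypothetical. It only shows the exact reference value that approximate estimators are compared against on small instances.

```python
import itertools
import numpy as np

def log_z_full(W, a, b):
    """log Z by summing exp(-E(v, h)) over every visible and hidden configuration."""
    n_v, n_h = W.shape
    terms = []
    for v in itertools.product([0, 1], repeat=n_v):
        v = np.array(v, dtype=float)
        for h in itertools.product([0, 1], repeat=n_h):
            h = np.array(h, dtype=float)
            terms.append(a @ v + b @ h + v @ W @ h)
    return float(np.logaddexp.reduce(terms))

def log_z_marginal(W, a, b):
    """log Z with the hidden units summed out analytically for each visible state."""
    n_v, _ = W.shape
    terms = []
    for v in itertools.product([0, 1], repeat=n_v):
        v = np.array(v, dtype=float)
        # Each hidden unit contributes a factor 1 + exp(b_j + sum_i v_i W_ij).
        terms.append(a @ v + np.sum(np.logaddexp(0.0, b + v @ W)))
    return float(np.logaddexp.reduce(terms))

rng = np.random.default_rng(1)
W = rng.normal(scale=0.5, size=(5, 3))  # 5 visible units, 3 hidden units
a = rng.normal(size=5)                  # visible biases
b = rng.normal(size=3)                  # hidden biases

lz_full = log_z_full(W, a, b)
lz_marg = log_z_marginal(W, a, b)
```

Both routines cost exponential time in the number of visible units, which is why approximate methods are needed for models of realistic size.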
